What are Machine Learning Tools?

Machine Learning Tools are a set of software applications and services that facilitate the implementation and deployment of machine learning models. These tools aid in data preprocessing, algorithm selection, training, and model evaluation.

Purpose of Machine Learning Tools

Machine Learning Tools simplify and automate the process of creating and deploying machine learning models. They accelerate the development process by offering a standardized framework and reusable components.

Components of Machine Learning Tools

Machine learning tools are typically made up of several components: data preparation tools, algorithm libraries, visualizations for assessing model performance, and deployment tools.

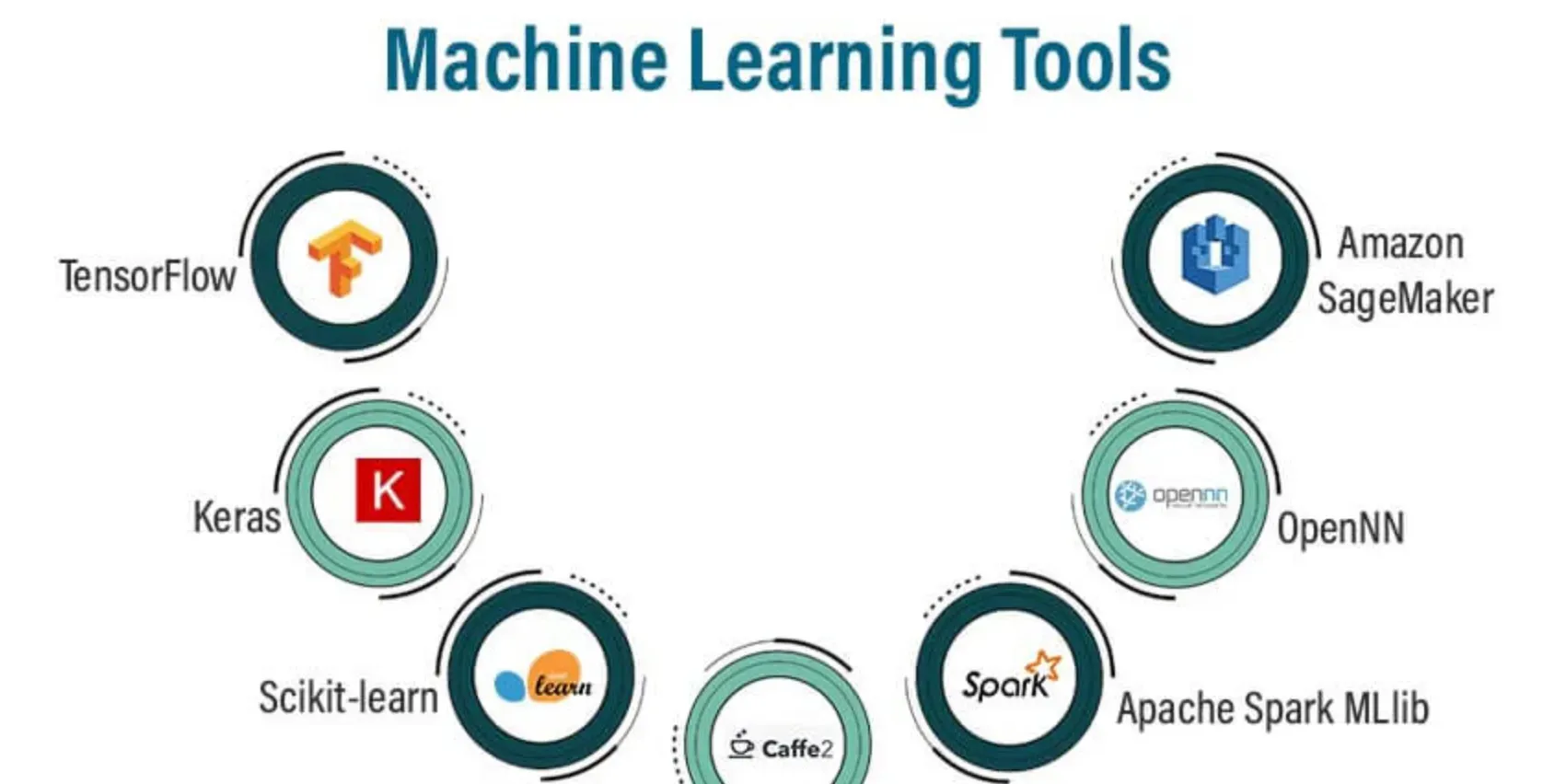

Examples of Machine Learning Tools

Prominent examples of machine learning tools include TensorFlow, scikit-learn, Keras, and PyTorch. These tools provide comprehensive, flexible platforms for developing and deploying machine learning models.

Why Use Machine Learning Tools?

This section explores the benefits of using machine learning tools for data analysis and machine learning tasks.

Accelerating Development

Machine Learning Tools speed up the development process by providing libraries of algorithms and preprocessing techniques. This eliminates the need to build these functions from scratch.

Simplifying Complex Processes

Machine learning involves complex mathematical and statistical processes. Machine learning tools hide much of this complexity behind higher-level interfaces, making model development more accessible to non-experts.

Enhancing Accuracy and Efficiency

These tools provide efficient, well-tested implementations of machine learning algorithms. Using them speeds up computation (for example, through vectorized or GPU-accelerated code) and reduces the risk of implementation errors that could hurt model accuracy.

Facilitating Scalability

Many machine learning tools are designed to scale: they can handle increasingly large datasets and more complex computations, for instance by distributing work across multiple processors or machines. This makes them valuable in big data environments.

When to Use Machine Learning Tools?

This section describes scenarios in which machine learning tools are especially useful.

Large Datasets

When dealing with large datasets, machine learning tools are beneficial because they can process big data efficiently.

Complex Algorithms

When implementing complex machine learning algorithms, these tools come with ready-made functions and libraries, easing the development process.

Visualization Needs

Some machine learning tools provide visualization capabilities, helping users understand the performance and behavior of their models.

Real-time Predictions

Machine learning tools that support real-time predictions are useful for applications where immediate decisions based on data are needed.

Where are Machine Learning Tools Used?

This section covers sectors in which machine learning tools play a significant role in improving performance and outcomes.

Healthcare

In healthcare, these tools aid in drug discovery, patient diagnosis, and risk factor identification, among other tasks.

Finance

In finance, machine learning tools help forecast market trends, detect fraudulent transactions, and automate trading activities.

E-commerce

In e-commerce, these tools are pivotal in providing personalized product recommendations and improving customer experiences.

Autonomous Vehicles

In the autonomous vehicle industry, machine learning tools aid in developing algorithms for situation awareness, decision making, and path planning.

How to Choose a Machine Learning Tool?

Choosing the right machine learning tool can significantly impact the success of your project. This section outlines the criteria to consider when choosing a tool.

Understand Your Requirements

It's crucial to assess what you require from a machine learning tool. Consider your project's scope, complexity, and the size of the dataset.

Evaluate Tool Capabilities

Determine whether the tool can effectively handle your data, support the required algorithms, and meet your project's specific requirements.

Consider Ease of Use

Evaluate how user-friendly the tool is. Is it easy to learn and use? Does it have clear documentation and a strong user community?

Check Scalability

Consider whether the tool can handle large datasets and complex computations. This is especially important for big data projects.

How to Use Machine Learning Tools?

The following steps outline a typical workflow for using machine learning tools to process data and extract insights.

Step 1: Data Preprocessing

Data preprocessing is arguably one of the most critical steps in the machine learning pipeline. It involves preparing the raw data to feed into a machine learning algorithm.

Cleaning Data

First, you clean the data by handling missing values. Depending on the nature of your data, you might choose to fill in missing values with a predetermined value like zero or the mean value of the given variable (mean imputation). Alternatively, you might remove data entries that contain missing values.
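Both strategies can be sketched in a few lines of plain Python. This is a minimal illustration on a hypothetical toy dataset; the `age` and `income` columns and the helper names `mean_impute` and `drop_missing` are assumptions, not part of any library. In practice, a tool such as pandas provides `DataFrame.fillna` and `DataFrame.dropna` for the same jobs.

```python
# Hypothetical toy dataset: each row is a dict, and None marks a missing value.
rows = [
    {"age": 25, "income": 40000},
    {"age": None, "income": 52000},
    {"age": 35, "income": None},
    {"age": 45, "income": 61000},
]


def mean_impute(data, column):
    """Return a copy of `data` with missing values in `column` replaced by the column mean."""
    observed = [row[column] for row in data if row[column] is not None]
    fill_value = sum(observed) / len(observed)
    return [
        {**row, column: fill_value if row[column] is None else row[column]}
        for row in data
    ]


def drop_missing(data):
    """Return only the rows that contain no missing values."""
    return [row for row in data if all(value is not None for value in row.values())]


imputed = mean_impute(mean_impute(rows, "age"), "income")
complete_rows = drop_missing(rows)

print(imputed[1]["age"])     # 35.0, the mean of 25, 35 and 45
print(imputed[2]["income"])  # 51000.0
print(len(complete_rows))    # 2
```

Imputation keeps all four rows but invents values; dropping keeps only real values but loses half of this small dataset. Which trade-off is acceptable depends on how much data you have and why values are missing.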

Normalizing

Once the data is clean, it may be necessary to normalize it, especially when the dataset contains attributes with varying scales. Normalizing ensures all data is on a similar scale, preventing attributes with larger values from dominating over those with smaller ones.
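Two common ways to put attributes on a similar scale are min-max scaling (rescale to the range [0, 1]) and standardization (shift to mean 0, scale to standard deviation 1). The sketch below implements both by hand on hypothetical `ages` and `incomes` lists; libraries such as scikit-learn offer the same operations as `MinMaxScaler` and `StandardScaler`.

```python
def min_max_scale(values):
    """Linearly rescale values to the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        # A constant attribute carries no scale information; map it to 0.0.
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


def standardize(values):
    """Shift values to mean 0 and scale them to unit (population) standard deviation."""
    mean = sum(values) / len(values)
    std = (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


# Hypothetical attributes on very different scales.
ages = [20, 30, 40]
incomes = [40000, 50000, 60000]

print(min_max_scale(ages))     # [0.0, 0.5, 1.0]
print(min_max_scale(incomes))  # [0.0, 0.5, 1.0]
print(standardize(ages))       # values centered on 0
```

After scaling, a change in income no longer outweighs a change in age simply because incomes are measured in larger numbers.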

Data Transformation

Finally, you may need to apply specific transformations to your data. For instance, many machine learning algorithms require numerical input, so categorical data must first be converted into a numerical format. A common technique is one-hot encoding, which represents each category as its own binary column.
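A hand-written one-hot encoder shows the idea. The `colors` data and the `one_hot_encode` helper are hypothetical; in practice you would typically reach for pandas `get_dummies` or scikit-learn's `OneHotEncoder`.

```python
def one_hot_encode(values):
    """Map each categorical value to a binary vector containing a single 1."""
    categories = sorted(set(values))  # sorted for a fixed, reproducible column order
    position = {category: i for i, category in enumerate(categories)}
    encoded = []
    for value in values:
        row = [0] * len(categories)
        row[position[value]] = 1
        encoded.append(row)
    return categories, encoded


colors = ["red", "green", "blue", "green"]  # hypothetical categorical attribute
categories, encoded = one_hot_encode(colors)
print(categories)  # ['blue', 'green', 'red']
print(encoded)     # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]
```

A production encoder would also remember the categories seen during training, so that new data is encoded with the same columns in the same order.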

Step 2: Choosing and Implementing an Algorithm

The next step in the process is to choose a suitable machine learning algorithm based on the problem type and the data.

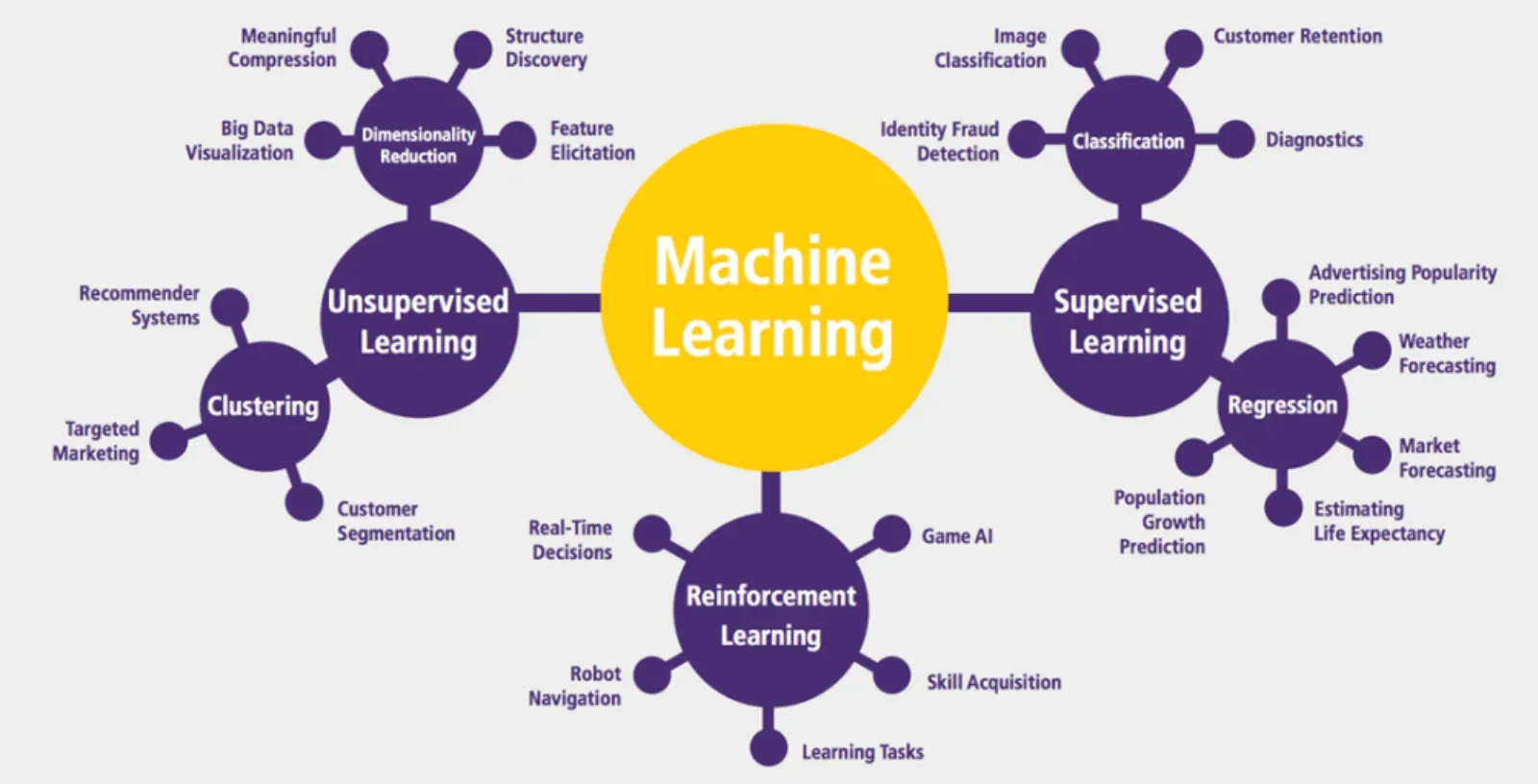

Problem Type

Supervised learning problems are those where the expected output is known, such as predicting house prices or classifying emails as spam or not spam. Unsupervised learning problems, on the other hand, don't have a known output. Instead, these types of problems focus on discovering underlying patterns or structures within the data.

For example, if you are working on an image classification task, you could use an algorithm from the supervised learning family, such as a convolutional neural network (CNN).

Algorithm Implementation

Once you've chosen your algorithm, you need to implement it. Machine learning tools offer libraries of pre-built algorithms, eliminating the need to code from scratch.

Step 3: Training the Model

Training involves feeding your preprocessed data into the algorithm to create a predictive model.

Model Parameters and Hyperparameters

As the model trains, it learns parameters from the data: values, such as the weights of a linear model or a neural network, that the training process adjusts automatically. Hyperparameters, by contrast, are not learned. You set them before training begins; examples include the learning rate and the number of training passes (epochs).

Optimization

During training, the goal is to minimize the loss, a function that measures the difference between the model's predictions and the actual values. Optimization algorithms such as Stochastic Gradient Descent (SGD) do this by repeatedly adjusting the parameters in the direction that reduces the loss.
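The sketch below trains a one-feature linear model, y ≈ w·x + b, with plain SGD on squared error. Here `w` and `b` are the learned parameters, while `learning_rate` and `epochs` are hyperparameters fixed before training. The data is hypothetical and noise-free; real tools provide tuned versions of this loop, for example `torch.optim.SGD` in PyTorch or `SGDRegressor` in scikit-learn.

```python
import random


def sgd_linear_regression(xs, ys, learning_rate=0.01, epochs=500, seed=0):
    """Fit y = w * x + b with stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0  # parameters: learned from the data
    samples = list(zip(xs, ys))
    for _ in range(epochs):  # epochs and learning_rate are hyperparameters
        rng.shuffle(samples)  # visit the samples in a new random order each epoch
        for x, y in samples:
            error = (w * x + b) - y
            # Gradient of the per-sample loss (w*x + b - y)**2 with respect to w and b.
            w -= learning_rate * 2 * error * x
            b -= learning_rate * 2 * error
    return w, b


def mse_loss(xs, ys, w, b):
    """Mean squared error of the model w * x + b on the given data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]

print(mse_loss(xs, ys, 0.0, 0.0))  # 33.0, the loss before training
w, b = sgd_linear_regression(xs, ys)
print(w, b, mse_loss(xs, ys, w, b))  # w and b close to 2 and 1, loss close to 0
```

If the learning rate is set too high, the updates overshoot and the loss can grow instead of shrinking, which is one reason hyperparameters need tuning.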

Step 4: Evaluating and Deploying the Model

After training, it's essential to check how well your model is performing.

Evaluation Metrics

Evaluation metrics like accuracy, precision, recall, or mean squared error help assess model performance. The chosen metric should align with your problem type and business objectives.
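To make these metrics concrete, here they are computed by hand for a hypothetical spam classifier and a hypothetical price predictor. The function names are chosen for illustration; scikit-learn exposes equivalents in `sklearn.metrics` (`accuracy_score`, `precision_score`, `recall_score`, `mean_squared_error`).

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true, y_pred, positive=1):
    """Of the samples predicted positive, the fraction that really are positive."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    return tp / (tp + fp) if tp + fp else 0.0


def recall(y_true, y_pred, positive=1):
    """Of the truly positive samples, the fraction the model identified."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    return tp / (tp + fn) if tp + fn else 0.0


def mean_squared_error(y_true, y_pred):
    """Average squared difference between true and predicted values (regression)."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical classifier output (1 = spam, 0 = not spam).
labels = [1, 0, 1, 1, 0, 0]
predicted = [1, 0, 0, 1, 0, 0]
print(accuracy(labels, predicted))   # 5 of 6 correct, about 0.833
print(precision(labels, predicted))  # 1.0: every email flagged as spam was spam
print(recall(labels, predicted))     # about 0.667: one spam email was missed

# Hypothetical regression output.
print(mean_squared_error([3.0, 5.0, 2.5], [2.5, 5.0, 3.0]))  # about 0.167
```

The classifier has perfect precision but imperfect recall, which is why the right metric depends on whether false positives or false negatives are more costly for your business.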

Model Deployment

Once the model has met the desired performance level, it's ready for deployment. This could mean integrating it into a larger system, like a recommendation system on a website, or it could involve deploying the model to a production environment where it can start making real-time predictions. Machine learning tools often offer easy-to-use interfaces that simplify the deployment process.
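A common deployment pattern is to save the trained model at the end of training and load it once in the serving application, which then answers prediction requests. The sketch below shows that flow with Python's standard `json` module for a hypothetical linear model; the `load_model` helper and the parameter values are illustrative assumptions. Real tools use their own formats, such as `pickle` or `joblib` for scikit-learn, `torch.save` for PyTorch, and SavedModel for TensorFlow.

```python
import json

# Hypothetical parameters produced by training a linear model y = w * x + b.
trained = {"model_type": "linear", "w": 2.0, "b": 1.0}

# End of training: serialize the model so another process can load it.
serialized = json.dumps(trained)


def load_model(text):
    """Rebuild a prediction function from serialized parameters."""
    params = json.loads(text)

    def predict(x):
        return params["w"] * x + params["b"]

    return predict


# Serving application: load once, then reuse for every incoming request.
predict = load_model(serialized)
print(predict(3.0))  # 7.0
```

Loading the model once, rather than on every request, keeps per-prediction latency low, which matters for real-time use.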

Who Uses Machine Learning Tools?

Machine learning tools aren’t just for data scientists. A variety of professionals use these tools for different purposes.

Data Scientists

Data scientists use these tools for developing predictive models and discovering patterns and insights from data.

ML Engineers

Machine Learning Engineers use these tools to design, build, and deploy machine learning models.

Researchers

Researchers use machine learning tools to perform complex analyses and conduct experiments in areas like genetics, climate studies, and social sciences.

Developers

Web and software developers use these tools to incorporate machine learning capabilities into software applications and services.

Glossary of Machine Learning Tools Terms

This glossary defines some commonly used terms related to machine learning tools.

Algorithm Library

An Algorithm Library is a collection of pre-implemented machine learning algorithms that users can apply directly without coding them from scratch.

Data Preprocessing

Data Preprocessing involves cleaning and transforming raw data into a suitable, standardized format that can be used for machine learning.

Feature Extraction

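Feature Extraction is the process of deriving informative features from raw data, for example by combining or transforming existing attributes, for use in machine learning models. It is closely related to feature selection, which keeps only the most relevant existing attributes.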

Model Training

Model Training involves feeding data into a machine learning algorithm, enabling the model to learn patterns and make predictions.

Model Evaluation

Model Evaluation is the process of assessing the predictive performance and accuracy of a trained machine learning model.

Overfitting

Overfitting is a modeling error that occurs when a machine learning model fits the training data too closely, including its noise, so that it performs poorly on new, unseen data.

Underfitting

Underfitting is when a machine learning model cannot accurately capture the underlying patterns of the data, resulting in poor performance on both the training data and new data.

Regularization

Regularization is a technique to prevent overfitting by adding a penalty term to the loss function, encouraging simpler models.
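As one common instance, L2 (ridge) regularization adds the squared magnitude of the weights to a mean-squared-error loss. In the formula below, which is a sketch with symbols introduced here for illustration, n is the number of training samples, ŷ_i is the model's prediction for sample i, w_1 … w_d are the model's d weights, and λ ≥ 0 is a hyperparameter that sets the strength of the penalty.

```latex
J(\mathbf{w}) = \frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2 + \lambda \sum_{j=1}^{d} w_j^2
```

Setting λ = 0 recovers the unregularized loss, while larger values push the weights toward zero and yield a simpler model. L1 (lasso) regularization replaces the squared term with λ times the sum of |w_j|, which can drive some weights exactly to zero.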

Supervised Learning

Supervised Learning is a type of machine learning where the model is trained on a labeled dataset, meaning the correct outputs are already known and provided during training.

Unsupervised Learning

Unsupervised Learning is a type of machine learning where the model is trained on an unlabeled dataset, and must identify patterns and structures without any prior knowledge of the correct outputs.

Decision Tree

A Decision Tree is a type of machine learning algorithm that makes decisions based on conditions, structured in a tree-like model of decisions and their possible consequences.

Neural Network

A Neural Network is a model made of layers of interconnected nodes (neurons), loosely inspired by the structure of the human brain, designed to recognize patterns in data.

Clustering

Clustering is an unsupervised learning method used to group data points with similar characteristics together.
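k-means is one widely used clustering algorithm, and a short from-scratch version shows the idea: it alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its points. The points below are hypothetical; scikit-learn provides a production implementation as `sklearn.cluster.KMeans`.

```python
import math
import random


def nearest(point, centroids):
    """Index of the centroid closest to `point` (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(point, centroids[i]))


def k_means(points, k, max_iterations=100, seed=0):
    """Group points into k clusters with Lloyd's k-means algorithm."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k distinct data points
    for _ in range(max_iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [nearest(p, centroids) for p in points]
        # Update step: move each centroid to the mean of its assigned points.
        new_centroids = []
        for i in range(k):
            members = [p for p, label in zip(points, labels) if label == i]
            if members:
                new_centroids.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centroids.append(centroids[i])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # the centroids stopped moving
        centroids = new_centroids
    return centroids, [nearest(p, centroids) for p in points]


# Hypothetical 2-D data with two well-separated groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.0)]
centroids, labels = k_means(points, k=2)
print(labels)  # the first three points share one label, the last three the other
```

No labels are given to the algorithm; the grouping emerges purely from the distances between points, which is what makes clustering unsupervised.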

Classification

Classification refers to a supervised learning approach for predicting class labels for given data inputs.

Regression

Regression is a type of supervised learning algorithm used to predict a continuous outcome variable based on one or more input variables.
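For a single input variable, ordinary least-squares linear regression has a closed-form solution: the slope is the covariance of x and y divided by the variance of x. The sketch below fits it to hypothetical house-size and price data; the helper name is illustrative, and scikit-learn's `LinearRegression` handles the general multi-variable case.

```python
def fit_simple_linear_regression(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the shared 1/n factors cancel.
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean  # the fitted line passes through the means
    return slope, intercept


# Hypothetical data: house size in square meters vs. price in thousands.
sizes = [50, 80, 100, 120]
prices = [150, 240, 300, 360]
slope, intercept = fit_simple_linear_regression(sizes, prices)
print(slope, intercept)        # 3.0 0.0
print(slope * 90 + intercept)  # 270.0, the predicted price for a 90 m² house
```

Because the output is a continuous number rather than a class label, this is regression rather than classification.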

Frequently Asked Questions (FAQs)

What is the best machine learning tool?

The choice of a “best” machine learning tool largely depends on individual project needs, data, and the user’s programming proficiency. However, tools like TensorFlow, Keras, and scikit-learn are widely used.

Are machine learning tools difficult to learn?

The learning curve for machine learning tools can vary significantly, depending on the tool itself and the user's programming skills and knowledge of machine learning concepts.

Can I use machine learning tools without knowing coding?

Some machine learning tools do not require coding knowledge and are designed to be user-friendly for beginners. Examples include RapidMiner and KNIME.

Are machine learning tools expensive?

Many powerful machine learning tools are open-source and free to use. Examples include TensorFlow, Keras, and scikit-learn.

Can machine learning tools be used for data visualization?

Yes, tools like Matplotlib and Seaborn integrate well with other machine learning tools for data visualization. They help to understand data patterns and model performance.