What are Machine Learning Algorithms?

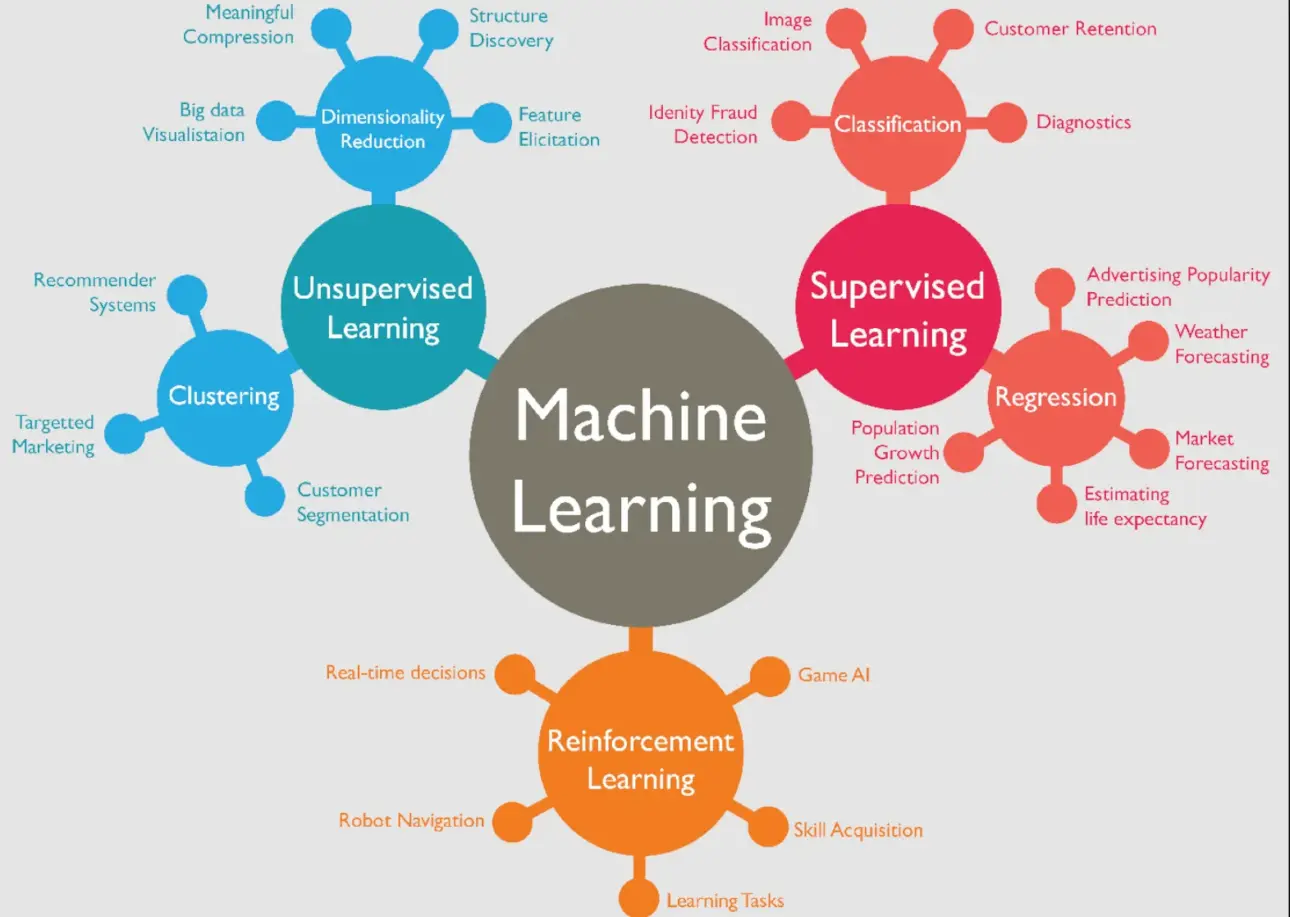

Machine learning algorithms are a set of rules and statistical techniques used by machines to learn patterns from data and make informed decisions. These algorithms can broadly be categorized into supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning, with each category serving specific use cases.

Why are Machine Learning Algorithms Used?

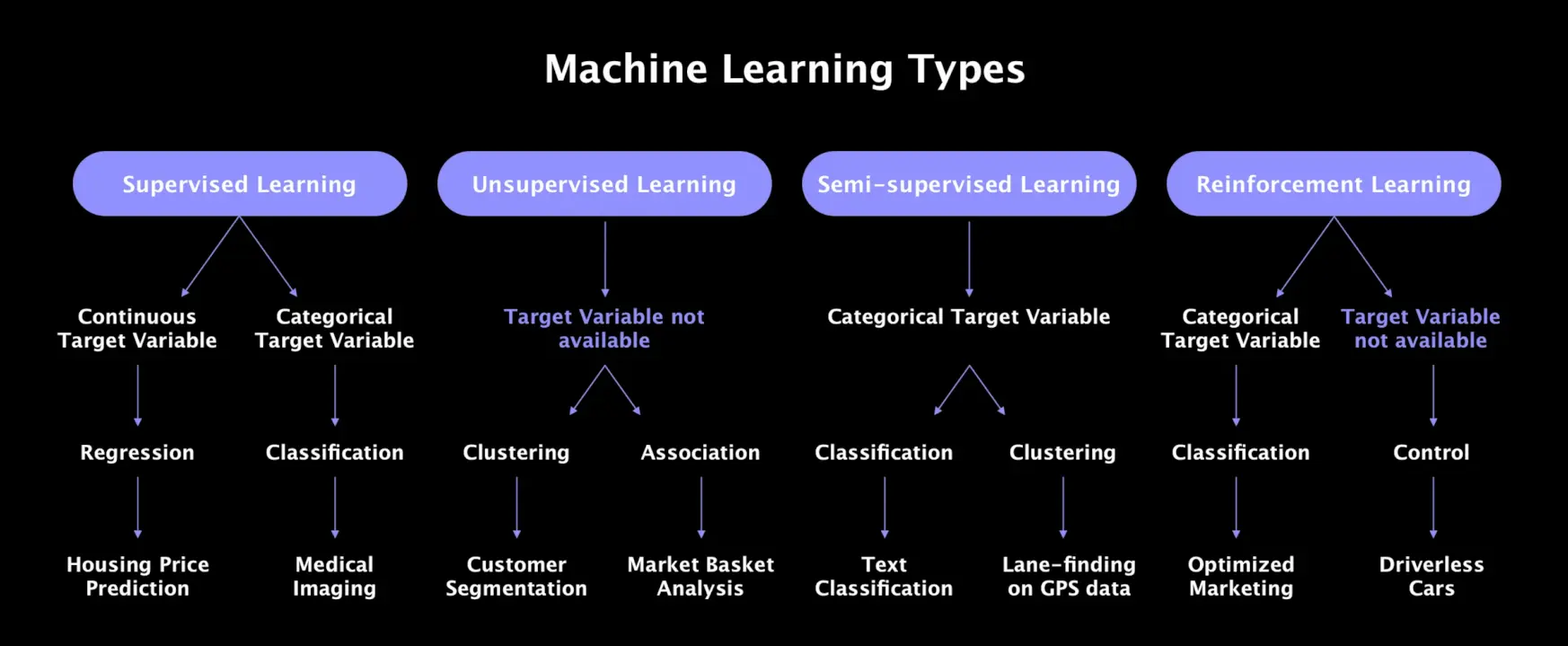

Machine learning algorithms are used to automate complex tasks that require pattern recognition, data analysis, or decision-making. They help predict outcomes, detect anomalies, automate repetitive tasks, and improve user experiences, which can significantly enhance business operations and strategic initiatives.

Who Uses Machine Learning Algorithms?

Organizations across sectors—ranging from healthcare, marketing, and finance to technology, retail, and transportation—use machine learning algorithms. Scientists, engineers, data analysts, product developers, and marketers are among the key professionals leveraging machine learning algorithms to solve complex problems and innovate.

When are Machine Learning Algorithms Applied?

Machine learning algorithms are applied when there's a need to build predictive models, identify hidden patterns, automate processes, or make informed decisions without explicit programming. This can involve a variety of tasks, from predicting customer behavior and personalizing user experiences to detecting fraud and improving logistics operations.

Where are Machine Learning Algorithms Implemented?

Machine learning algorithms are implemented across a myriad of platforms and applications. This could include streaming services for personalized recommendations, healthcare applications for patient diagnostics, financial systems for risk assessment, or e-commerce environments for predicting sales—essentially, anywhere data-driven insights can create value.

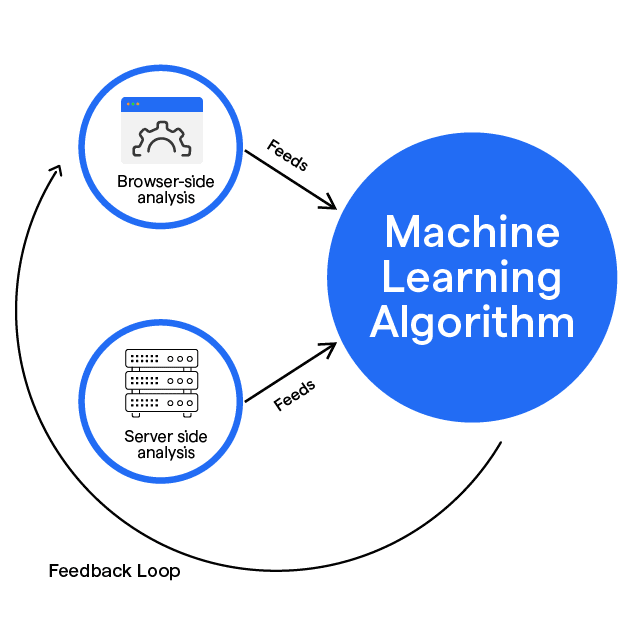

Types of Machine Learning Algorithms

In this section, we'll explore different types of Machine Learning algorithms, giving you a more comprehensive understanding of how these methods contribute to the creation of intelligent systems.

Supervised Learning

Supervised Learning algorithms are designed to learn by example. The name "supervised" comes from the way the algorithm is trained: it is given labeled training data, in which each input is paired with the correct output, and it learns a mapping from inputs to outputs that it can then apply to new, unlabeled inputs.

Unsupervised Learning

Unsupervised Learning algorithms, on the other hand, work with unlabeled data and discover interesting structures, such as clusters or groupings, on their own. Because they don't require labeled examples, they are widely used for exploratory data analysis.

Semi-supervised Learning

Semi-supervised Learning algorithms lie somewhere between supervised and unsupervised learning. They train on a small amount of labeled data together with a larger amount of unlabeled data, using the unlabeled data to improve learning accuracy.
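
One common semi-supervised technique is self-training (pseudo-labeling). The sketch below is a minimal illustration, assuming a simple nearest-centroid classifier as the base model; the function names are hypothetical, and self-training is only one of several semi-supervised approaches. The model repeatedly labels the unlabeled point it is most confident about and refits on the enlarged labeled set.

```python
import math


def class_centroids(points, labels):
    """Mean point of each class (points are tuples of numbers)."""
    groups = {}
    for p, y in zip(points, labels):
        groups.setdefault(y, []).append(p)
    return {y: tuple(sum(c) / len(ps) for c in zip(*ps)) for y, ps in groups.items()}


def predict_with_margin(centroids, p):
    """Nearest-centroid label plus a confidence margin: how much closer the
    nearest centroid is than the second-nearest. Assumes at least two classes."""
    ranked = sorted((math.dist(p, c), y) for y, c in centroids.items())
    return ranked[0][1], ranked[1][0] - ranked[0][0]


def self_train(X, y, unlabeled):
    """Self-training: one point at a time, pseudo-label the unlabeled point the
    current model is most confident about, then refit on the enlarged set."""
    X, y, pool = list(X), list(y), list(unlabeled)
    while pool:
        cents = class_centroids(X, y)
        best = max(pool, key=lambda p: predict_with_margin(cents, p)[1])
        X.append(best)
        y.append(predict_with_margin(cents, best)[0])  # the pseudo-label
        pool.remove(best)
    return class_centroids(X, y)
```

For example, with only two labeled points, `self_train([(0.0, 0.0), (10.0, 10.0)], ["a", "b"], unlabeled_points)` gradually pulls each class centroid toward the unlabeled points that belong to it.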

Reinforcement Learning

Reinforcement Learning trains algorithms using a system of rewards and penalties. An RL agent learns by interacting with its environment: it makes choices (actions), receives feedback in the form of rewards or penalties, and gradually learns which actions maximize its cumulative reward.
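
Q-learning is one widely used RL algorithm. The following is a minimal sketch of tabular Q-learning, assuming a hypothetical toy environment: a five-state corridor in which moving right into the last state earns a reward of +1 and every other step earns 0. The environment, function names, and hyperparameter values are illustrative choices, not part of any library.

```python
import random


def q_learning(n_states=5, episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a hypothetical corridor: states 0..n_states-1,
    actions 0 (left) and 1 (right). Entering the last state ends the episode
    with reward +1; every other step gives reward 0."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        s = 0
        while s != goal:
            # Epsilon-greedy: explore at random sometimes (or on ties), otherwise exploit.
            if rng.random() < epsilon or q[s][0] == q[s][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[s][0] > q[s][1] else 1
            s_next = max(0, s - 1) if a == 0 else s + 1
            reward = 1.0 if s_next == goal else 0.0
            # Move Q(s, a) toward reward + discounted value of the best next action.
            future = 0.0 if s_next == goal else max(q[s_next])
            q[s][a] += alpha * (reward + gamma * future - q[s][a])
            s = s_next
    return q


def greedy_policy(q):
    """Best learned action in each state: 0 = left, 1 = right."""
    return [0 if left > right else 1 for left, right in q]
```

After training, the learned policy in every non-goal state should be "move right", and the Q-values shrink by a factor of `gamma` for each extra step away from the goal.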

Ensemble Learning

Ensemble Learning combines several base models to produce a single, stronger predictive model. It seeks to amplify the strengths of the individual models and mitigate their weaknesses.

Popular Machine Learning Algorithms

In this section, we will dive deeper into some popular machine learning algorithms:

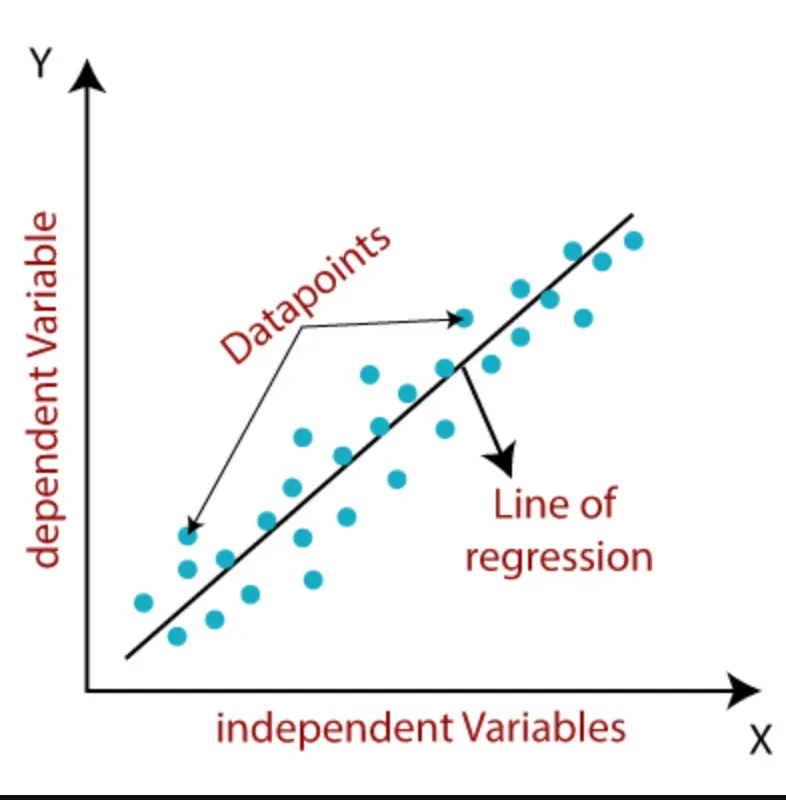

Linear Regression

Linear regression is a supervised learning algorithm that models the relationship between a continuous target variable and one or more independent variables. By fitting a linear equation to the data, it can make predictions based on new input.
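
With a single independent variable, the least-squares fit has a closed form: the slope is the covariance of x and y divided by the variance of x, and the intercept makes the line pass through the point of means. A minimal sketch (function names are hypothetical):

```python
def fit_simple_linear_regression(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (one independent variable)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # slope = covariance(x, y) / variance(x); the common 1/n factors cancel
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope, intercept, x):
    """Prediction for a new input x."""
    return slope * x + intercept
```

For instance, fitting `xs = [1, 2, 3, 4]` and `ys = [3, 5, 7, 9]` recovers the line y = 2x + 1, so the prediction for x = 10 is 21. With several independent variables, the same idea is usually solved with matrix methods (the normal equations or a least-squares solver).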

Support Vector Machine (SVM)

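SVM is a supervised learning algorithm widely used for classification tasks. It finds the hyperplane that separates the classes with the largest margin. Using the kernel trick, it can also handle data that is not linearly separable by implicitly mapping the data points into a higher-dimensional space where a linear separator exists. A variant called support vector regression (SVR) adapts the method to regression tasks.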
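
The sketch below trains a linear SVM (no kernel) by full-batch subgradient descent on the regularized hinge loss, which is one simple way to approximate the maximum-margin hyperplane; production libraries use more specialized solvers. The function names and the values of `lam`, `lr`, and `epochs` are illustrative assumptions.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=1000):
    """Minimize lam/2 * ||w||^2 + mean(max(0, 1 - y_i * (w . x_i + b)))
    by subgradient descent. Labels must be +1 or -1."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        # Points with margin < 1 are inside the margin or misclassified,
        # so their hinge loss contributes to the subgradient.
        violators = [(xi, yi) for xi, yi in zip(X, y) if yi * (dot(w, xi) + b) < 1]
        grad_w = [lam * w[j] - sum(yi * xi[j] for xi, yi in violators) / n for j in range(d)]
        grad_b = -sum(yi for _, yi in violators) / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def svm_predict(w, b, x):
    """Classify by which side of the hyperplane w . x + b = 0 the point falls on."""
    return 1 if dot(w, x) + b >= 0 else -1
```

A kernelized SVM would replace the explicit dot products with a kernel function, which this linear sketch does not attempt.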

Naive Bayes

Naive Bayes is a supervised learning algorithm, based on Bayes' theorem, that is primarily used for classification tasks. It assumes that the features are conditionally independent of each other given the class, even though this often does not hold in real-life scenarios.
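
As one concrete variant, Gaussian Naive Bayes assumes each continuous feature is normally distributed within each class. The minimal sketch below (function names are hypothetical) shows where the independence assumption enters: the per-feature log-likelihoods are simply added together.

```python
import math


def fit_gaussian_nb(X, y):
    """For each class: prior probability plus the mean and variance of each feature."""
    model = {}
    for label in sorted(set(y)):
        rows = [x for x, t in zip(X, y) if t == label]
        columns = list(zip(*rows))
        means = [sum(col) / len(rows) for col in columns]
        # A tiny constant keeps the variance away from zero.
        variances = [sum((v - m) ** 2 for v in col) / len(rows) + 1e-9
                     for col, m in zip(columns, means)]
        model[label] = (len(rows) / len(X), means, variances)
    return model


def predict_gaussian_nb(model, x):
    """Pick the class maximizing log P(class) + sum_j log P(x_j | class).
    Summing per-feature terms is the 'naive' conditional-independence assumption."""
    def log_posterior(label):
        prior, means, variances = model[label]
        score = math.log(prior)
        for v, m, var in zip(x, means, variances):
            score += -0.5 * math.log(2 * math.pi * var) - (v - m) ** 2 / (2 * var)
        return score
    return max(model, key=log_posterior)
```

Working with log-probabilities rather than multiplying raw probabilities avoids numerical underflow when there are many features.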

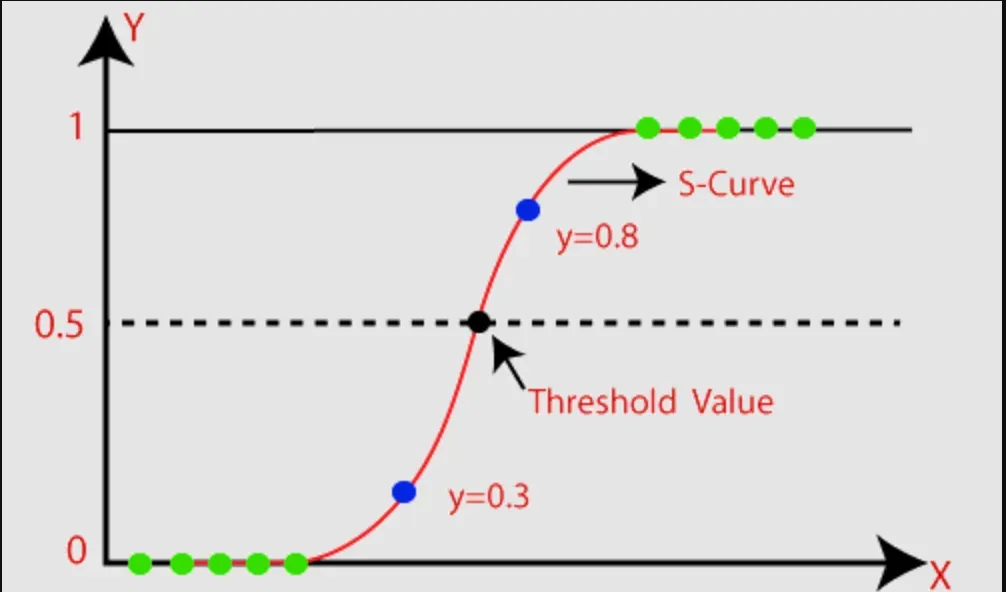

Logistic Regression

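Logistic regression, despite its name, is a supervised learning algorithm commonly used for binary classification problems. It applies the logistic (sigmoid) function to a weighted sum of the input features, producing a probability between 0 and 1 that can be thresholded (typically at 0.5) to assign a class.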
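
A minimal sketch of logistic regression trained with batch gradient descent on the average log-loss, assuming labels 0 and 1; the learning rate, epoch count, and function names are illustrative choices.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X, y, lr=0.1, epochs=2000):
    """Batch gradient descent on the average log-loss; labels are 0 or 1."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        # For log-loss, the gradient uses (predicted probability - true label).
        errors = [sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
                  for xi, yi in zip(X, y)]
        w = [w[j] - lr * sum(e * xi[j] for e, xi in zip(errors, X)) / n for j in range(d)]
        b -= lr * sum(errors) / n
    return w, b


def predict_proba(w, b, x):
    """Probability that x belongs to class 1; threshold at 0.5 to get a label."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
```

On one-dimensional data where class 0 sits at negative values and class 1 at positive values, the learned probability is below 0.5 on the negative side, above 0.5 on the positive side, and close to 0.5 near zero.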

K-Nearest Neighbors (kNN)

kNN is a supervised learning algorithm capable of handling both classification and regression tasks. It determines the value or class of a data point based on its nearest neighbors.
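
A minimal sketch of both uses, assuming Euclidean distance (other distance measures are common too); the function names and the default `k=3` are illustrative. Classification takes a majority vote among the k nearest training points, and regression averages their target values.

```python
import math
from collections import Counter


def k_nearest(train_X, train_y, query, k):
    """The k training (point, target) pairs closest to the query."""
    return sorted(zip(train_X, train_y), key=lambda pair: math.dist(pair[0], query))[:k]


def knn_classify(train_X, train_y, query, k=3):
    """Majority vote among the labels of the k nearest neighbors."""
    votes = Counter(label for _, label in k_nearest(train_X, train_y, query, k))
    return votes.most_common(1)[0][0]


def knn_regress(train_X, train_y, query, k=3):
    """Mean target value of the k nearest neighbors."""
    nearest = k_nearest(train_X, train_y, query, k)
    return sum(t for _, t in nearest) / len(nearest)
```

kNN does no work at training time. All of the cost is paid at prediction time, when distances to the stored training points are computed.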

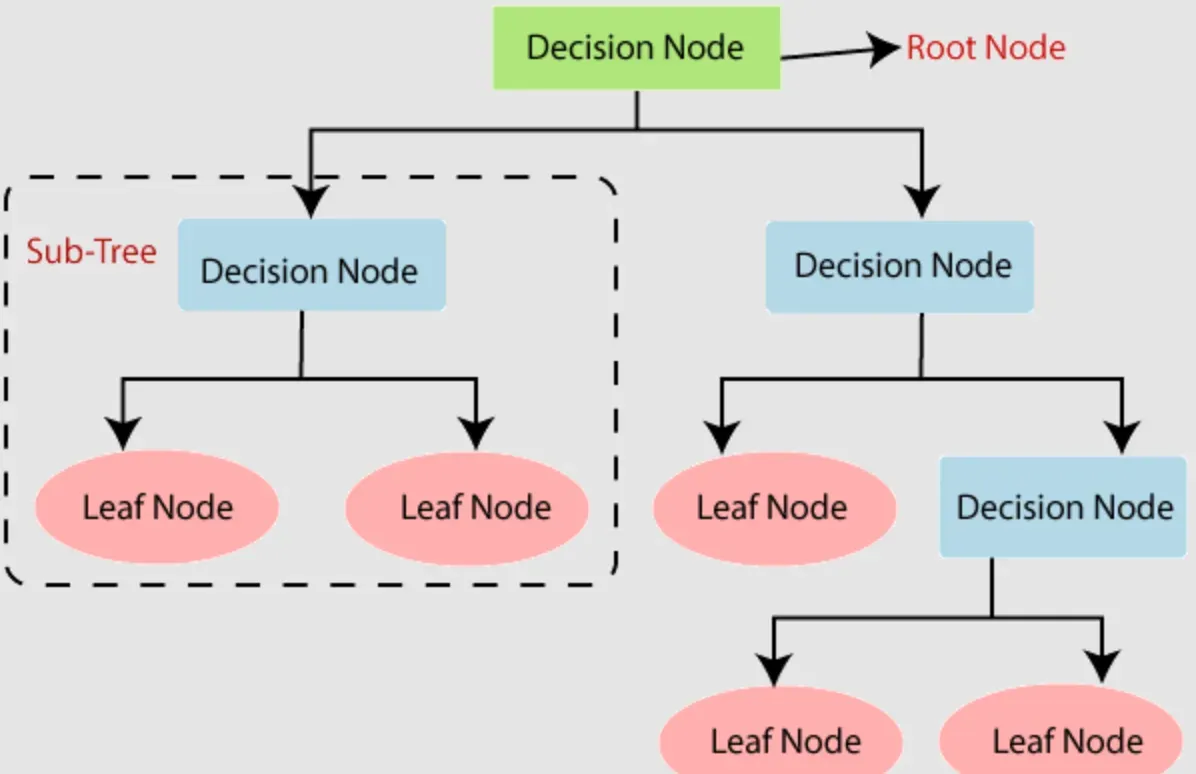

Decision Trees

Decision trees build models by repeatedly asking questions about the features to partition the data. At each step, the algorithm chooses the question (split) that most reduces impurity, measured for example by Gini impurity or entropy, so that each resulting subset contains mostly a single class. Splits that produce purer subsets yield greater information gain.
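
The sketch below shows this splitting procedure for classification, using Gini impurity and questions of the form "is feature j at most some threshold?". It is a minimal illustration with hypothetical function names. Real implementations add more stopping rules, pruning, and faster split search. Leaf labels are assumed not to be tuples, because tuples represent internal nodes here.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 - sum of squared class proportions (0 means the node is pure)."""
    n = len(labels)
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(X, y):
    """Try every 'x[j] <= threshold' question and return the one with the lowest
    weighted Gini impurity of the two child nodes, as (feature, threshold, impurity)."""
    best = None
    for j in range(len(X[0])):
        for threshold in sorted({x[j] for x in X}):
            left = [t for x, t in zip(X, y) if x[j] <= threshold]
            right = [t for x, t in zip(X, y) if x[j] > threshold]
            if not left or not right:
                continue  # this question does not split the data
            impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
            if best is None or impurity < best[2]:
                best = (j, threshold, impurity)
    return best


def build_tree(X, y, depth=0, max_depth=3):
    """Split recursively until a node is pure, cannot be split, or max_depth is reached."""
    split = best_split(X, y) if depth < max_depth and gini(y) > 0 else None
    if split is None:
        return Counter(y).most_common(1)[0][0]  # leaf: majority label
    j, threshold, _ = split
    left = [(x, t) for x, t in zip(X, y) if x[j] <= threshold]
    right = [(x, t) for x, t in zip(X, y) if x[j] > threshold]
    return (j, threshold,
            build_tree([x for x, _ in left], [t for _, t in left], depth + 1, max_depth),
            build_tree([x for x, _ in right], [t for _, t in right], depth + 1, max_depth))


def tree_predict(node, x):
    """Follow the questions from the root down to a leaf label."""
    while isinstance(node, tuple):
        j, threshold, left, right = node
        node = left if x[j] <= threshold else right
    return node
```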

Random Forest

Random forest is an ensemble algorithm comprising multiple decision trees. It uses a technique called bagging to create parallel estimators. When used for classification, the final result is based on the majority vote of the decision trees.
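
A minimal sketch of the idea, with one simplification stated up front: each "tree" here is a depth-1 decision stump rather than a full tree, so that the example stays short. Each stump is trained on a bootstrap sample (bagging) using a random subset of the features, and predictions are combined by majority vote. The function names, the number of trees, and the square-root feature heuristic are illustrative assumptions.

```python
import random
from collections import Counter


def fit_stump(X, y, features):
    """Depth-1 tree: the 'x[j] <= threshold' split with the fewest misclassifications
    over the given features, predicting the majority label on each side."""
    best = None
    for j in features:
        for threshold in sorted({x[j] for x in X}):
            left = [t for x, t in zip(X, y) if x[j] <= threshold]
            right = [t for x, t in zip(X, y) if x[j] > threshold]
            if not left or not right:
                continue
            left_label = Counter(left).most_common(1)[0][0]
            right_label = Counter(right).most_common(1)[0][0]
            errors = sum(t != left_label for t in left) + sum(t != right_label for t in right)
            if best is None or errors < best[0]:
                best = (errors, j, threshold, left_label, right_label)
    if best is None:  # the sample cannot be split: always predict its majority label
        label = Counter(y).most_common(1)[0][0]
        return (0, float("inf"), label, label)
    return best[1:]


def fit_random_forest(X, y, n_trees=25, seed=0):
    rng = random.Random(seed)
    n, d = len(X), len(X[0])
    n_features = max(1, int(d ** 0.5))  # a common heuristic: about sqrt(d) features
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap sample, with replacement
        features = rng.sample(range(d), n_features)
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], features))
    return forest


def forest_predict(forest, x):
    """Majority vote over the individual stumps' predictions."""
    votes = Counter(left if x[j] <= threshold else right
                    for j, threshold, left, right in forest)
    return votes.most_common(1)[0][0]
```

Because each stump sees a different bootstrap sample and feature subset, the individual models make partly independent errors, which the vote then averages out.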

Gradient Boosted Decision Trees (GBDT)

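GBDT is an ensemble algorithm that combines individual decision trees through boosting. Boosting trains weak learners (here, shallow decision trees) sequentially, with each new tree fitted to correct the errors of the ensemble built so far. In gradient boosting, each tree is fitted to the negative gradient of the loss function, which for squared error is simply the residuals.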
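
A minimal sketch of gradient boosting for regression with squared-error loss, assuming a single numeric feature and decision stumps as the weak learners. Each round fits a stump to the current residuals and adds a shrunken copy of its prediction to the ensemble. The function names, `n_rounds`, and `learning_rate` are illustrative assumptions. The feature needs at least two distinct values.

```python
def fit_regression_stump(xs, residuals):
    """Best 'x <= threshold' split of a 1-D feature by squared error;
    each side predicts the mean residual of its points."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # the largest value would leave the right side empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]


def fit_gbdt(xs, ys, n_rounds=50, learning_rate=0.3):
    """Start from the mean target, then repeatedly fit a stump to the residuals
    (the negative gradient of squared error) and add its scaled output."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        threshold, left_value, right_value = fit_regression_stump(xs, residuals)
        stumps.append((threshold, left_value, right_value))
        preds = [p + learning_rate * (left_value if x <= threshold else right_value)
                 for x, p in zip(xs, preds)]
    return base, learning_rate, stumps


def gbdt_predict(model, x):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(lv if x <= t else rv for t, lv, rv in stumps)
```

The learning rate (shrinkage) makes each tree correct only part of the remaining error, which usually generalizes better than letting one tree fit the residuals completely.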

K-Means Clustering

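Clustering algorithms group data points based on their similarities or dissimilarities. K-means clustering partitions a dataset into k distinct clusters by alternately assigning each point to its nearest cluster centroid and recomputing each centroid as the mean of its assigned points, until the assignments stop changing.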
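
A minimal sketch of this procedure (often called Lloyd's algorithm), assuming Euclidean distance and initial centroids chosen as k random data points; the function name and defaults are illustrative. Practical implementations typically use smarter initialization such as k-means++ and several restarts.

```python
import math
import random


def k_means(points, k, iterations=100, seed=0):
    """Alternate between assigning points to the nearest centroid and moving
    each centroid to the mean of its assigned points. Points are tuples."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # initialize with k data points
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        new_centroids = [
            tuple(sum(coord) / len(c) for coord in zip(*c)) if c else centroids[i]
            for i, c in enumerate(clusters)  # an empty cluster keeps its old centroid
        ]
        if new_centroids == centroids:
            break  # centroids stopped moving, so assignments are stable
        centroids = new_centroids
    return centroids, clusters
```

On two well-separated groups of four points each, with `k=2`, the centroids settle at the centers of the two groups.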

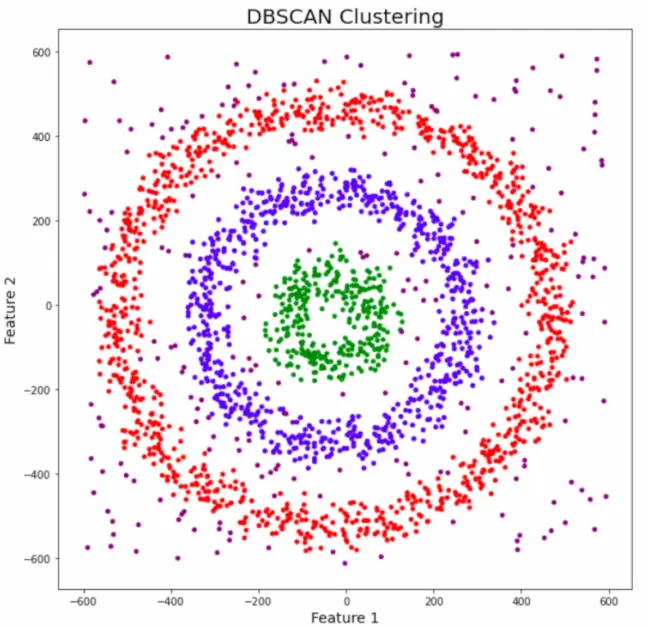

DBSCAN Clustering

DBSCAN, which stands for density-based spatial clustering of applications with noise, groups together points that lie in dense regions. This lets it find clusters of arbitrary, irregular shapes and flag points in low-density regions as outliers (noise), without requiring the number of clusters in advance.
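
A minimal, unoptimized sketch of DBSCAN, assuming Euclidean distance and the usual two parameters: a neighborhood radius `eps` and a minimum neighbor count `min_pts`, with the point itself counted. The function name is hypothetical. Real implementations use spatial indexes instead of comparing every pair of points.

```python
import math


def dbscan(points, eps, min_pts):
    """Return a cluster id (0, 1, ...) for each point, or -1 for noise.
    A core point has at least min_pts points (itself included) within eps;
    clusters grow outward from core points."""
    labels = [None] * len(points)  # None = not yet visited

    def neighbors(i):
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # noise for now; may later become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(seeds)
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # former noise point becomes a border point
            if labels[j] is not None:
                continue  # already assigned (border points are not expanded)
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_pts:  # only core points expand the cluster
                queue.extend(j_neighbors)
    return labels
```

For two tight groups of points plus one isolated point, `dbscan(points, eps=1.5, min_pts=3)` labels the groups 0 and 1 and the isolated point -1.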

Principal Component Analysis (PCA)

PCA is a dimensionality reduction algorithm that derives new features (principal components) from existing ones while retaining as much of the data's variance as possible. It is widely used as a preprocessing step for supervised learning algorithms.
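
A minimal sketch of finding the first principal component: center the data, build the covariance matrix, and use power iteration to find its top eigenvector, which is the direction of maximum variance. Projecting onto that direction gives a single new feature. The function names are hypothetical. Libraries usually compute all components at once with a singular value decomposition instead.

```python
import math


def first_principal_component(points, iterations=200):
    """Top eigenvector of the sample covariance matrix, found by power iteration."""
    n, d = len(points), len(points[0])
    means = [sum(col) / n for col in zip(*points)]
    centered = [[v - m for v, m in zip(p, means)] for p in points]
    cov = [[sum(row[a] * row[b] for row in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    v = [1.0] * d  # starting vector, assumed not orthogonal to the top eigenvector
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return means, v


def project(points, means, component):
    """New 1-D feature: each centered point's coordinate along the component."""
    return [sum((x - m) * c for x, m, c in zip(p, means, component)) for p in points]
```

For points lying exactly on the line y = 2x, the component comes out as (1, 2)/sqrt(5), and the projection keeps all of the variance in a single feature.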

These are just a few examples of machine learning algorithms. There are many other algorithms available, each with its own unique characteristics and use cases.

Frequently Asked Questions (FAQs)

What is the concept of overfitting in machine learning algorithms?

Overfitting occurs when a model learns the training data too well but fails to generalize to new, unseen data. It typically happens when the model is overly complex or when the training data is limited.

Can machine learning algorithms handle missing or incomplete data?

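Yes, although many algorithms cannot work with missing values directly, so the data usually has to be prepared first. Techniques like imputation, where missing values are estimated based on other variables, can be used to fill in the gaps. Some algorithms, such as certain decision tree implementations, can also handle missing values natively.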
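
The simplest form of imputation fills each gap with the mean of the observed values in the same column. This is a more basic approach than estimating the value from other variables. A minimal sketch, assuming missing values are represented as `None` and every column has at least one observed value (the function name is hypothetical):

```python
def mean_impute(rows):
    """Replace None in each column with the mean of that column's observed values."""
    means = []
    for col in zip(*rows):
        observed = [v for v in col if v is not None]
        means.append(sum(observed) / len(observed))
    return [[means[j] if v is None else v for j, v in enumerate(row)] for row in rows]
```

Mean imputation ignores relationships between variables and shrinks the column's variance. Model-based imputation, which predicts a missing value from the other columns, is often preferred when those relationships matter.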

What are some evaluation metrics used to assess the performance of machine learning algorithms?

Common evaluation metrics for classification include accuracy, precision, recall, F1 score, and area under the receiver operating characteristic curve (AUC-ROC). These metrics help measure the model's effectiveness in making correct predictions.
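
A minimal sketch of how these metrics are computed for a binary classifier, assuming labels 1 (positive) and 0 (negative); the function names are hypothetical. AUC-ROC is computed here through its equivalent interpretation: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counting as half.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 score for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of predicted positives that are real
    recall = tp / (tp + fn) if tp + fn else 0.0  # share of real positives that were found
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

For example, with `y_true = [1, 1, 1, 0, 0, 0, 0, 1]` and `y_pred = [1, 1, 0, 0, 1, 0, 0, 1]`, there are 3 true positives, 1 false positive, and 1 false negative, so accuracy, precision, recall, and F1 are all 0.75.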

How do ensemble learning algorithms work?

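Ensemble learning combines multiple individual models to make more accurate predictions. Common techniques include bagging (bootstrap aggregating), which trains models independently on random resamples of the data and combines their predictions, and boosting, which trains models sequentially so that each one corrects the errors of the previous ones, for example by reweighting misclassified instances (as in AdaBoost) or by fitting the residuals (as in gradient boosting).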

Are machine learning algorithms susceptible to bias or discrimination?

Machine learning algorithms can be susceptible to bias and discrimination if the training data is biased or contains discriminatory patterns. Careful data preprocessing and algorithmic fairness measures can help mitigate these issues.