What is Supervised Learning?

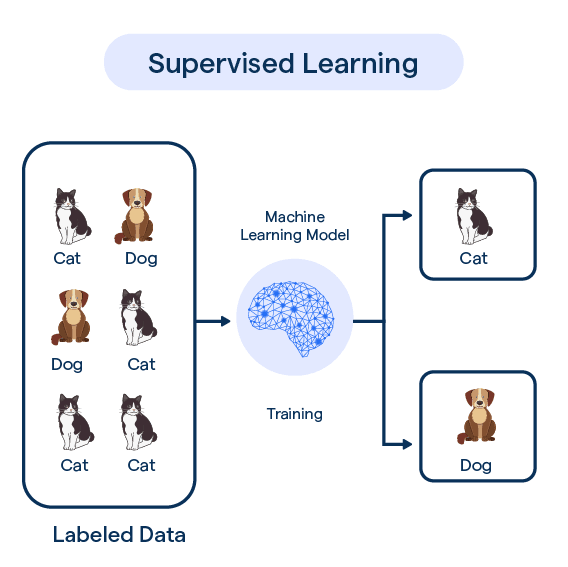

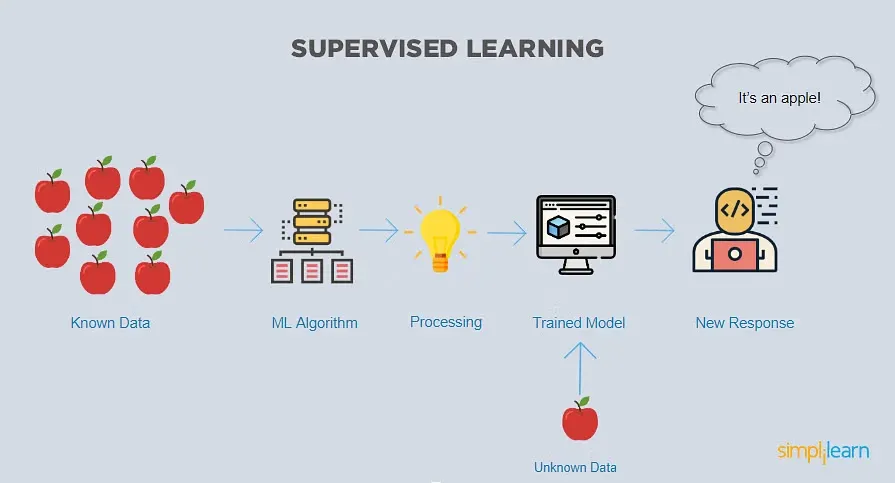

Supervised learning is a machine learning technique in which models are trained on labeled data. Let's break it down with an example.

Say we want to train a model to recognize images of cats and dogs. We first compile a labeled dataset: thousands of cat and dog images, each tagged with the correct label. We then feed this dataset to the model during training.

By examining the labeled examples, the model learns to recognize patterns and features associated with each class, such as fluffy fur and pointed ears for cats. Over many training iterations, the model adjusts itself to reduce its mistakes, so it becomes better at classifying new images it has never seen.

This is the essence of supervised learning: supplying the model with correctly labeled training data so it can learn the mapping from inputs to target outputs.
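To make the idea concrete, here is a minimal sketch of learning from labeled pairs. It assumes each image has already been reduced to two hypothetical numeric features (`ear_pointiness`, `fur_fluffiness`) and uses a simple perceptron as the learner. Real image classifiers learn features directly from pixels. This sketch only illustrates the basic loop: predict, compare with the label, adjust.

```python
# Each training example pairs input features with a known label.
# Features are hypothetical: (ear_pointiness, fur_fluffiness), both in [0, 1].
# Label 1 means "cat", 0 means "dog".
training_data = [
    ((0.9, 0.8), 1),
    ((0.8, 0.9), 1),
    ((0.7, 0.7), 1),
    ((0.2, 0.3), 0),
    ((0.3, 0.1), 0),
    ((0.1, 0.4), 0),
]


def predict(weights, bias, features):
    """Return 1 (cat) if the weighted sum is positive, else 0 (dog)."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train_perceptron(data, epochs=20, learning_rate=0.1):
    """Perceptron rule: nudge the weights whenever a prediction disagrees with its label."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):  # each pass over the data is one training iteration
        for features, label in data:
            error = label - predict(weights, bias, features)  # 0 if correct, +1 or -1 if wrong
            weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


weights, bias = train_perceptron(training_data)
print(predict(weights, bias, (0.85, 0.75)))  # a new, unlabeled example -> 1 (cat)
```

The labels drive every weight update. Without them, the learner would have no signal telling it which predictions were wrong.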

Supervised learning is used for both classification and regression tasks. It can produce highly accurate models, but it usually requires a large labeled training dataset. The key is providing enough labeled examples for the model to learn the complex relationships in the data.

Why Supervised Learning?

In this section, we'll delve into the reasons for the widespread use of Supervised Learning in machine learning, examining its benefits and key use cases.

Predictive Power

Supervised Learning models have the advantage of high predictive power. By learning from labeled datasets, these models can make accurate predictions or classifications on unseen data, making them vital in various domains like finance, healthcare, and marketing.

Interpretability

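Many supervised models, such as linear regression and decision trees, learn an explicit mapping from inputs to outputs that can be inspected directly. This makes their decisions relatively easy to interpret. More complex models, such as deep neural networks, are much harder to interpret.

This transparency is important in sectors such as healthcare and finance, where understanding how a decision was made is crucial.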

Extensive Algorithm Availability

Supervised Learning offers a wide range of algorithms, from simple linear regression to complex neural networks, that can be applied to many kinds of problems.

This variety allows for versatile problem-solving capabilities.

Learning and Improvement Over Time

One of the remarkable features of Supervised Learning is that models can improve over time.

As more labeled data becomes available, the model can be retrained (or, with online learning algorithms, updated incrementally) to refine its predictions and improve its performance.

Practical and Broad Applicability

Supervised Learning finds usage in concrete, real-world applications where the relationship between input and output is critical.

These include, but are not limited to, email filtering, customer segmentation, credit scoring, and medical diagnostics.

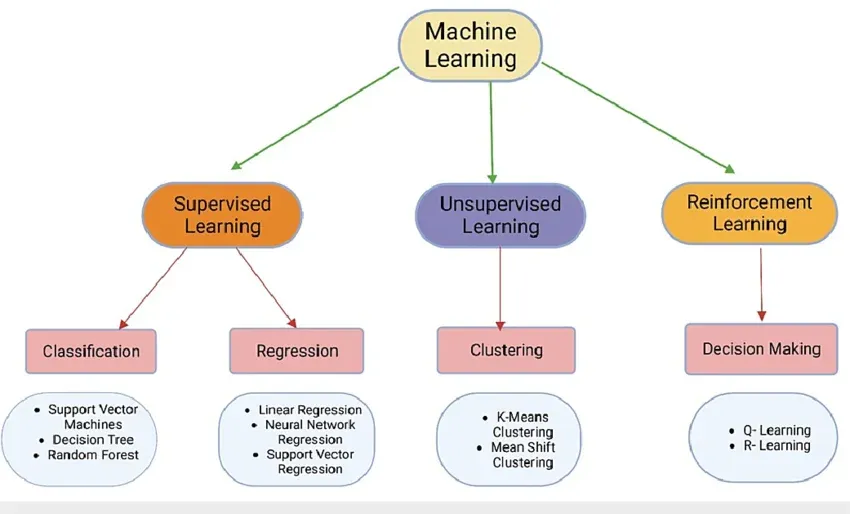

Types of Supervised Learning

In this section, we'll delve into different types of supervised learning, a pivotal part of machine learning where models learn from labeled training data.

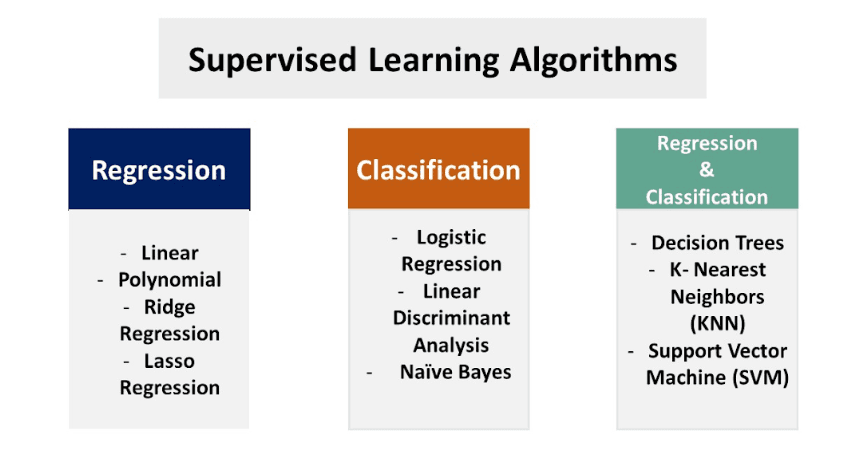

Regression

In regression, the model is trained to predict continuous values, such as temperature or price.

This technique applies when the output variable is a real, continuous value.
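For a single input feature, the simplest regression model is a straight line fitted by ordinary least squares. Here is a minimal sketch of that fit. The house sizes and prices are made up purely for illustration.

```python
def fit_simple_linear_regression(xs, ys):
    """Ordinary least squares for y ~ slope * x + intercept (one input feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: house size in square metres -> price in thousands
sizes = [50, 70, 90, 110]
prices = [150, 210, 270, 330]

slope, intercept = fit_simple_linear_regression(sizes, prices)
print(slope * 100 + intercept)  # predicted price for an unseen 100 square-metre house -> 300.0
```

The output is a number on a continuous scale, not a category. This is what distinguishes regression from classification.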

Classification

Classification, the other main type of supervised learning, is used to predict discrete values (classes). The model learns patterns from labeled data and assigns each input to one of two or more classes.

Support Vector Machines

Support Vector Machines (SVMs) are a powerful supervised learning method often used for classification and regression tasks.

For classification, an SVM finds the hyperplane that separates the classes with the widest possible margin.

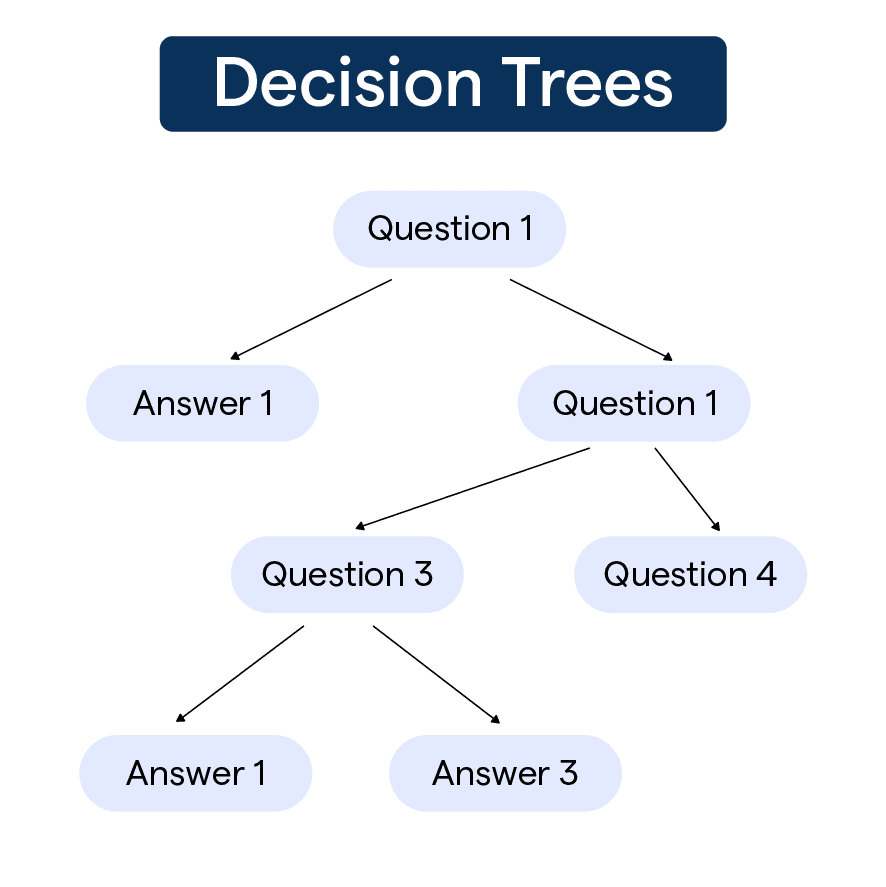

Decision Trees

Decision Trees are flowchart-like structures. Each internal node denotes a test on an attribute, each branch represents an outcome of the test, and each leaf node holds a class label (or, for regression, a numeric value).

They're commonly used for both classification and regression tasks.
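The test at each node is usually chosen to make the resulting groups as pure as possible. Below is a minimal sketch of that step for classification on a single numeric feature. It assumes Gini impurity as the purity measure, which is one common choice; entropy is another. The animal weights are hypothetical.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0 for a pure node, larger when classes are mixed."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_threshold(values, labels):
    """Try each candidate threshold and return the one with the lowest weighted Gini impurity."""
    best = (None, float("inf"))
    for threshold in sorted(set(values)):
        left = [lab for v, lab in zip(values, labels) if v <= threshold]
        right = [lab for v, lab in zip(values, labels) if v > threshold]
        if not left or not right:
            continue  # a useful split must send samples both ways
        weighted = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if weighted < best[1]:
            best = (threshold, weighted)
    return best


# Hypothetical feature: weight in kg; labels "cat" or "dog"
weights_kg = [3, 4, 5, 20, 25, 30]
animals = ["cat", "cat", "cat", "dog", "dog", "dog"]
print(best_threshold(weights_kg, animals))  # -> (5, 0.0): "weight <= 5" separates them perfectly
```

A full tree repeats this search recursively on each side of the split until the leaves are pure or a depth limit is reached.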

Random Forests

Random Forests are an ensemble learning method that operates by constructing multiple decision trees during training and outputting the class that is the mode of the classes (classification) or mean prediction (regression) of the individual trees.
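The two ingredients described above can be sketched as follows: training each tree on a different random sample, then combining the trees' outputs. The per-tree predictions here are made-up values standing in for real trained trees. A full random forest also considers only a random subset of features at each split, which this sketch omits.

```python
import random
from collections import Counter
from statistics import mean


def bootstrap_sample(data, rng):
    """Draw len(data) items with replacement, so each tree sees a slightly different dataset."""
    return [rng.choice(data) for _ in data]


def aggregate_classification(tree_predictions):
    """Majority vote: the mode of the individual trees' class predictions."""
    return Counter(tree_predictions).most_common(1)[0][0]


def aggregate_regression(tree_predictions):
    """Average of the individual trees' numeric predictions."""
    return mean(tree_predictions)


rng = random.Random(0)  # fixed seed so the sample is reproducible
print(bootstrap_sample([1, 2, 3, 4, 5], rng))

# Hypothetical outputs of five trees for one input
print(aggregate_classification(["cat", "dog", "cat", "cat", "dog"]))  # -> cat
print(aggregate_regression([210.0, 190.0, 205.0, 195.0, 200.0]))     # -> 200.0
```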

By understanding these task types and algorithms, artificial intelligence practitioners can select the model best suited to their specific problem, which helps them achieve more accurate and reliable results.

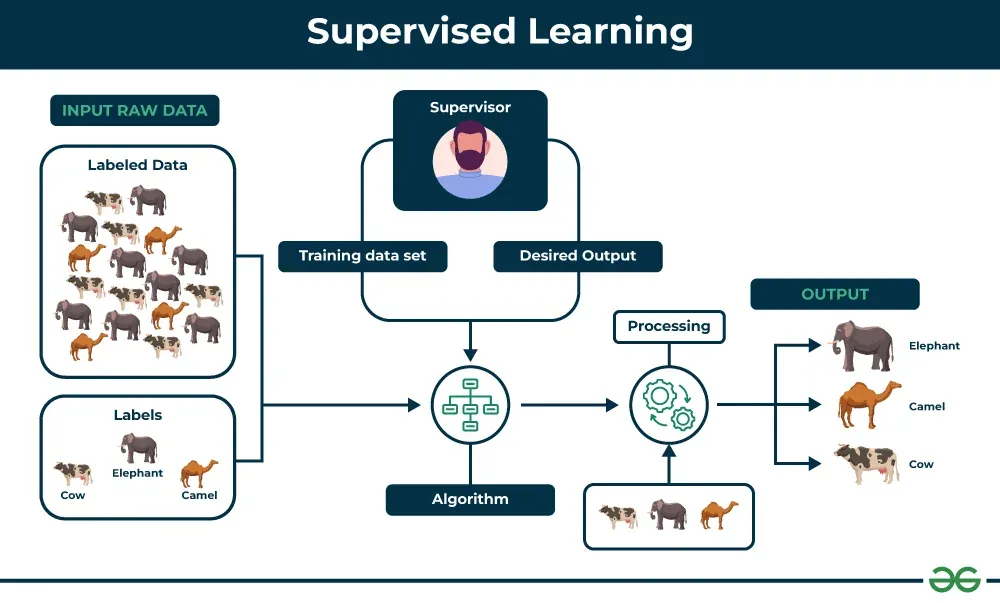

How does Supervised Learning Work?

In this section, we'll explore the inner workings of Supervised Learning, its various components, and how it contributes to effective machine learning models.

Training Data and Labels

Supervised Learning relies on labeled training data – input data with corresponding known output values or labels – to teach machine learning algorithms how to generalize patterns from the data.

Selecting an Algorithm

Choosing an appropriate machine learning algorithm is crucial for effective Supervised Learning.

Depending on the complexity of the problem and the desired outcome, various algorithms, such as Linear Regression, Decision Trees, or Support Vector Machines, can be utilized.

Model Training

Training consists of feeding the labeled data to the selected algorithm so that it can adjust its internal parameters.

These parameters are optimized, typically by minimizing a loss function that measures prediction errors. In this way, the model learns the relationships between input features and output labels.
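For many models, adjusting the internal parameters means gradient descent on a loss function. Here is a minimal sketch for a one-feature linear model y = w * x + b, trained with mean squared error. The learning rate and epoch count are illustrative, not tuned.

```python
def train_linear_model(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y ~ w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors with the current parameters
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b
        grad_w = (2 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2 / n) * sum(errors)
        # Step against the gradient to reduce the loss
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy labeled data generated from y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train_linear_model(xs, ys)
print(round(w, 3), round(b, 3))  # -> 2.0 1.0
```

If the learning rate is too large, the updates overshoot and diverge. If it is too small, training takes many more epochs.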

Evaluating Performance

To ensure the Supervised Learning model is effective, it is crucial to evaluate its performance on data it has not seen during training.

Splitting the labeled dataset into separate training and testing sets allows for effective assessment before deploying the model.
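Holding out part of the labeled data can be sketched as a seeded shuffle followed by a slice. Below is a minimal hand-rolled helper assuming an 80/20 split; `split_dataset` is a hypothetical name. In practice, a library routine is often used instead, and classification projects often use a stratified split that preserves class proportions.

```python
import random


def split_dataset(examples, test_fraction=0.2, seed=42):
    """Shuffle labeled examples and hold out a fraction of them for testing."""
    shuffled = list(examples)              # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]  # (train, test)


# Hypothetical labeled dataset of (features, label) pairs
dataset = [((i, i * 2), i % 2) for i in range(10)]
train, test = split_dataset(dataset)
print(len(train), len(test))  # -> 8 2
```

The test set must stay untouched during training. Otherwise, the evaluation no longer measures performance on unseen data.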

Model Deployment and Prediction

After training and evaluation, the Supervised Learning model can be deployed for use in real-world applications.

Once integrated, the model uses what it has learned to make predictions or classifications on new input data. It can be retrained periodically as more labeled data becomes available, so its performance can keep improving over time.

Supervised Learning Algorithms

In this section, we'll explore the variety of Supervised Learning Algorithms and their unique properties.

Logistic Regression

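Logistic Regression estimates the probability that an input belongs to a particular class, for example, that an event occurs. It does this by passing a weighted sum of the input features through the logistic (sigmoid) function. It is widely used for binary classification problems where the outcome is either 'Yes' or 'No'.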
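Here is a minimal sketch of logistic regression with one feature, trained by gradient descent on the average log-loss. The study-hours data is hypothetical, and the learning rate and epoch count are illustrative.

```python
import math


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(xs, ys, learning_rate=0.5, epochs=1000):
    """Fit P(y = 1 | x) = sigmoid(w * x + b) by gradient descent on the average log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        probs = [sigmoid(w * x + b) for x in xs]
        # Gradient of the average log-loss: (predicted probability - label), weighted by the input
        grad_w = sum((p - y) * x for p, y, x in zip(probs, ys, xs)) / n
        grad_b = sum(p - y for p, y in zip(probs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical data: hours studied -> passed the exam (1 = "Yes", 0 = "No")
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 5.0]
passed = [0, 0, 0, 1, 0, 1, 1, 1]
w, b = train_logistic_regression(hours, passed)
p = sigmoid(w * 4.5 + b)
print("Yes" if p >= 0.5 else "No", round(p, 2))
```

The model outputs a probability. The 0.5 threshold that turns it into a 'Yes' or 'No' answer is a separate choice and can be adjusted to the application.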

Decision Tree

A Decision Tree algorithm represents decisions and their possible outcomes as a tree-shaped model. This makes it well suited to classification and regression problems that can be solved through a series of structured, sequential decisions.

Support Vector Machine (SVM)

SVM is a robust algorithm, used mainly for classification, that separates data points into classes.

It constructs a hyperplane or set of hyperplanes in a high-dimensional space, chosen to maximize the margin to the nearest training points. These hyperplanes can be used for classification, regression, or other tasks.
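Here is a minimal sketch of a linear SVM for two classes. It assumes labels in {-1, +1} and training by stochastic subgradient descent on the regularized hinge loss, with a hand-picked learning rate and regularization strength. Dedicated SVM libraries use different solvers and also support kernels for non-linear boundaries, which this sketch omits. The decision boundary is the hyperplane w.x + b = 0.

```python
def train_linear_svm(points, labels, lam=0.01, learning_rate=0.01, epochs=1000):
    """Stochastic subgradient descent on lam/2 * ||w||^2 + hinge loss max(0, 1 - y * (w.x + b))."""
    w = [0.0] * len(points[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(points, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            if margin < 1:
                # Inside the margin or misclassified: the hinge term pushes the hyperplane away
                w = [wi - learning_rate * (lam * wi - y * xi) for wi, xi in zip(w, x)]
                b += learning_rate * y
            else:
                # Correct with room to spare: only the regularizer acts, shrinking w
                w = [wi - learning_rate * lam * wi for wi in w]
    return w, b


def predict(w, b, x):
    """Return which side of the hyperplane w.x + b = 0 the point falls on."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1


# Hypothetical, linearly separable 2-D data
points = [(2, 2), (3, 3), (3, 2), (-2, -2), (-3, -3), (-3, -2)]
labels = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(points, labels)
print(predict(w, b, (4, 4)), predict(w, b, (-4, -1)))  # -> 1 -1
```

Shrinking w while keeping every training margin at least 1 is what widens the gap between the classes. The geometric margin is 1 / ||w||.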

Naive Bayes

Naive Bayes classifiers apply Bayes' theorem with the "naive" assumption that every pair of features is independent given the class. They are often used in text classification because they scale well to an extremely large number of features.
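For text, a common variant is multinomial Naive Bayes over word counts. Here is a minimal sketch assuming whitespace tokenization and add-one (Laplace) smoothing. The four-document training set is hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(documents, labels):
    """Count word frequencies per class (multinomial Naive Bayes)."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)  # class -> Counter of words
    vocabulary = set()
    for doc, label in zip(documents, labels):
        words = doc.lower().split()
        word_counts[label].update(words)
        vocabulary.update(words)
    return class_counts, word_counts, vocabulary


def classify(doc, class_counts, word_counts, vocabulary):
    """Pick the class with the highest log P(class) + sum of log P(word | class)."""
    total_docs = sum(class_counts.values())
    best_label, best_score = None, float("-inf")
    for label, count in class_counts.items():
        score = math.log(count / total_docs)  # log prior
        total_words = sum(word_counts[label].values())
        for word in doc.lower().split():
            # Treating each word independently given the class is the "naive" assumption;
            # add-one smoothing keeps unseen words from zeroing out the probability
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical tiny training set
docs = ["win money now", "cheap money offer", "meeting at noon", "lunch meeting tomorrow"]
labels = ["spam", "spam", "ham", "ham"]
model = train_naive_bayes(docs, labels)
print(classify("win cheap money", *model))  # -> spam
```

Summing log-probabilities instead of multiplying raw probabilities avoids numerical underflow when documents contain many words.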

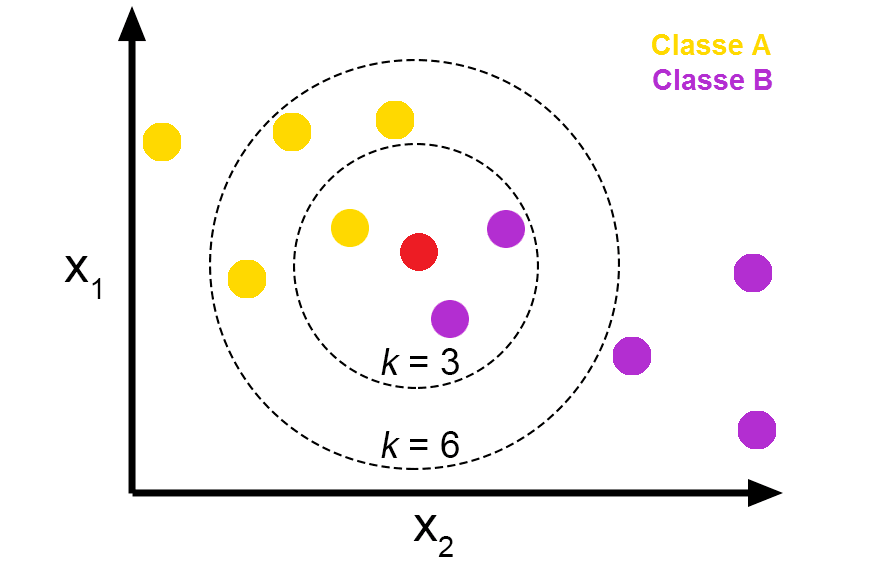

K-Nearest Neighbors (KNN)

KNN operates on the principle of proximity: data points that are close to each other in feature space are considered similar. To classify a new case, KNN finds the k labeled cases closest to it and assigns the class that is most common among them.
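Here is a minimal sketch of KNN classification, assuming Euclidean distance and a simple majority vote. The 2-D points and their labels are hypothetical.

```python
import math
from collections import Counter


def knn_classify(training_data, query, k=3):
    """Label a query point by majority vote among its k nearest labeled neighbors."""
    # Sort labeled points by Euclidean distance to the query (math.dist needs Python 3.8+)
    neighbors = sorted(training_data, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical 2-D points with labels
training_data = [
    ((1.0, 1.0), "A"), ((1.5, 2.0), "A"), ((2.0, 1.5), "A"),
    ((6.0, 6.0), "B"), ((6.5, 7.0), "B"), ((7.0, 6.5), "B"),
]
print(knn_classify(training_data, (2.0, 2.0)))  # -> A
print(knn_classify(training_data, (6.0, 7.0)))  # -> B
```

There is no training step: the model is the stored data itself, so all the work happens at prediction time. An odd k avoids ties when there are two classes.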

All these algorithms have their unique strengths and are useful in solving various kinds of supervised learning problems.

Evaluation Techniques in Supervised Learning

To assess the performance of supervised learning models, various evaluation techniques are used, including:

- Accuracy: Accuracy measures the proportion of correct predictions made by the model out of the total predictions made.

- Precision and Recall: Precision measures the proportion of correctly predicted positive instances out of the total predicted positive instances, while recall measures the proportion of correctly predicted positive instances out of the total actual positive instances.

- F1-score: The F1-score is the harmonic mean of precision and recall. It provides a balanced measure of model performance.

- Confusion Matrix: A confusion matrix is a table that summarizes the performance of a classification model. For a binary classifier, it shows the number of true positives, true negatives, false positives, and false negatives.
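All of these metrics can be computed from the four confusion-matrix counts. Here is a minimal sketch for a binary classifier, assuming the positive class is labeled 1. The labels and predictions are hypothetical.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Confusion-matrix counts and the metrics derived from them, for a binary classifier."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    tn = sum(t != positive and p != positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0  # guard against division by zero
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "tn": tn, "fp": fp, "fn": fn,
            "accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels (1 = positive) and model predictions
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
print(binary_metrics(y_true, y_pred))
# tp=3, tn=5, fp=1, fn=1 -> accuracy 0.8, precision 0.75, recall 0.75, f1 0.75
```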

Supervised Learning Applications

In this section, we will discuss several practical applications where supervised learning methods have demonstrated their potency in problem-solving and decision-making.

- Spam Detection: Supervised learning algorithms such as Naive Bayes are widely used in email filtering systems to detect and block spam messages, based on patterns learned from labeled examples.

- Credit Scoring: Credit scoring is another domain where supervised learning excels. It helps identify potential loan defaulters by analyzing user data and previous loan information.

- Medical Diagnosis: In the healthcare domain, supervised learning aids in diagnosing diseases by analyzing patient records and learning patterns associated with various medical conditions.

- Customer Segmentation: When segment labels are already defined, supervised learning can assign customers to those segments. This helps businesses understand customer behavior and preferences and tailor personalized strategies.

- Fraud Detection: Financial institutions often leverage supervised learning to detect fraudulent transactions. By analyzing patterns in historical transaction data, the model can flag any suspicious activities.

Supervised learning, with its ability to infer from known examples, plays a vital role in numerous diverse application areas.

Challenges in Supervised Learning

In this section, we'll delve into several challenges that arise when working with supervised learning methods.

Insufficient Training Data

Lack of quality training data with appropriate labeled examples can lead to suboptimal model performance.

Overfitting and Underfitting

Overfitting occurs when a model learns the training data too closely, including its noise, which hurts its ability to generalize to new data. Conversely, underfitting occurs when the model is too simple to capture the underlying patterns, resulting in poor predictions even on the training data.

Class Imbalance

When one class is heavily underrepresented in the dataset, algorithms may become biased toward the majority class, making it difficult to classify the minority class accurately.
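Here is a quick illustration of why accuracy can be misleading under class imbalance. It also shows one common mitigation: weighting each class inversely to its frequency. The formula n_samples / (n_classes * class_count) matches the heuristic that scikit-learn documents for `class_weight="balanced"`. The transaction data is hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count), so rare classes count more."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical imbalanced dataset: 95 legitimate transactions, 5 fraudulent
labels = ["legit"] * 95 + ["fraud"] * 5

# A "model" that always predicts the majority class looks accurate but never catches fraud
always_legit = ["legit"] * len(labels)
accuracy = sum(p == t for p, t in zip(always_legit, labels)) / len(labels)
print(accuracy)  # -> 0.95

print(balanced_class_weights(labels))
# legit: 100 / (2 * 95), about 0.526; fraud: 100 / (2 * 5) = 10.0
```

These weights are typically used to scale each example's contribution to the training loss, so that mistakes on the rare class cost more.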

Noisy Data and Outliers

Issues like data inconsistencies, incorrect labels, or outliers can significantly degrade model performance. They may call for preprocessing steps such as data cleaning, label correction, or outlier detection.
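One simple outlier-detection step is the interquartile-range (IQR) rule, also known as Tukey's fences. Here is a minimal sketch using the conventional multiplier k = 1.5. The sensor readings are hypothetical.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartiles (Python 3.8+)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical sensor readings with one obvious data-entry error
readings = [10.2, 9.8, 10.1, 10.0, 9.9, 10.3, 98.0, 10.1]
print(iqr_outliers(readings))  # -> [98.0]
```

Flagged points should be inspected before they are removed, since some outliers are genuine and informative rather than errors.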

Computational Complexity

Certain supervised learning algorithms require substantial computational resources, which could slow down or constrain the model's training and implementation, especially in large-scale real-world applications.

Frequently Asked Questions (FAQs)

What is Supervised Learning?

Supervised learning is a type of machine learning where an algorithm learns from labeled data to make predictions or classify new, unseen data.

What are the types of Supervised Learning?

The two main types of supervised learning are classification, which involves predicting discrete outputs, and regression, which deals with predicting continuous outputs.

How does Supervised Learning work?

Supervised learning involves collecting and preprocessing data, selecting a model, training the model, and evaluating the model's performance with a separate set of test data.

What are some popular Supervised Learning Algorithms?

Some popular algorithms for supervised learning include linear regression, logistic regression, decision trees, and random forests.

What are the ethical considerations in Supervised Learning?

Bias and fairness, privacy concerns, and transparency and interpretability are important ethical considerations to keep in mind when working with supervised learning.