What is a Neural Network?

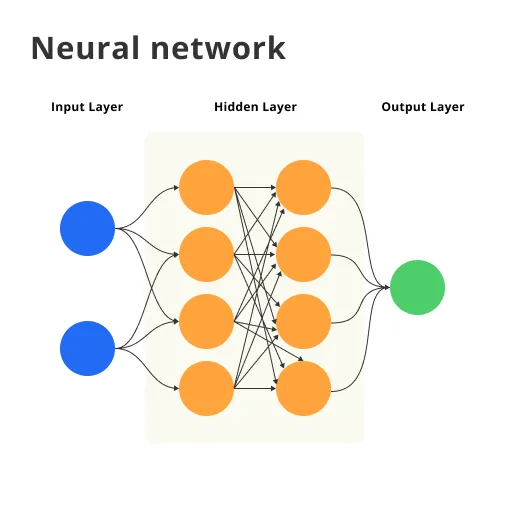

A neural network is a computing system inspired by the structure and functioning of the human brain. It consists of interconnected layers of artificial neurons, which process and transmit information through weighted connections. Neural networks are designed to learn patterns and relationships in data, making them particularly effective for tasks like image recognition, natural language processing, and speech recognition.
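
To make "weighted connections" concrete, here is a minimal sketch of how a single artificial neuron, and a layer built from several of them, might compute an output. The function names, the sigmoid activation, and all numeric values are assumptions chosen for illustration and do not come from any particular library.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

def layer_output(inputs, weight_rows, biases):
    """A layer is several neurons that all read the same inputs."""
    return [neuron_output(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Hypothetical weights and inputs, chosen only for illustration.
hidden = layer_output([0.5, -1.0], [[0.8, 0.2], [-0.4, 0.9]], [0.0, 0.1])
prediction = neuron_output(hidden, [1.5, -2.0], 0.3)
```

Here two hidden neurons feed one output neuron, so information flows from the inputs through the weighted connections to a single prediction between 0 and 1.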

Neural networks are a subset of machine learning. They learn by adjusting the weights of their connections to reduce the errors they make during training. The gradients that guide these adjustments are computed with an algorithm called backpropagation, and an optimizer such as gradient descent applies the updates. As networks become deeper, with more layers and neurons, they can capture increasingly complex patterns; such models form the basis of deep learning, which performs well in many artificial intelligence applications.
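
As a rough illustration of backpropagation, the sketch below uses a tiny two-weight network (one sigmoid hidden unit feeding one linear output) and applies the chain rule from the loss back to each weight. The network shape, the squared-error loss, the learning rate of 0.1, and every name here are assumptions made for the example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward_backward(x, target, w1, w2):
    """Return the loss and its gradients with respect to w1 and w2."""
    # Forward pass: input -> hidden unit -> output -> loss.
    h = sigmoid(w1 * x)
    y = w2 * h
    loss = 0.5 * (y - target) ** 2
    # Backward pass: apply the chain rule from the loss back to each weight.
    dloss_dy = y - target
    grad_w2 = dloss_dy * h
    dloss_dh = dloss_dy * w2
    dh_dz = h * (1.0 - h)          # derivative of the sigmoid
    grad_w1 = dloss_dh * dh_dz * x
    return loss, grad_w1, grad_w2

# One gradient-descent update with hypothetical starting weights.
loss_before, grad_w1, grad_w2 = forward_backward(1.0, 1.0, 0.5, -0.3)
w1_new = 0.5 - 0.1 * grad_w1
w2_new = -0.3 - 0.1 * grad_w2
loss_after, _, _ = forward_backward(1.0, 1.0, w1_new, w2_new)
```

After the update the loss on this single example is lower than before, which is the behavior training repeats over many examples and many steps.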

Why use a Neural Network?

Pattern Recognition

Neural networks excel at recognizing complex patterns in data, making them ideal for tasks like image recognition, speech recognition, and natural language processing.

Adaptability

Neural networks can adapt to new data and learn from it, allowing them to improve their performance over time and handle changing environments or requirements.

Handling Noisy Data

Neural networks can often tolerate noisy or incomplete data, particularly when trained on enough examples, making them suitable for real-world applications where data quality may be imperfect.

Parallel Processing

Neural networks can leverage parallel processing capabilities of modern hardware, such as GPUs, enabling faster computation and more efficient training.

Generalization

Neural networks can generalize from their training data to make predictions on previously unseen data, making them useful for a wide range of applications.

Robustness

Because information is distributed across many weights, neural networks often tolerate the failure of individual neurons or connections, so overall performance tends to degrade gradually rather than fail outright.

Where are Neural Networks used?

Image Recognition and Processing

Neural networks excel in image recognition tasks, such as object detection, facial recognition, and image synthesis, enabling applications like autonomous vehicles and security systems.

Natural Language Processing

Neural networks are widely used in natural language processing (NLP) tasks, including sentiment analysis, machine translation, and chatbot development, enhancing communication and understanding.

Speech Recognition

Neural networks enable speech recognition in applications like voice assistants, transcription services, and call center automation, making voice interactions more efficient and accurate.

Recommender Systems

Neural networks power recommender systems used by e-commerce platforms, streaming services, and social media, providing personalized content and product suggestions based on user preferences.

Medical Diagnosis and Analysis

Neural networks assist in medical diagnosis and analysis by detecting patterns in medical images, genomic data, or electronic health records, improving diagnostic accuracy and patient care.

Financial Market Analysis

Neural networks are employed in financial market analysis for tasks like fraud detection, credit scoring, and algorithmic trading, helping businesses make informed decisions and manage risk.

Who uses Neural Networks?

Tech Companies

Technology companies like Google, Facebook, and Amazon use neural networks for various applications, including search engines, social media algorithms, and recommendation systems.

Healthcare Industry

Hospitals, researchers, and medical device manufacturers employ neural networks for tasks such as medical imaging, diagnostics, and personalized treatment planning.

Financial Institutions

Banks, investment firms, and insurance companies use neural networks for fraud detection, risk assessment, portfolio management, and financial forecasting.

Retail and E-commerce

Retailers and e-commerce platforms leverage neural networks for supply chain optimization, customer segmentation, targeted marketing, and sales forecasting.

Automotive Industry

Automakers and autonomous vehicle developers utilize neural networks for tasks like object detection, path planning, and decision-making in self-driving cars.

Researchers and Academia

Academic researchers and institutions use neural networks to advance knowledge in fields like artificial intelligence, linguistics, neuroscience, and psychology.

How to implement a Neural Network?

Choose the Network Architecture

Select an appropriate neural network architecture for your problem, such as feedforward, recurrent, or convolutional networks. The choice depends on the type of input data, the complexity of the problem, and the desired output.

Prepare the Data

Gather and preprocess your data to ensure it is in a suitable format for the neural network. This may involve normalizing, scaling, or augmenting the data to improve the network's performance and generalization capabilities.
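
Two common ways to scale a numeric feature are sketched below: min-max scaling and standardization. The function names are hypothetical, and the sketch assumes a single feature stored in a plain Python list.

```python
from statistics import mean, pstdev

def min_max_scale(values):
    """Rescale values linearly so the smallest maps to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]   # constant feature: nothing to scale
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

scaled = min_max_scale([10, 20, 30])
z_scores = standardize([2, 4, 6])
```

In practice the minimum, maximum, mean, and standard deviation are usually computed on the training data only and then reused for validation and test data, so that no information leaks from the held-out sets.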

Initialize Weights and Biases

Initialize the weights of the neural network with small random values; biases are commonly set to zero. Proper initialization is essential for effective training, as it can affect the speed of convergence and the final performance of the network.
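
The text does not name a specific scheme, so the sketch below assumes one widely used option, Glorot (Xavier) uniform initialization, which draws each weight from a range that depends on the number of inputs and outputs of the layer. The function name, the layer sizes, and the seed are illustrative assumptions.

```python
import math
import random

def glorot_uniform(fan_in, fan_out, rng):
    """Draw a fan_out x fan_in weight matrix from U(-limit, limit)."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_in)]
            for _ in range(fan_out)]

rng = random.Random(0)              # fixed seed so the run is repeatable
weights = glorot_uniform(4, 3, rng) # hypothetical layer: 4 inputs, 3 neurons
biases = [0.0] * 3                  # biases are often started at zero
```

Tying the range to the layer size is meant to keep the scale of the signals roughly stable from one layer to the next at the start of training.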

Define the Loss Function

Choose a loss function that measures the difference between the network's predictions and the actual target values. Common loss functions include mean squared error for regression tasks and cross-entropy for classification problems.
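
The two loss functions mentioned above can be sketched in a few lines. The function names and the small `eps` guard against taking the logarithm of zero are assumptions for this example; the cross-entropy version assumes the network outputs one probability per class and the target is a single class index.

```python
import math

def mean_squared_error(predictions, targets):
    """Average of the squared differences; typical for regression."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def cross_entropy(probabilities, true_class, eps=1e-12):
    """Negative log of the probability assigned to the correct class."""
    return -math.log(max(probabilities[true_class], eps))

mse = mean_squared_error([1.0, 2.0], [1.0, 4.0])
confident_and_right = cross_entropy([0.9, 0.1], 0)
confident_and_wrong = cross_entropy([0.1, 0.9], 0)
```

Cross-entropy penalizes a confident wrong prediction much more heavily than a confident correct one, which is part of why it is a common choice for classification.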

Train the Network

Train the neural network using an optimization algorithm, such as gradient descent or a variant like Adam or RMSprop. The optimizer adjusts the weights and biases to minimize the loss function, iteratively improving the network's performance on the training data.
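
A training loop with plain gradient descent might look like the sketch below. To keep it short it trains a single linear neuron (one weight, one bias) with mean squared error rather than a full network; the data, learning rate, epoch count, and function name are all assumptions for illustration.

```python
def train_linear(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y ~ w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    history = []
    for _ in range(epochs):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        history.append(sum(e * e for e in errors) / n)   # current loss
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2.0 * sum(errors) / n
        # Step against the gradient to reduce the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, history

# Toy data generated from y = 2x + 1.
w, b, history = train_linear([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
```

Optimizers such as Adam or RMSprop follow the same loop structure but adapt the step size for each parameter using running statistics of past gradients.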

Validate and Fine-tune

Evaluate the trained neural network on a separate validation dataset to assess its generalization capabilities. Fine-tune the network's hyperparameters, such as learning rate, batch size, or the number of hidden layers, to improve its performance on the validation data without overfitting to the training data.
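
The sketch below shows one simple way to hold out a validation set and pick the hyperparameter value with the lowest validation loss. Both helper names are hypothetical, and `validation_loss` stands in for whatever function trains a model with a given setting and scores it on the held-out data.

```python
import random

def train_validation_split(samples, validation_fraction=0.2, seed=0):
    """Shuffle the samples and hold out a fraction for validation."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]

def pick_best(candidates, validation_loss):
    """Return the candidate whose validation loss is lowest."""
    return min(candidates, key=validation_loss)

train_set, validation_set = train_validation_split(range(10))

# Placeholder scoring function, assumed only for this demonstration.
best_rate = pick_best([0.001, 0.01, 0.1], lambda rate: abs(rate - 0.01))
```

Because hyperparameters are chosen using the validation set, a third, untouched test set is commonly kept aside for the final performance estimate.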

Neural Network vs Machine Learning

Foundational Concepts

Machine learning is a broader field encompassing various techniques and algorithms, while neural networks are a specific subset of machine learning inspired by the human brain's structure.

Learning Mechanisms

Traditional machine learning algorithms typically rely on feature engineering and statistical methods, whereas neural networks learn hierarchical representations through interconnected layers of neurons.

Model Complexity

Neural networks, especially deep learning models, can be more complex and computationally intensive compared to some traditional machine learning algorithms, like decision trees or linear regression.

Handling Unstructured Data

Neural networks excel at handling unstructured data, such as images, audio, or text, while traditional machine learning algorithms often require structured data in the form of tabular datasets.

Interpretability

Traditional machine learning models like decision trees or logistic regression are generally more interpretable and explainable, while neural networks can be considered "black boxes" due to their complex architectures.

Use Cases

Both neural networks and traditional machine learning algorithms have their strengths and weaknesses, making them suitable for different tasks. For example, neural networks are preferred for image recognition, while traditional machine learning algorithms like random forests are often a better fit for tabular data analysis.

Challenges with Neural Networks

Data Requirements

Neural networks typically require large amounts of labeled data for supervised training, which can be challenging to collect, label, and preprocess. Insufficient or poor-quality data may lead to suboptimal performance or overfitting.

Computational Complexity

Training neural networks, especially deep ones, can be computationally expensive, requiring powerful hardware like GPUs or TPUs. This can make the development process slower and more resource-intensive, particularly for large-scale problems.

Model Interpretability

Neural networks are often considered "black boxes" due to their complex internal structure, making it difficult to understand how they arrive at specific predictions. This lack of interpretability can be a concern in applications where transparency is crucial.

Hyperparameter Tuning

Selecting the right hyperparameters, such as learning rate, network architecture, and activation functions, can be challenging and time-consuming. Poor hyperparameter choices can lead to poor performance or slow convergence during training.

Overfitting

Neural networks are prone to overfitting, especially when trained on small or noisy datasets. Overfitting occurs when the model captures noise in the data, leading to high performance on the training set but poor generalization to new data.
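
One common mitigation, not named in the text above, is early stopping: training is halted once the validation loss stops improving. The sketch below assumes the per-epoch validation losses are already available as a list; the function name and the `patience` parameter are illustrative.

```python
def early_stopping_epoch(validation_losses, patience=3):
    """Return the epoch with the best validation loss seen before stopping.

    Scanning stops once `patience` epochs pass without a new best loss.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            break
    return best_epoch

# Hypothetical losses: they improve, then rise as the model starts to overfit.
losses = [1.0, 0.8, 0.6, 0.65, 0.7, 0.9]
stop_at = early_stopping_epoch(losses)
```

The weights saved at the returned epoch would be the ones kept, since later epochs fit the training data better but generalize worse.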

Local Minima and Vanishing Gradients

During training, neural networks may get stuck in local minima, preventing them from reaching the global minimum of the loss function. Additionally, the vanishing gradient problem, where gradients become too small to update the weights effectively, can hinder learning in deep networks.
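
The vanishing gradient effect can be seen with a little arithmetic. The derivative of the sigmoid is at most 0.25, and backpropagation multiplies one such factor per layer, so the gradient reaching early layers shrinks quickly with depth. The sketch below assumes a chain of sigmoid units with every weight equal to 1 and every pre-activation equal to 0, which is the most favorable case for the sigmoid; the names are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)

def gradient_scale(depth, z=0.0, weight=1.0):
    """Factor by which a gradient is scaled after passing back through
    `depth` sigmoid layers, each with the same weight and pre-activation."""
    scale = 1.0
    for _ in range(depth):
        scale *= weight * sigmoid_derivative(z)
    return scale

one_layer = gradient_scale(1)
ten_layers = gradient_scale(10)
```

With these assumptions the factor is 0.25 for one layer and 0.25 to the power of 10 (under one millionth) for ten layers, which is why activations such as ReLU and techniques such as residual connections are often used in deep networks.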

TL;DR

Neural networks are a powerful type of machine learning algorithm modeled after the structure and function of the human brain. They have several advantages over traditional algorithms, including their ability to handle complex and nonlinear relationships in data and to learn from large datasets. However, they also have limitations, including a tendency to overfit the training data and a need for large amounts of data and computational resources. Despite these limitations, neural networks are expected to have a significant impact on industries and society in the coming years.

Frequently Asked Questions (FAQs)

What are neural networks?

Neural networks are computing systems inspired by the human brain, designed to recognize patterns and make decisions through learning from data.

How do neural networks learn?

Neural networks learn by adjusting their internal parameters, called weights and biases, through a process called training, which involves feeding them labeled data.

What are the types of neural networks?

Common types of neural networks include feedforward, convolutional, and recurrent networks, along with recurrent variants such as long short-term memory (LSTM) networks, each suited for different tasks.

What is a recurrent neural network?

A recurrent neural network (RNN) is a type of artificial neural network designed to process sequential data by retaining information from previous inputs. It's widely used in natural language processing, time series analysis, and other tasks where context and temporal dependencies are important.
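
The idea of "retaining information from previous inputs" can be sketched with a single recurrent unit whose hidden state is fed back in at every step. This is a scalar toy version with assumed names and weights, using the common update h_t = tanh(w_x * x_t + w_h * h_(t-1) + b).

```python
import math

def rnn_forward(inputs, w_x, w_h, b, h0=0.0):
    """Run a one-unit recurrent network over a sequence of numbers.

    Returns the hidden state after each input.
    """
    h = h0
    states = []
    for x in inputs:
        # The new state depends on the current input and the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Hypothetical weights; the same two inputs are presented in different orders.
states_a = rnn_forward([1.0, 0.0], w_x=1.0, w_h=1.0, b=0.0)
states_b = rnn_forward([0.0, 1.0], w_x=1.0, w_h=1.0, b=0.0)
```

In the first sequence the second input is zero, yet the final state is still nonzero because it carries over the earlier input, and the two orderings end in different states, which is what lets a recurrent network use context and order.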

What are the applications of neural networks?

Neural networks have diverse applications, including image recognition, natural language processing, speech recognition, recommendation systems, and game playing.

What are the limitations of neural networks?

Limitations of neural networks include the need for large amounts of training data, high computational requirements, and difficulties in interpreting their decision-making processes.