Introduction

In the rapidly evolving landscape of natural language processing (NLP), large language models (LLMs) have emerged as game-changers. They are pushing the boundaries of what is possible in areas such as text generation, summarization, and question answering.

Among the latest and most powerful LLMs is Falcon, a model developed by the Technology Innovation Institute (TII) in Abu Dhabi. Falcon has garnered significant attention due to its impressive performance and openly released architecture.

As the adoption of LLMs continues to accelerate, with the global natural language processing market projected to reach $49.9 billion by 2030 (Source: Grand View Research, 2023), it becomes crucial to understand how Falcon compares to other prominent language models such as GPT-3 and Megatron-Turing NLG.

These models have already demonstrated their capabilities in various applications, from creative writing to code generation, and have been widely adopted by businesses and developers.

This article will provide an in-depth comparative analysis of Falcon LLM against these other language models, evaluating their strengths, weaknesses, and unique features.

By exploring their performance on benchmarks, architectural differences, and real-world use cases, we aim to provide valuable insights to help readers make informed decisions when choosing the best language model for their specific needs.

Read on for a guide that equips you with the knowledge to navigate the ever-evolving landscape of natural language processing technologies.

Understanding LLMs

Language models are a class of machine learning models designed to analyze and process natural language in a way that resembles human understanding.

More specifically, they are trained on vast amounts of text data to identify patterns and relationships between different words and phrases.

LLMs, or large language models, are an advanced form of language model. They employ deep neural networks and train on even larger and more complex data sets.

The key feature of LLMs is their ability to generate coherent and grammatically correct text, given a set of instructions. This comes from their ability to recognize and predict patterns in language, which results in natural-sounding text that mimics the way people write and speak.

As such, LLMs find application in a variety of scenarios, from translation and summarization, to text completion and even dialogue generation.

How LLMs are Trained and What They Can Do

LLMs are trained on vast quantities of text data, usually sourced from the internet. This data is then used to train the neural networks that underpin the models.

During training, the algorithm "learns" to predict the likelihood of a given word or phrase appearing in the context of a particular sentence or document.
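To make the idea of "predicting the likelihood of a word in context" concrete, here is a minimal sketch that uses simple word-pair counts instead of a neural network. It is only an illustration of the principle, not how Falcon or any real LLM is implemented; the toy corpus and the function names are invented for this example.

```python
from collections import Counter, defaultdict

def train_bigram_model(corpus):
    """Count how often each word follows each other word in the corpus."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts

def next_word_probabilities(follow_counts, word):
    """Convert the raw follow-up counts for `word` into probabilities."""
    counts = follow_counts.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the word was never seen with a successor
    return {candidate: count / total for candidate, count in counts.items()}

# Toy corpus, invented purely for illustration.
corpus = [
    "the model predicts the next word",
    "the model learns patterns",
    "the next word depends on context",
]

model = train_bigram_model(corpus)
probs = next_word_probabilities(model, "the")
print(probs)
```

A real LLM replaces the counting step with a neural network that looks at far more than one preceding word, but the output has the same shape: a probability for each candidate next word.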

The neural networks behind LLMs are particularly well suited to sequential data. They process text as a sequence of words or word pieces, which lets them use the preceding context when predicting what comes next.

Once the model is trained, it can perform a wide variety of tasks such as text generation, translation, and text summarization.

In text generation, the model receives a prompt or starting phrase and then generates a full-text output, using the patterns learned during training. This text can be used in a wide range of applications, from chatbots to virtual assistants.
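The generation loop described above can be sketched in a few lines. This is a simplified illustration, assuming a hand-written table of next-word probabilities (`next_word_table`, hypothetical) in place of a trained model, and always picking the single most likely word at each step.

```python
def generate(prompt, next_word_table, max_new_words=5):
    """Greedy generation: repeatedly append the most likely next word."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = next_word_table.get(words[-1])
        if not candidates:
            break  # no known continuation for the last word
        words.append(max(candidates, key=candidates.get))
    return " ".join(words)

# Hypothetical probabilities standing in for what a trained model would supply.
next_word_table = {
    "chatbots": {"answer": 0.7, "ask": 0.3},
    "answer": {"customer": 0.6, "simple": 0.4},
    "customer": {"questions": 0.9, "emails": 0.1},
}

text = generate("Chatbots", next_word_table)
print(text)
```

Production systems usually sample from the probabilities rather than always taking the top choice, which makes the output less repetitive.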

Real-World Applications of LLMs

One popular application of LLMs is in the creation of chatbots and virtual assistants. Using LLMs, developers can build chatbots that can understand natural language and respond in kind, making for a more human-like interaction.

Similarly, virtual assistants like Siri or Alexa rely on language models to parse and understand spoken commands. This allows them to perform a wide range of tasks, from setting reminders to ordering food.

Another application of LLMs is in text generation and summarization. For example, LLMs can be used to summarize long documents into a few key points.

LLMs can also be used to build spam filters, perform sentiment analysis, and even generate product reviews.

Key Players in the LLM Landscape

The evolution of LLMs has attracted the attention of researchers and developers across the world. With Falcon LLM setting new standards for natural language processing, it is worth keeping the other key players in the field in mind.

GPT-3

GPT-3, or Generative Pre-trained Transformer 3, is one of the best-known LLMs to date.

Developed by OpenAI, GPT-3 employs a transformer-based architecture, which enables it to process vast amounts of training data. With 175 billion parameters, GPT-3 was one of the largest language models at the time of its release in 2020.

One of GPT-3's most significant strengths is its versatility. It can perform a range of tasks such as text generation, question answering, and even translation with considerable accuracy. A notable application is its use in chatbots that hold human-like conversations.

Megatron-Turing NLG

Megatron-Turing NLG is another notable LLM, known for its abilities in natural language generation (NLG).

Developed jointly by NVIDIA and Microsoft, Megatron-Turing NLG has 530 billion parameters, making it one of the largest models built for NLG.

One of Megatron-Turing NLG's key features is its ability to generate natural-sounding text based on a given prompt or input.

For instance, it can be used for document summarization or sentence recommendation, among other applications.

Comparison Table Between Falcon LLM, GPT-3 and Megatron-Turing NLG

The table below summarizes the key details for Falcon LLM, GPT-3, and Megatron-Turing NLG:

| LLM | Creator/Developer | Parameters | Training Data |
| --- | --- | --- | --- |
| Falcon LLM | Technology Innovation Institute (TII) | 7 billion and 40 billion (two variants) | Primarily RefinedWeb, a filtered web dataset |
| GPT-3 | OpenAI | 175 billion | Web-scraped data from various sources |
| Megatron-Turing NLG | NVIDIA and Microsoft | 530 billion | Web-scraped data from various sources |

It's worth noting that although GPT-3 and Megatron-Turing NLG have far more parameters, Falcon LLM is a capable model in its own right.

Falcon LLM's distinguishing feature is its openness to customization: because it is openly released, it can be fine-tuned to suit the specific requirements of an industry.

In contrast, Megatron-Turing NLG's focus on NLG makes it an excellent choice for creating human-like chatbots and text generation applications.

Falcon LLM: Strengths and Weaknesses

Falcon LLM is a rising contender in the landscape of large-scale language models. This section will delve into the strengths and limitations of Falcon LLM, shedding light on what makes it stand out and where it might fall short.

Strengths of Falcon LLM

The strengths of Falcon LLM are the following:

Efficiency

One of the primary strengths of Falcon LLM is its efficiency. With variants of 7 billion and 40 billion parameters, it strikes a balance between capability and the computational resources required for training and deployment.

This efficiency allows for faster processing and lower infrastructure costs, making it an attractive choice for various applications.

Focus on Comprehension and Learning

Falcon LLM places a strong emphasis on comprehension and learning.

Leveraging deep learning, it can be fine-tuned on customized data to understand specific domains or industries. This focused approach enhances its ability to comprehend and generate natural language text within those specific contexts.

Open-Source Nature

Falcon LLM's open-source nature is another noteworthy feature.

With an open-source model, developers and researchers have access to the code and its underlying architecture. This transparency fosters collaboration and innovation within the development community, enabling the model to continually improve and adapt to emerging needs.

Limitations of Falcon LLM

The limitations of Falcon LLM are the following:

Smaller Model Size

Compared to some of its competitors, Falcon LLM has a smaller model size.

While 7 billion or 40 billion parameters still allow for robust language processing, larger models like GPT-3 with 175 billion parameters may have an advantage in terms of capturing a more comprehensive understanding of language.

The smaller model size of Falcon LLM can be a limiting factor in certain use cases that require a broader knowledge base.

Potentially Less Diverse Capabilities

Given its customized nature, Falcon LLM may have a narrower scope of capabilities compared to more general-purpose language models.

While it excels in specific domains or industries it has been trained on, its adaptability to a wide range of tasks outside its specialized area may not be as extensive.

Users seeking a highly versatile language model for diverse applications might need to consider these limitations.

Comparing Falcon LLM with Other LLMs

Now, let's compare Falcon LLM with other large-scale language models and evaluate their performance in relation to Falcon LLM's strengths.

Efficiency

When it comes to efficiency, Falcon LLM's smaller model size allows for faster processing and lower resource requirements.

For example, GPT-3, with its massive 175 billion parameters, is a highly accurate model but demands significantly more computational resources.

Falcon LLM's efficiency becomes a notable advantage when there is a need for real-time or resource-constrained applications.

Comprehension and Learning

Falcon LLM's customized training data enables it to excel in specific domains.

For instance, in the financial industry, training Falcon LLM on finance-specific texts equips it with a deep understanding of financial jargon, making it well suited for tasks like financial document analysis or risk prediction. This domain-specific comprehension sets Falcon LLM apart from general-purpose models like GPT-3 or Megatron-Turing NLG.

Open-Source Nature

Falcon LLM's open-source nature encourages continuous community collaboration and improvement.

The open-source aspect allows developers to fine-tune the model according to their specific needs and also contributes to the development of additional pre-trained models tailored to unique industries or tasks.

In comparison, proprietary models like GPT-3 may have limitations in terms of community-driven modifications and customization.

Use Cases Where Falcon LLM Excels

Falcon LLM's efficiency and specialization make it an optimal choice for certain applications.

For instance, in customer support chatbots, Falcon LLM's ability to comprehend industry-specific language and its efficiency in real-time chat interactions make it a strong candidate.

Similarly, tasks involving sensitive data analysis, such as those in healthcare or legal domains, can benefit from Falcon LLM's customization and from the option to run an open model on your own infrastructure, which can make it easier to meet specific data privacy regulations.

However, it's important to acknowledge that Falcon LLM may not be the ideal choice for scenarios where a broader range of language understanding is required or when working with extensive and diverse datasets.

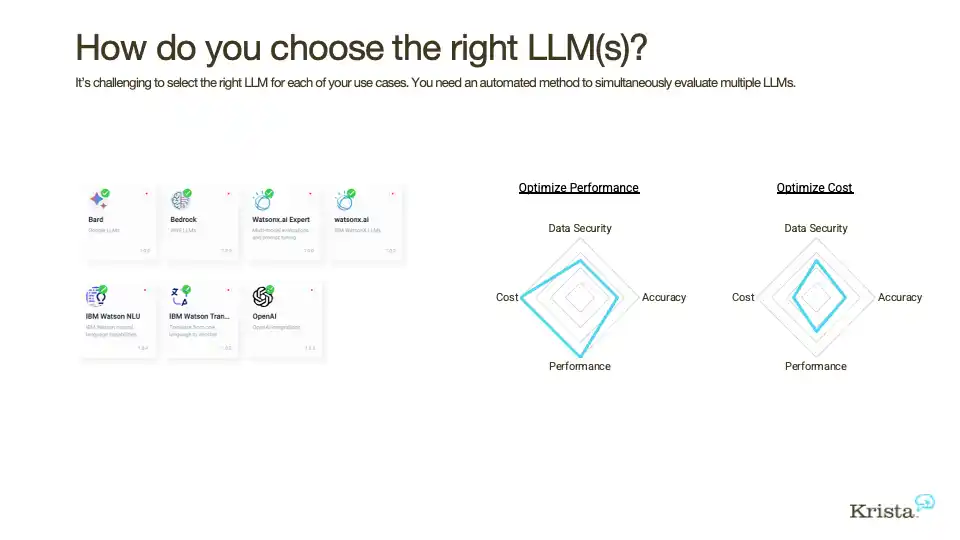

Choosing the Right LLM: Considerations

Choosing the right large-scale language model (LLM) depends on your specific needs and tasks. In this section, we will explore important factors to consider when selecting an LLM that aligns with your requirements.

Task Requirements

First and foremost, evaluate the specific tasks you need the LLM to perform.

Different LLMs excel in different areas. Are you looking for a general-purpose model that can handle a wide range of language processing tasks?

Or do you require a specialized model that is trained for a specific domain or industry? Identifying your task requirements will help narrow down the options and ensure the LLM you choose aligns with your objectives.

Accessibility

Consider whether the LLM you are evaluating is open-source or commercially available.

Open-source models, like Falcon LLM, provide transparency and the opportunity for customization and collaboration within the developer community.

On the other hand, commercially available models, such as GPT-3, may offer additional support, services, and pre-built applications that can simplify implementation.

Evaluate your preferences and resources to determine which accessibility option best suits your needs.

Computational Resources

Assess the computing power available to you. Large-scale language models can be computationally expensive.

Models like GPT-3, with billions of parameters, require substantial resources for training and deployment.

If you have limited computational resources, a smaller model like Falcon LLM may be more suitable, offering a balance between efficiency and performance.

Consider the scalability and infrastructure requirements when deciding on an LLM that fits your resource constraints.
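A rough way to size the hardware requirement is to multiply the parameter count by the bytes stored per parameter. The sketch below assumes 16-bit weights (2 bytes each) and counts only the weights themselves, ignoring activations, caches, and any training overhead, so treat the results as a lower bound. The 7-billion-parameter figure is a hypothetical example of a smaller model.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

# 175 billion parameters, the figure cited for GPT-3 in this article.
gpt3_gb = weight_memory_gb(175e9)

# Hypothetical smaller model with 7 billion parameters.
small_model_gb = weight_memory_gb(7e9)

print(f"175B parameters: ~{gpt3_gb:.0f} GB of weights")
print(f"7B parameters:   ~{small_model_gb:.0f} GB of weights")
```

Under these assumptions the larger model needs about 25 times more memory for its weights, which is why it typically has to be split across several accelerators while a smaller model may fit on one.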

Ethical Considerations

Be mindful of ethical considerations and potential biases inherent in LLMs.

Language models learn from vast amounts of data, which may inadvertently reflect societal biases or prejudices.

Understand the limitations and potential biases of the LLMs you are considering. Look for models that have undergone rigorous fairness checks and evaluations.

Additionally, consider your data sources and ensure diverse representation to mitigate bias in your specific use case.

Evaluating Suitability

To evaluate which LLM is most suitable for your needs, consider the following steps:

Research: Gather information about available LLMs, their capabilities, and their limitations. Read documentation, blog posts, and research papers to get a comprehensive understanding of each LLM's strengths and weaknesses.

Use Case Analysis: Identify specific use cases that align with your requirements. Evaluate previous successful implementations of LLMs in similar scenarios to gauge their effectiveness.

Experimentation: Conduct small-scale experiments using sample data or limited resources to test the performance of different LLMs in your chosen use cases. Compare their outputs, efficiency, and adaptability to assess their fit.

Community Feedback: Engage with the developer community and seek feedback from experts who have experience with the LLMs you are considering. Discuss their insights, challenges, and recommendations to gain a broader perspective.

Pilot Deployment: Select one or a few LLMs that appear to be the best fit based on your evaluation. Conduct a pilot deployment to evaluate real-world performance, scalability, and user feedback.
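The experimentation step above can be sketched as a small harness that sends the same prompts to each candidate model and records a simple quality signal alongside latency. Everything here is an assumption for illustration: `model_a` and `model_b` are hypothetical stand-ins for real model calls, and the keyword check is a deliberately crude proxy for output quality.

```python
import time

def evaluate_model(generate_fn, prompts, expected_keywords):
    """Run each prompt through generate_fn; report keyword hit rate and average latency."""
    hits = 0
    start = time.perf_counter()
    for prompt, keyword in zip(prompts, expected_keywords):
        output = generate_fn(prompt)
        if keyword.lower() in output.lower():
            hits += 1
    elapsed = time.perf_counter() - start
    return {
        "hit_rate": hits / len(prompts),
        "avg_seconds": elapsed / len(prompts),
    }

# Hypothetical stand-ins; replace these with calls to the models under test.
def model_a(prompt):
    return "You can reset your password from the account settings page."

def model_b(prompt):
    return "Please contact support."

prompts = ["How do I reset my password?", "Where do I change my password?"]
expected_keywords = ["settings", "settings"]

results = {
    name: evaluate_model(fn, prompts, expected_keywords)
    for name, fn in [("model_a", model_a), ("model_b", model_b)]
}
for name, metrics in results.items():
    print(name, metrics)
```

In a real comparison you would use prompts drawn from your own use case and a more careful quality measure, such as human review of a sample of outputs.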

By following these steps and considering task requirements, accessibility, computational resources, and ethical considerations, you can make an informed decision when choosing the right LLM for your specific needs.

Remember, there is no "one-size-fits-all" LLM solution. Selecting the most suitable LLM involves careful consideration of various aspects to ensure it aligns with your objectives and provides the desired language processing capabilities.
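One way to combine the four considerations from this section is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1-to-5 scores, and the candidate names `model_x` and `model_y` are all made up, and you would substitute values that reflect your own priorities and findings.

```python
def weighted_score(scores, weights):
    """Weighted average of per-criterion scores."""
    total_weight = sum(weights.values())
    return sum(scores[criterion] * weights[criterion] for criterion in weights) / total_weight

# Hypothetical weights: how much each consideration matters to you.
weights = {
    "task_fit": 0.4,
    "accessibility": 0.2,
    "compute_cost": 0.3,
    "ethics": 0.1,
}

# Hypothetical scores on a 1 (poor) to 5 (excellent) scale.
candidates = {
    "model_x": {"task_fit": 3, "accessibility": 5, "compute_cost": 4, "ethics": 3},
    "model_y": {"task_fit": 5, "accessibility": 3, "compute_cost": 2, "ethics": 3},
}

ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)
for name in ranking:
    print(name, round(weighted_score(candidates[name], weights), 2))
```

Changing the weights changes the ranking, which reflects the point that no single model is the best choice for every situation.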

Conclusion

In conclusion, the comparative analysis between Falcon LLM and other language models highlights the unique strengths and limitations of Falcon LLM. Its efficiency, focus on comprehension, and open-source nature make it a compelling choice for specific applications.

However, the smaller model size and specialized training data may limit its adaptability to diverse tasks. Ultimately, the selection of the most suitable language model depends on the specific needs, task requirements, accessibility preferences, computational resources, and ethical considerations of the user.

Taking these factors into account ensures informed decision-making for harnessing the power of large-scale language models in real-world applications.

The proliferation of LLMs is an exciting development in the field of natural language processing. While Falcon LLM sets a new standard, GPT-3 and Megatron-Turing NLG remain notable models in the market. By understanding their strengths and unique features, you can identify the best model for your needs and applications.

Frequently Asked Questions (FAQs)

What makes Falcon LLM different from other language models?

Falcon LLM stands out with its efficiency, focus on comprehension, and open-source nature, making it attractive for specific applications.

How does Falcon LLM compare in model size to other language models?

With variants of 7 billion and 40 billion parameters, Falcon LLM is smaller than models like GPT-3, which has 175 billion parameters. However, this smaller size comes with its own benefits, such as improved efficiency.

Can I customize Falcon LLM to better suit my specific needs?

As an open-source model, Falcon LLM allows developers to access its code and customize it according to their requirements, fostering collaboration and adaptability within the development community.

Are there specific use cases where Falcon LLM outperforms other language models?

Falcon LLM shines in use cases like customer support chatbots or sensitive data analysis where its comprehension, efficiency, and customization capabilities make it a strong contender. However, for broader language understanding or extensive and diverse datasets, other models may be more suitable.