What is Topic Modeling?

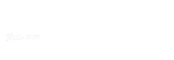

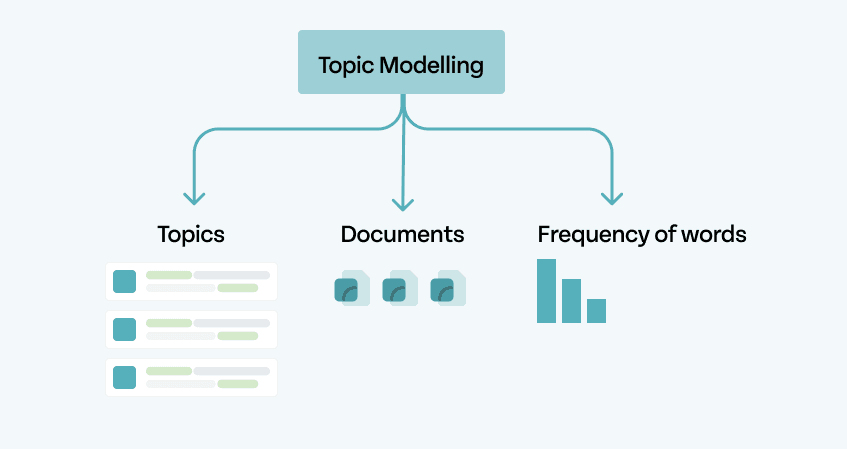

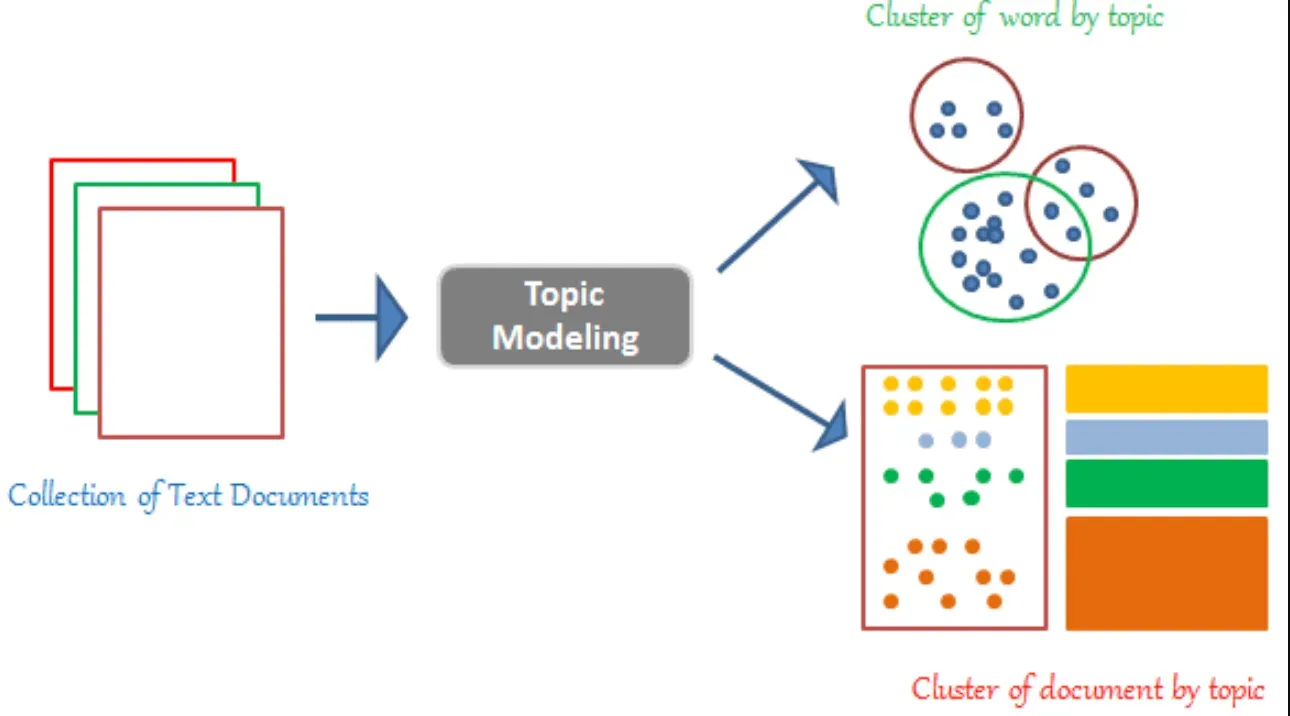

Topic Modeling is a technique used in natural language processing and machine learning to identify and extract topics or themes from a collection of documents.

It helps in discovering hidden patterns and structures within the text data, allowing us to gain insights and understand the underlying themes present in the documents.

Why is Topic Modeling Important?

Topic Modeling plays a crucial role in various domains, such as text mining, information retrieval, recommendation systems, and content analysis.

By automatically categorizing large amounts of text into meaningful topics, it simplifies the process of organizing and analyzing textual data, making it easier to extract valuable information and draw meaningful conclusions.

Techniques for Topic Modeling

Latent Dirichlet Allocation (LDA)

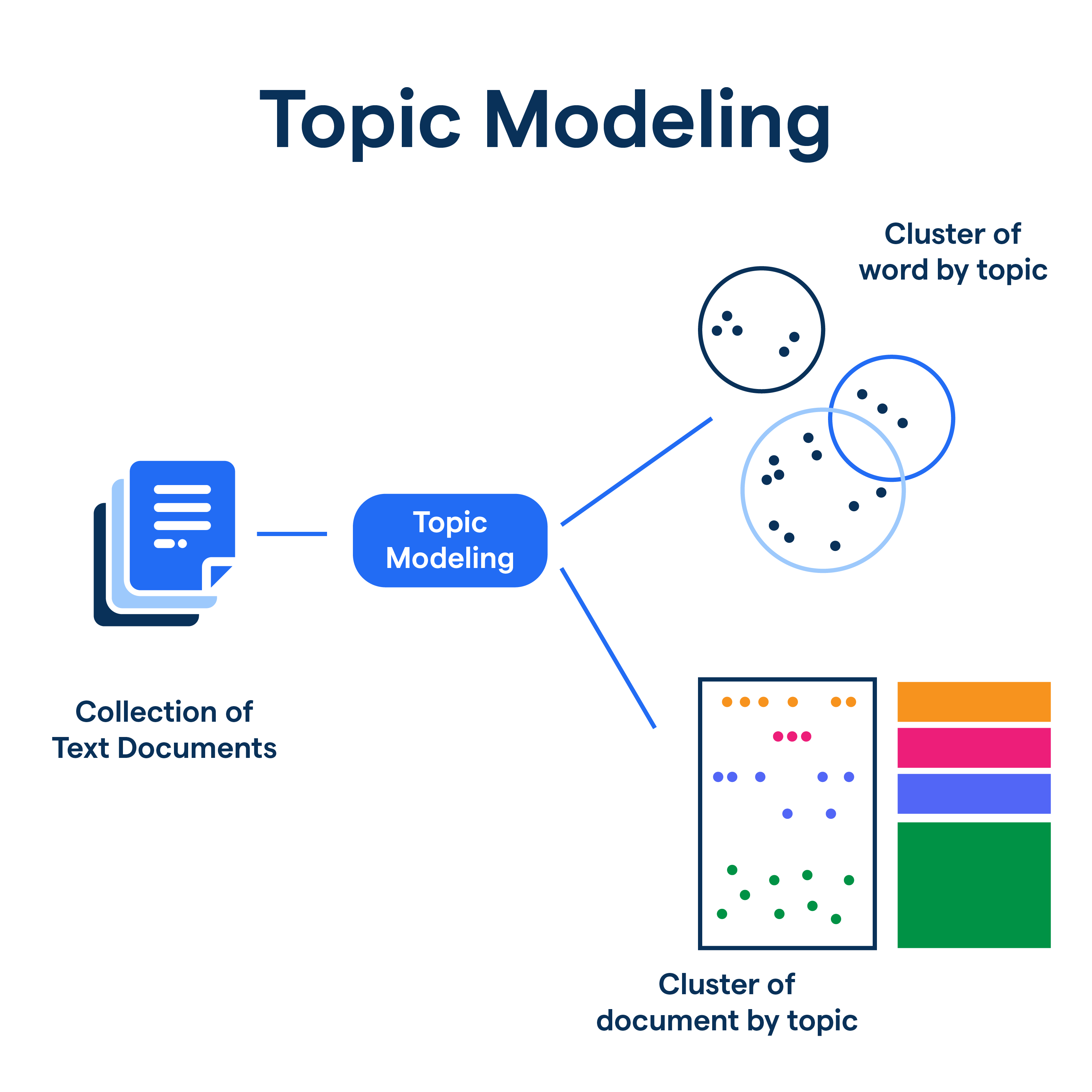

Latent Dirichlet Allocation (LDA) is a probabilistic generative model used for topic modeling. It assumes that each document is a mixture of multiple topics, and each topic is a distribution over words.

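LDA infers these hidden structures from the observed words, typically with Gibbs sampling or variational inference. It repeatedly re-estimates which topic each word most likely came from and how topics are mixed within each document, until the estimates stabilize. The output is two sets of probability distributions: the words associated with each topic, and the topic proportions of each document.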
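To make the iterative assignment concrete, here is a minimal sketch of collapsed Gibbs sampling, one common way to fit LDA. MALLET uses a sampling approach, while Gensim's `LdaModel` uses online variational Bayes instead. The sketch uses only the Python standard library. The function name `lda_gibbs`, the toy corpus, and the default values for `alpha`, `beta`, and the iteration count are illustrative assumptions, not settings taken from any particular toolkit.

```python
import random
from collections import defaultdict


def lda_gibbs(docs, num_topics, alpha=0.1, beta=0.01, iterations=200, seed=0):
    """Fit LDA to tokenized documents with collapsed Gibbs sampling (illustrative sketch)."""
    rng = random.Random(seed)
    vocab = sorted({w for doc in docs for w in doc})
    V = len(vocab)
    topics = range(num_topics)

    # Count tables: tokens per (document, topic), per (topic, word), and per topic.
    n_dk = [[0] * num_topics for _ in docs]
    n_kw = [defaultdict(int) for _ in topics]
    n_k = [0] * num_topics

    # Start from a random topic assignment for every token.
    z = []
    for d, doc in enumerate(docs):
        z.append([])
        for w in doc:
            k = rng.randrange(num_topics)
            z[d].append(k)
            n_dk[d][k] += 1
            n_kw[k][w] += 1
            n_k[k] += 1

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove the token's current assignment from the counts.
                k = z[d][i]
                n_dk[d][k] -= 1
                n_kw[k][w] -= 1
                n_k[k] -= 1
                # Resample its topic: p(z = t | rest) is proportional to
                # (doc-topic count + alpha) * smoothed p(w | t).
                weights = [
                    (n_dk[d][t] + alpha) * (n_kw[t][w] + beta) / (n_k[t] + V * beta)
                    for t in topics
                ]
                k = rng.choices(topics, weights=weights)[0]
                z[d][i] = k
                n_dk[d][k] += 1
                n_kw[k][w] += 1
                n_k[k] += 1

    # Per-document topic proportions (theta) and per-topic word distributions (phi).
    theta = [
        [(n_dk[d][k] + alpha) / (len(doc) + num_topics * alpha) for k in topics]
        for d, doc in enumerate(docs)
    ]
    phi = [{w: (n_kw[k][w] + beta) / (n_k[k] + V * beta) for w in vocab} for k in topics]
    return theta, phi


docs = [
    "goal team match coach goal".split(),
    "team match win coach ball".split(),
    "stock market price shares trade".split(),
    "market investor price stock fund".split(),
]
theta, phi = lda_gibbs(docs, num_topics=2)
for k, dist in enumerate(phi):
    print(k, sorted(dist, key=dist.get, reverse=True)[:3])
```

With a corpus this small, the topics found can vary with the random seed. The sketch is meant to show the mechanics, not to replace a library implementation.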

LDA is widely used in natural language processing and text mining to analyze and extract meaningful topics from large volumes of text data.

TextRank

TextRank is an algorithm used for extractive summarization and keyword extraction from text.

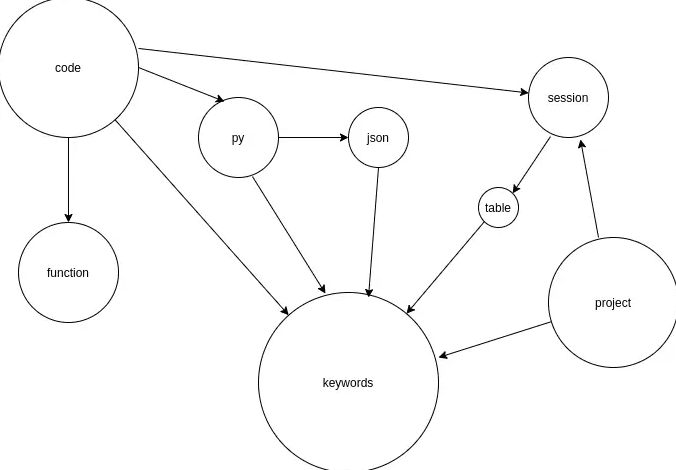

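Inspired by Google's PageRank algorithm, TextRank assigns importance scores to words or sentences based on how strongly they are connected to other words or sentences in the same document.

It creates a graph representation of the text, with words or sentences as nodes. For keyword extraction, edges link words that co-occur within a small window of text. For summarization, edges link sentences according to how similar their content is.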

By iteratively updating the importance scores, TextRank identifies the most important words or sentences that capture the key information or summarize the document effectively.

TextRank is widely used in natural language processing and information retrieval tasks to automatically generate summaries or extract important keywords from text.
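The keyword variant can be sketched in plain Python as follows. This is a simplified illustration under stated assumptions: `textrank_keywords` is a hypothetical helper, the window size and iteration count are arbitrary choices, and unlike the original TextRank paper it does not filter candidate words by part of speech. The damping factor 0.85 is the value commonly used for PageRank.

```python
from collections import defaultdict


def textrank_keywords(tokens, window=2, damping=0.85, iterations=50, top_n=5):
    """Rank words with TextRank over a word co-occurrence graph (illustrative sketch)."""
    # Link distinct words that co-occur within a sliding window of `window` consecutive tokens.
    neighbors = defaultdict(set)
    for i, word in enumerate(tokens):
        for other in tokens[i + 1 : i + window]:
            if other != word:
                neighbors[word].add(other)
                neighbors[other].add(word)

    # PageRank-style updates: a word is important if it is linked to important words.
    scores = {word: 1.0 for word in neighbors}
    for _ in range(iterations):
        scores = {
            word: (1 - damping)
            + damping * sum(scores[n] / len(neighbors[n]) for n in neighbors[word])
            for word in neighbors
        }
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Tokens are assumed to be already lowercased and stripped of stop words.
tokens = "topic model text topic corpus topic word".split()
print(textrank_keywords(tokens, top_n=3))
```

The sentence-level variant uses the same scoring loop, but builds the graph from pairwise sentence similarity instead of word co-occurrence.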

Topic Modeling Toolkits

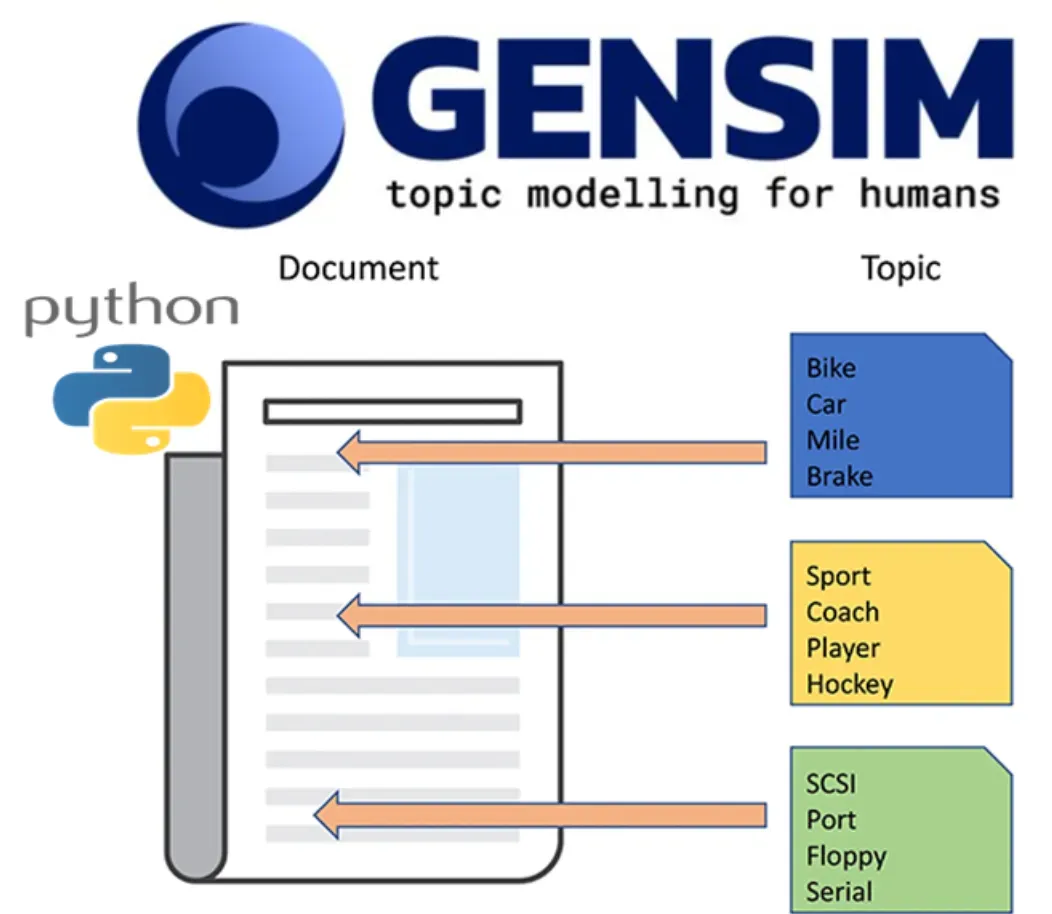

Gensim

Gensim is a popular Python library for topic modeling. It provides efficient implementations of various topic modeling algorithms, including LDA.

Gensim offers scalability, easy integration with other natural language processing tools, and a user-friendly API for topic modeling.

Stanford Topic Modeling Toolbox (TMT)

TMT is a topic modeling toolkit developed by the Stanford Natural Language Processing Group and written in Scala.

It supports LDA as well as related models such as Labeled LDA, and provides functionality for preprocessing text, training models, and evaluating the resulting topics.

MALLET

MALLET (Machine Learning for Language Toolkit) is a Java-based toolkit for statistical natural language processing. Its topic modeling component provides fast, sampling-based implementations of LDA and related models such as Pachinko Allocation and hierarchical LDA.

It offers a command-line interface and can handle large-scale text data efficiently.

Applications of Topic Modeling

Customer Experience and Sentiment Analysis

Topic modeling can be combined with sentiment analysis to understand and analyze customer feedback or reviews.

By pairing a topic modeling technique such as Latent Dirichlet Allocation (LDA) with sentiment analysis, one can extract the topics discussed in customer feedback and determine the sentiment associated with each topic.

This approach helps identify the main themes within the feedback and assess the overall sentiment expressed by customers towards those topics.

It enables businesses to gain insights into customer preferences, identify areas of improvement, and make data-driven decisions to enhance customer experience.

Together, these techniques help organizations better understand and respond to customer needs and sentiments.
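Once each review has a dominant topic and a sentiment score, determining the sentiment associated with each topic is a simple aggregation. The sketch below assumes both have already been computed, for example a topic from an LDA model (labeled by a person) and a score in [-1, 1] from any sentiment classifier. The topic labels, scores, and the `sentiment_by_topic` helper are made up for illustration.

```python
from collections import defaultdict

# Hypothetical per-review results: dominant topic and sentiment score in [-1, 1].
reviews = [
    {"topic": "delivery", "sentiment": -0.8},
    {"topic": "delivery", "sentiment": -0.4},
    {"topic": "price", "sentiment": 0.6},
    {"topic": "price", "sentiment": 0.2},
    {"topic": "support", "sentiment": 0.9},
]


def sentiment_by_topic(reviews):
    """Return {topic: (number of reviews, mean sentiment)}."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for review in reviews:
        totals[review["topic"]] += review["sentiment"]
        counts[review["topic"]] += 1
    return {topic: (counts[topic], totals[topic] / counts[topic]) for topic in totals}


summary = sentiment_by_topic(reviews)
# List topics from most negative to most positive to spot areas for improvement.
for topic, (n, mean) in sorted(summary.items(), key=lambda item: item[1][1]):
    print(f"{topic}: {n} reviews, mean sentiment {mean:+.2f}")
```

Reporting the review count next to the mean helps separate frequently discussed problems from isolated complaints.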

Market Research and Trend Analysis

Market research involves collecting and interpreting data on customers, competitors, and industry trends to make informed business decisions.

Trend analysis focuses on identifying patterns over time and anticipating future market developments.

Topic modeling supports both by condensing large volumes of unstructured text, such as survey responses, product reviews, social media posts, and news articles, into a manageable set of themes. Tracking how the prevalence of each topic changes from one period to the next can reveal emerging or declining interests.

With these insights, businesses can identify opportunities, understand customer needs, stay ahead of competitors, and make strategic decisions that align with market trends.
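One simple way to apply topic modeling to trend analysis is to average each document's topic proportions within a time period and compare periods. This is a sketch under assumptions: the topic proportions would come from any fitted topic model, and the numbers, period labels, and `topic_prevalence_by_period` helper are hypothetical.

```python
from collections import defaultdict


def topic_prevalence_by_period(doc_topics, periods):
    """Average the per-document topic proportions within each time period."""
    sums = {}
    counts = defaultdict(int)
    for dist, period in zip(doc_topics, periods):
        if period not in sums:
            sums[period] = [0.0] * len(dist)
        sums[period] = [s + p for s, p in zip(sums[period], dist)]
        counts[period] += 1
    # Labels like "2023-Q1" sort chronologically as plain strings.
    return {period: [s / counts[period] for s in sums[period]] for period in sorted(sums)}


# Hypothetical topic proportions for four documents (two topics each) and their quarters.
doc_topics = [[0.9, 0.1], [0.7, 0.3], [0.2, 0.8], [0.4, 0.6]]
periods = ["2023-Q1", "2023-Q1", "2023-Q2", "2023-Q2"]

prevalence = topic_prevalence_by_period(doc_topics, periods)
first, last = prevalence["2023-Q1"], prevalence["2023-Q2"]
# Positive change means the topic is growing; negative means it is fading.
change = [b - a for a, b in zip(first, last)]
print(prevalence, change)
```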

Benefits of Topic Modeling

Topic Discovery

Topic modeling helps in discovering hidden themes or topics within a large collection of documents.

It organizes and groups similar documents together based on their content, making it easier to explore and analyze a large amount of textual data.
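A common way to turn topic proportions into groups of similar documents is to assign each document to its dominant topic. The sketch below assumes the proportions come from an already fitted model; the numbers and the `group_by_dominant_topic` helper are hypothetical.

```python
from collections import defaultdict


def group_by_dominant_topic(doc_topics):
    """Map each topic index to the documents whose largest proportion is that topic."""
    groups = defaultdict(list)
    for doc_id, dist in enumerate(doc_topics):
        dominant = max(range(len(dist)), key=lambda k: dist[k])
        groups[dominant].append(doc_id)
    return dict(groups)


# Hypothetical topic proportions for three documents over two topics.
doc_topics = [[0.8, 0.2], [0.1, 0.9], [0.6, 0.4]]
print(group_by_dominant_topic(doc_topics))  # {0: [0, 2], 1: [1]}
```

This hard assignment is a simplification: LDA-style models treat documents as mixtures, so a document can legitimately belong to several topics.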

Text Summarization

Topic modeling can be used to generate summaries of documents by extracting the most important topics or representative sentences from each topic.

This enables a quick understanding of the main themes and key points without having to read every single document.
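The "representative sentences" idea can be sketched by picking, for each topic, the sentence with the highest proportion of that topic. This assumes the model has already produced a topic distribution per sentence (for example by treating each sentence as a short document); the sentences, proportions, and `representative_sentences` helper are hypothetical.

```python
def representative_sentences(sentences, sentence_topics):
    """For each topic, pick the sentence with the highest proportion of that topic."""
    num_topics = len(sentence_topics[0])
    summary = {}
    for k in range(num_topics):
        best = max(range(len(sentences)), key=lambda i: sentence_topics[i][k])
        summary[k] = sentences[best]
    return summary


sentences = [
    "The team won the match with a late goal.",
    "Share prices fell as the market reacted to the news.",
    "Fans celebrated the victory in the streets.",
]
# Hypothetical topic proportions per sentence (topic 0 ~ sports, topic 1 ~ finance).
sentence_topics = [[0.9, 0.1], [0.05, 0.95], [0.7, 0.3]]
for topic, sentence in representative_sentences(sentences, sentence_topics).items():
    print(topic, sentence)
```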

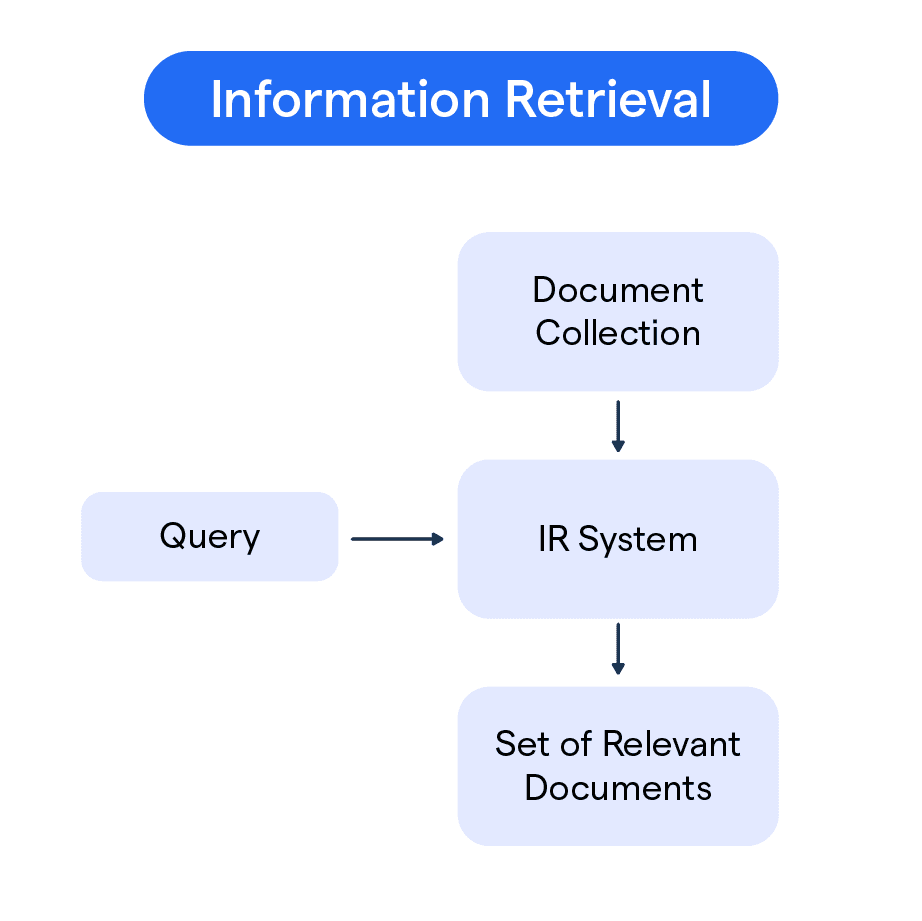

Information Retrieval

Topic modeling aids in improving the search and retrieval of information by assigning relevant topics to documents.

It allows users to find documents related to specific topics of interest, leading to more efficient and accurate information retrieval.
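Topic-based retrieval can be sketched by comparing the topic distribution of a query with that of each document and ranking by distance. Here the Hellinger distance is used, a common choice for comparing probability distributions. The sketch assumes the query's topic distribution has already been inferred with the same trained model; the numbers and helper names are hypothetical.

```python
import math


def hellinger(p, q):
    """Hellinger distance between two discrete distributions (0 = identical, 1 = disjoint)."""
    return math.sqrt(0.5 * sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q)))


def rank_by_topic_similarity(query_topics, doc_topics):
    """Return document indices ordered from most to least similar to the query."""
    return sorted(range(len(doc_topics)), key=lambda i: hellinger(query_topics, doc_topics[i]))


# Hypothetical topic proportions: the query is mostly about topic 0.
query = [0.9, 0.1]
doc_topics = [[0.2, 0.8], [0.85, 0.15], [0.5, 0.5]]
print(rank_by_topic_similarity(query, doc_topics))  # [1, 2, 0]
```

In practice, topic similarity is often combined with keyword matching rather than used alone.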

Content Recommendation

By identifying topics, topic modeling can also be leveraged to provide personalized content recommendations to users.

It enables content providers to suggest relevant articles, products, or services based on topics that match user preferences.
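A minimal content-based recommender along these lines builds a user profile as the average topic distribution of the items the user engaged with, then suggests unseen items whose topic mix is closest by cosine similarity. This is a sketch under assumptions; the item IDs, proportions, and `recommend` helper are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def recommend(item_topics, history, top_n=3):
    """Recommend unseen items whose topic mix is closest to the user's profile."""
    num_topics = len(next(iter(item_topics.values())))
    # The user profile is the average topic distribution of items in their history.
    profile = [sum(item_topics[i][k] for i in history) / len(history) for k in range(num_topics)]
    candidates = [i for i in item_topics if i not in history]
    return sorted(candidates, key=lambda i: cosine(profile, item_topics[i]), reverse=True)[:top_n]


# Hypothetical topic proportions for five articles over three topics.
item_topics = {
    "a": [0.9, 0.1, 0.0],
    "b": [0.8, 0.2, 0.0],
    "c": [0.1, 0.1, 0.8],
    "d": [0.7, 0.3, 0.0],
    "e": [0.0, 0.2, 0.8],
}
print(recommend(item_topics, history=["a", "b"], top_n=1))  # ['d']
```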

Data-Driven Decision Making

Topic modeling provides valuable insights into the underlying structure of text data. It helps in understanding the distribution, prevalence, and interrelationships of different topics.

These insights support data-driven decision-making by enabling businesses to identify trends, gain market intelligence, and optimize strategies and processes based on the topics that matter to their customers or domain.

Limitations of Topic Modeling

Ambiguity

Topic modeling algorithms may struggle with ambiguous terms or phrases that could be associated with multiple topics.

This can lead to misinterpretation or mislabeling of topics, reducing the accuracy of the results.

Overfitting

If a topic model is trained on a small or biased dataset, it may overfit.

Overfitting occurs when the model becomes too specific to the training data and performs poorly when applied to new and unseen data.

Lack of Context

Many topic models, including LDA, treat each document as a "bag of words": they count which words appear but ignore word order, syntax, and the wider context in which the words are used.

As a result, they can produce topics that are not conceptually meaningful or are hard to interpret outside of the model.
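A quick illustration of what a bag-of-words representation, as used by LDA-style models, discards: two sentences with opposite meanings produce identical word counts. The `bag_of_words` helper is a hypothetical simplification; real pipelines also handle punctuation and build a fixed vocabulary.

```python
from collections import Counter


def bag_of_words(text):
    """Represent a text only by its word counts, ignoring word order."""
    return Counter(text.lower().split())


a = bag_of_words("The dog bit the man")
b = bag_of_words("The man bit the dog")
print(a == b)  # True: the two sentences are indistinguishable to a bag-of-words model
```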

Human Interpretation

The output of topic modeling is typically a list of topics with associated words.

Interpreting these topics still relies heavily on human judgment, and different people may label or understand the same topic differently.

Scalability

Some topic modeling algorithms may struggle to handle large-scale datasets efficiently.

As the size and complexity of the data increase, the computational requirements and processing time for topic modeling can become significant, making it less practical for certain applications.

How to Overcome Limitations in Topic Modeling?

Preprocessing and Feature Engineering

Preprocessing the text data, for example by removing stop words and stemming or lemmatizing words, reduces noise and merges variant word forms, which generally makes topics cleaner and easier to interpret.

Feature engineering, such as merging frequent multi-word phrases into single tokens, incorporating domain-specific knowledge (for example, custom stop-word lists or vocabularies), or constructing custom language models, can further improve topic modeling results.
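A minimal preprocessing and phrase-merging pipeline might look like the sketch below. Everything here is an illustrative assumption: the stop-word list is deliberately tiny, `normalize` is a crude suffix stripper standing in for a real stemmer or lemmatizer, and bigrams are detected by raw counts. Gensim's `Phrases` model performs phrase detection more robustly using collocation scores.

```python
import re
from collections import Counter

# A tiny illustrative stop-word list; real pipelines use a fuller list
# (for example the ones shipped with NLTK, spaCy, or Gensim).
STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
              "was", "were", "for", "on", "with", "it", "this", "that"}


def normalize(token):
    """Crude suffix stripping, standing in for a real stemmer or lemmatizer."""
    for suffix in ("ing", "ed", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Lowercase, tokenize on letters, drop stop words, and normalize word forms."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [normalize(t) for t in tokens if t not in STOP_WORDS]


def frequent_bigrams(docs, min_count=2):
    """Find adjacent word pairs that occur at least `min_count` times in the corpus."""
    counts = Counter(pair for doc in docs for pair in zip(doc, doc[1:]))
    return {pair for pair, c in counts.items() if c >= min_count}


def merge_bigrams(doc, bigrams):
    """Replace each detected bigram with a single token such as 'customer_service'."""
    merged, i = [], 0
    while i < len(doc):
        if i + 1 < len(doc) and (doc[i], doc[i + 1]) in bigrams:
            merged.append(doc[i] + "_" + doc[i + 1])
            i += 2
        else:
            merged.append(doc[i])
            i += 1
    return merged


texts = ["The customer service was slow", "Customer service answered quickly"]
docs = [preprocess(t) for t in texts]
bigrams = frequent_bigrams(docs)
print([merge_bigrams(doc, bigrams) for doc in docs])
```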

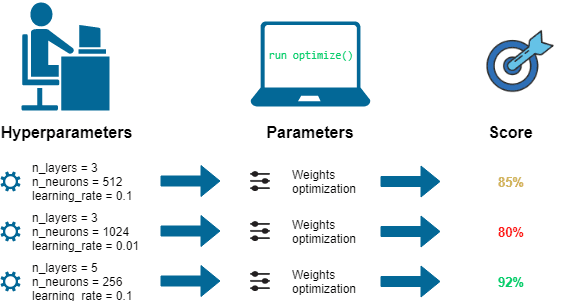

Regularization and Hyperparameter Tuning

Regularization techniques such as adding priors or applying smoothing can help combat overfitting in topic modeling algorithms.

Hyperparameter tuning, such as adjusting the number of topics or the Dirichlet priors alpha (on document-topic proportions) and beta (on topic-word distributions), can further improve the model's performance on unseen data.
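The smoothing effect of the topic-word prior beta (called `eta` in Gensim's `LdaModel` and `topic_word_prior` in scikit-learn's `LatentDirichletAllocation`) shows up directly in the estimate of a topic's word distribution, p(w | k) = (n_kw + beta) / (n_k + V * beta), where n_kw is the count of word w in topic k, n_k is the topic's total count, and V is the vocabulary size. The sketch below, with a hypothetical helper and made-up counts, compares an unsmoothed and a smoothed estimate. Alpha plays the analogous role for document-topic proportions.

```python
def smoothed_word_probs(word_counts, vocab, beta):
    """Estimate p(word | topic) from a topic's word counts under a symmetric Dirichlet prior.

    Each word receives `beta` pseudo-counts, so words never seen in the topic
    still get a small, non-zero probability.
    """
    total = sum(word_counts.get(w, 0) for w in vocab)
    denominator = total + len(vocab) * beta
    return {w: (word_counts.get(w, 0) + beta) / denominator for w in vocab}


# Hypothetical counts of words assigned to one "sports" topic.
vocab = ["goal", "team", "price", "stock"]
counts = {"goal": 3, "team": 1}

unsmoothed = smoothed_word_probs(counts, vocab, beta=0.0)
smoothed = smoothed_word_probs(counts, vocab, beta=0.5)
print(unsmoothed["price"], smoothed["price"])
```

A larger beta spreads probability more evenly across the vocabulary, which guards against overconfident estimates from sparse counts but can blur topics if set too high.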

Incorporating Domain Knowledge

Combining topic modeling with domain knowledge, expertise, or human input can enhance the interpretability and contextuality of the topics generated.

Subject matter experts can assist in refining topic models, assigning labels, or validating the results to ensure their coherence and relevance.

Ensemble Approaches

Instead of relying on a single topic modeling algorithm, ensemble approaches that combine multiple models or techniques can help overcome these limitations.

Ensemble methods, such as taking a weighted average of results or using a voting system, can improve the robustness and reliability of topic modeling outcomes.
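One way to apply the voting idea, sketched under assumptions: train the same model several times (for example with different random seeds), represent each topic by its set of top words, and keep only the topics that reappear in a majority of the other runs. Topics must be matched by content like this because topic indices are not consistent across runs. The `stable_topics` helper, the Jaccard threshold of 0.5, and the example topics are hypothetical.

```python
def jaccard(a, b):
    """Overlap between two sets of top words (1.0 = identical)."""
    return len(a & b) / len(a | b)


def stable_topics(runs, threshold=0.5):
    """Keep topics from the first run that a strict majority of the other runs vote for.

    runs: list of runs; each run is a list of topics; each topic is a set of top words.
    A run votes for a topic if any of its topics has Jaccard similarity >= threshold.
    With a single run there is nothing to vote, so no topic is confirmed.
    """
    reference, others = runs[0], runs[1:]
    min_votes = len(others) // 2 + 1
    stable = []
    for topic in reference:
        votes = sum(any(jaccard(topic, t) >= threshold for t in run) for run in others)
        if votes >= min_votes:
            stable.append(topic)
    return stable


run1 = [{"goal", "team", "match", "coach"}, {"stock", "price", "market", "fund"}, {"cat", "dog", "pet", "vet"}]
run2 = [{"goal", "team", "match", "win"}, {"stock", "price", "market", "trade"}, {"rain", "sun", "wind", "cloud"}]
run3 = [{"team", "goal", "match", "coach"}, {"price", "stock", "shares", "market"}, {"car", "road", "fuel", "engine"}]
print(stable_topics([run1, run2, run3]))
```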

Scalability and Optimization

To address scalability issues, implementing distributed computing frameworks or optimized algorithms specifically designed for large-scale topic modeling can be beneficial.

Techniques like parallel processing, incremental learning, or utilizing cloud-based services can improve the scalability and efficiency of topic modeling on big datasets.
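The incremental part of this advice can be sketched by streaming documents in fixed-size batches, so memory use stays bounded regardless of corpus size. The sketch only computes document frequencies, a typical first pass when building a vocabulary, and does not train a model. Gensim's `LdaModel` follows the same idea: it accepts a streamed corpus, processes it in chunks (`chunksize`), and can be updated with new documents via `update`, while `LdaMulticore` parallelizes training. The helper names and the `corpus.txt` file format below are hypothetical.

```python
from collections import Counter
from itertools import islice


def batches(iterable, size):
    """Yield lists of up to `size` items without materializing the whole stream."""
    it = iter(iterable)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def stream_documents(path):
    """Hypothetical corpus format: one whitespace-tokenized document per line."""
    with open(path, encoding="utf-8") as f:
        for line in f:
            yield line.split()


def incremental_doc_frequencies(doc_stream, batch_size=1000):
    """Count, batch by batch, how many documents contain each word."""
    df = Counter()
    for batch in batches(doc_stream, batch_size):
        for doc in batch:
            df.update(set(doc))
    return df


# In practice the stream would come from disk, e.g. stream_documents("corpus.txt");
# a small in-memory list stands in for it here.
docs = [["topic", "model"], ["topic", "text"], ["text", "mining", "text"]]
print(incremental_doc_frequencies(docs, batch_size=2))
```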

Frequently Asked Questions (FAQs)

Can Topic Modeling be applied to any type of text data?

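Yes, Topic Modeling is versatile and can be applied to many types of text data, including news articles, social media posts, academic papers, and customer reviews, although very short texts, such as individual social media posts, tend to produce less reliable topics.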

How do I choose the number of topics for my model?

Determining the ideal number of topics often requires iterative experimentation. Metrics such as perplexity and topic coherence, combined with human judgment, can guide this decision.
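As an example of a coherence metric, the UMass measure scores a topic's top words w_1, ..., w_M (most probable first) as the sum over i > j of log((D(w_i, w_j) + 1) / D(w_j)), where D counts the documents containing the given words. Higher scores mean the top words tend to appear in the same documents. The sketch below is a plain-Python illustration with a hypothetical helper and toy corpus; Gensim's `CoherenceModel` offers this measure (`coherence='u_mass'`) alongside others such as `c_v`. Computing the average coherence for models with different numbers of topics is one way to compare candidates.

```python
import math


def umass_coherence(top_words, docs):
    """UMass coherence of one topic's top words, listed from most to least probable.

    Higher scores indicate words that tend to appear in the same documents.
    Assumes every top word occurs in at least one document of `docs`.
    """
    doc_sets = [set(doc) for doc in docs]

    def doc_freq(*words):
        # Number of documents containing all of the given words.
        return sum(all(w in d for w in words) for d in doc_sets)

    score = 0.0
    for i in range(1, len(top_words)):
        for j in range(i):
            score += math.log((doc_freq(top_words[i], top_words[j]) + 1) / doc_freq(top_words[j]))
    return score


docs = [
    ["goal", "team", "match"],
    ["goal", "team", "coach"],
    ["stock", "price", "market"],
    ["stock", "market", "fund"],
]
print(umass_coherence(["goal", "team"], docs))   # words that co-occur
print(umass_coherence(["goal", "stock"], docs))  # unrelated words
```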

Are there user-friendly tools and libraries for Topic Modeling?

Yes, there are user-friendly libraries like Gensim and scikit-learn in Python that make it relatively easy to perform Topic Modeling. These libraries provide pre-built functions for common tasks.

What are the limitations of Topic Modeling?

Topic Modeling may not work well with very short texts, and the interpretability of topics can be challenging, particularly in cases where the documents are diverse in content.

How does Topic Modeling benefit content creators and marketers?

Content creators and marketers can use Topic Modeling to gain insights into audience interests, trends, and the most engaging topics, helping them tailor their content strategy to their target audience.