WHAT is BERTology?

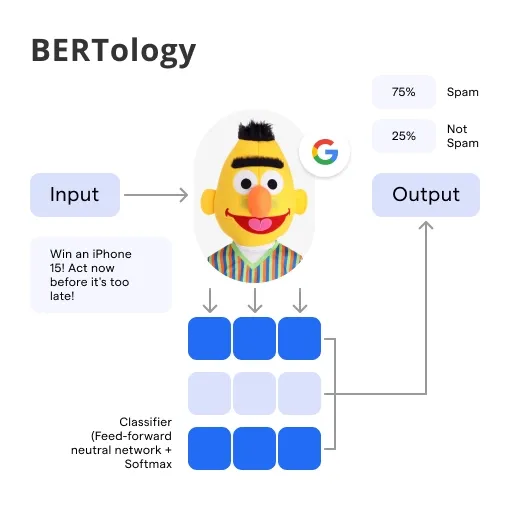

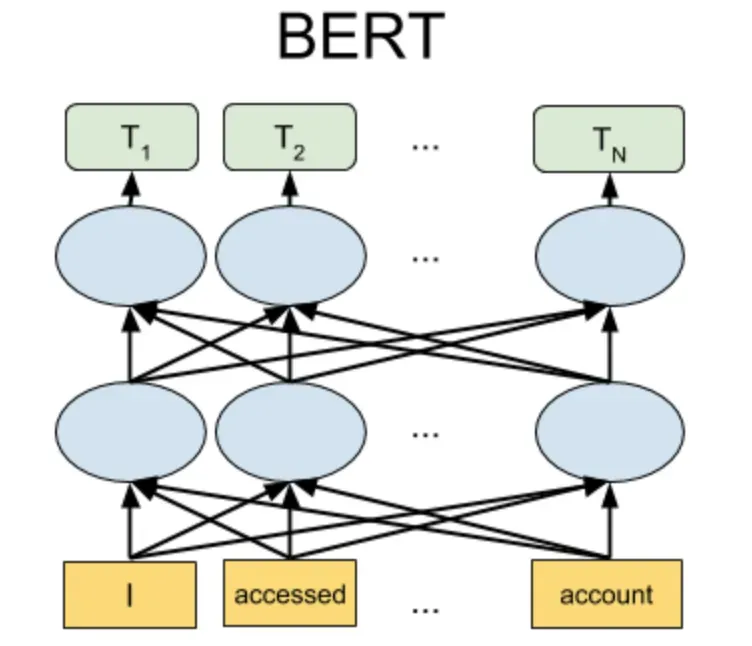

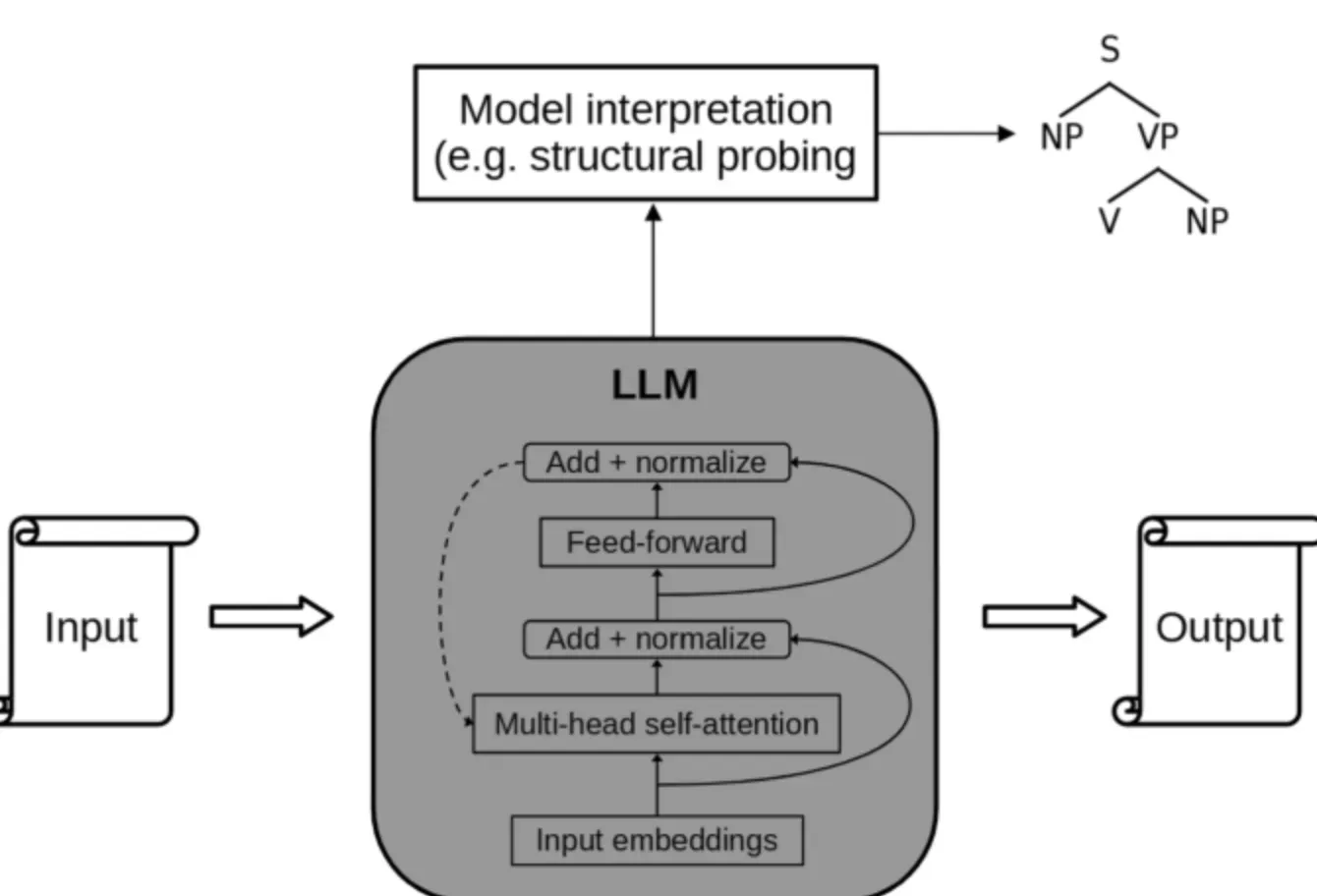

BERTology is a branch of study focusing on the understanding and further development of Google's BERT (Bidirectional Encoder Representations from Transformers) model and its numerous variations.

It encompasses a wide array of research areas, including Natural Language Processing (NLP), machine learning, linguistics, and more.

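BERT has revolutionized AI and NLP by enabling computers to understand human language more effectively. It builds on the Transformer architecture and is trained bidirectionally: during pre-training, some words in a sentence are hidden and the model learns to predict them from the context on both their left and their right. This is how it captures the meaning and intent behind words in a sentence.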
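To make the idea of bidirectional training more concrete, here is a minimal sketch of the masking step used in BERT's pre-training. It follows the rule described in the BERT paper (about 15% of tokens are selected; of those, 80% become `[MASK]`, 10% become a random token, and 10% stay unchanged). The function name `mask_tokens`, the toy vocabulary, and the whitespace tokenization are assumptions made for illustration; real BERT uses WordPiece tokens.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=0):
    """Return (corrupted_tokens, labels) using the 80/10/10 masking rule.

    labels[i] holds the original token where a prediction is required,
    and None everywhere else.
    """
    rng = random.Random(seed)
    corrupted, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)  # the model must recover this token
            roll = rng.random()
            if roll < 0.8:
                corrupted.append(MASK)               # 80%: replace with [MASK]
            elif roll < 0.9:
                corrupted.append(rng.choice(vocab))  # 10%: replace with a random token
            else:
                corrupted.append(tok)                # 10%: keep the token as-is
        else:
            labels.append(None)  # not selected, nothing to predict here
            corrupted.append(tok)
    return corrupted, labels


# Toy example: whitespace tokens stand in for real WordPiece tokens.
sentence = "the bank raised interest rates again this year".split()
toy_vocab = ["river", "money", "tree", "loan"]
corrupted, labels = mask_tokens(sentence, toy_vocab, mask_prob=0.15, seed=0)
```

Because the model sees the whole corrupted sentence at once, it can use words on both sides of a hidden position to guess it, which is what "bidirectional" refers to.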

BERTology fundamentally enables us to fine-tune BERT models to a specific language understanding task. This practice advances various technology fields, from search engines to chatbots, voice assistants, and automatic translators.

By unlocking advanced language understanding, BERTology helps create more efficient and intuitive AI services, leading to a more personalized and human-like digital experience.

WHY does BERTology Matter?

BERTology is not merely a buzzword; it's a critical domain with considerable implications in our digital age. Let's understand why it matters.

Building Smarter AI Systems

BERTology is instrumental in creating AI systems that understand and interact with human language. Systems trained using BERT have a deeper and wider language understanding, paving the way for a more advanced AI landscape.

Overcoming Language Barriers

BERTology has contributed significantly to breaking down language barriers. With BERTological advancements, seamless automatic translation and multilingual models have become a reality, fostering global communication.

Optimizing User Experience

BERTology plays a critical role in optimizing user experience in the digital realm. From accurate search results to efficient chatbots, the impact of BERTology is wide and diverse.

Fueling Digital Innovation

Lastly, BERTology is a powerful fuel for digital innovation. Combined with other technological advances, it brings next-level solutions and opens new avenues for future growth and innovation.

WHO benefits from BERTology?

BERTology isn't confined to just researchers and data scientists. Its benefits extend to a wide range of users and industries.

Consumers

Everyday internet users reap the benefits of BERTology each time they use a search engine, interact with a voice assistant, or engage with a smart chatbot.

Businesses and Organizations

Companies and organizations can leverage BERTology to provide superior digital services, refine customer conversation analysis, and enhance business intelligence.

AI Developers and Researchers

AI developers and researchers benefit significantly from BERTology as it provides a robust foundation for building more advanced models and applications.

SEO Specialists

With the adoption of BERT by Google, SEO specialists now need to understand BERTology to optimize content and websites more effectively.

WHEN did BERTology come into Existence?

To appreciate BERTology, it's beneficial to understand its historical context and evolution.

PRE-BERT Era

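Before BERT, models such as LSTMs (Long Short-Term Memory networks) and ELMo were used in natural language processing, but they had limitations. LSTMs read text one token at a time in a single direction, and ELMo combines separately trained left-to-right and right-to-left LSTMs rather than conditioning on both sides of a word jointly.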

The Advent of BERT

BERT was introduced by researchers at Google AI Language in 2018, marking a milestone in NLP.

The Rise of BERTology

The introduction of BERT sparked intense research and model variations, leading to the genesis of BERTology - the study of everything BERT.

BERTology Today

BERTology has evolved significantly since then, with researchers continuing to probe how BERT works and to develop more advanced BERT models.

WHERE can we Apply BERTology?

The application of BERTology isn't limited to a single space. Let's explore some potential avenues for its use:

Search Engines

Search engines like Google have utilized BERT to improve search quality, ensuring better understanding and matching of search queries.

Textual Analysis

BERTology enables in-depth textual analysis, leading to more accurate sentiment analysis, text classification, and more.

Voice Assistants

BERTology can be used to improve the performance of voice assistants like Siri, Alexa, and Google Assistant, leading to higher-quality user interaction.

AI Chatbots

AI chatbots can greatly benefit from BERTology for better comprehension of human language and for delivering appropriate responses.

HOW to Understand and Implement BERTology?

BERTology is a vast field that requires specific knowledge and an understanding of how to implement it in various applications.

Starting with the Basics

Understand the basics of NLP, AI, and the theory behind BERT before diving into BERTology.

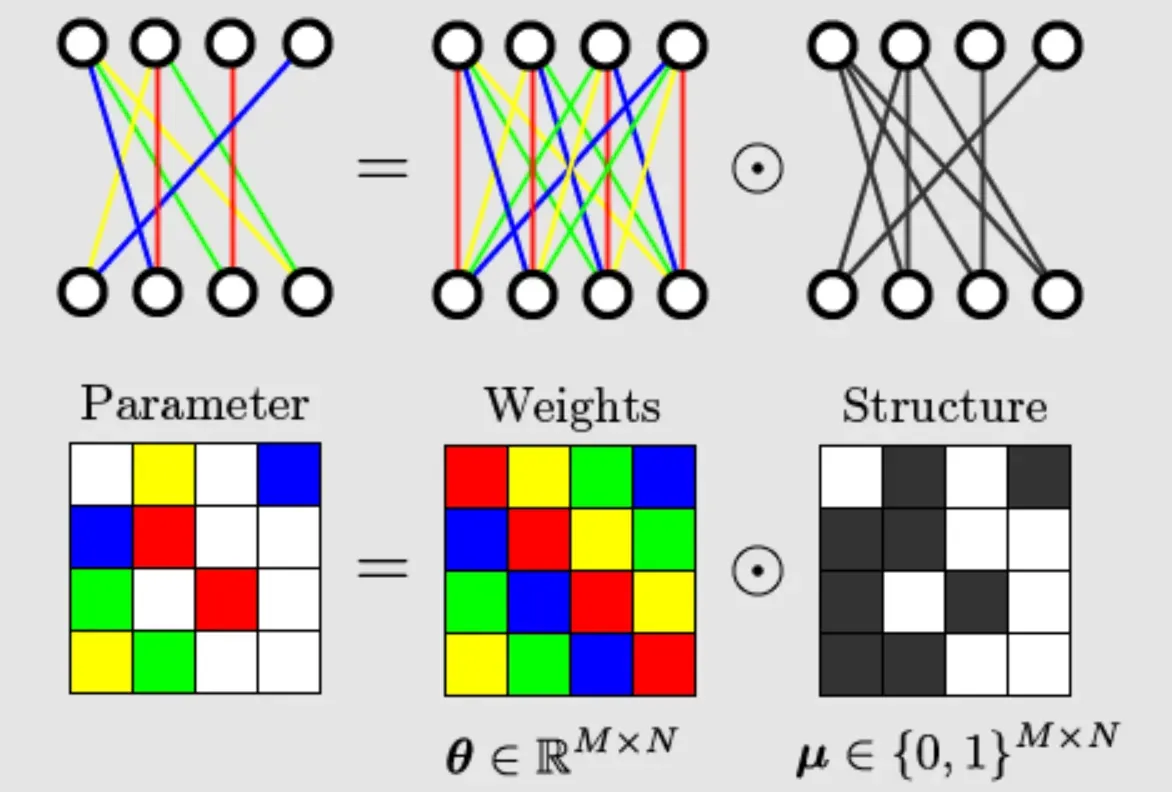

Understanding BERT

Get a grip on the BERT paper, the algorithms used, and its architecture. Online tutorials, forums, and scholarly articles are good starting points.

Using Pre-Trained BERT Models

Leverage pre-trained BERT models available in TensorFlow and PyTorch for hands-on experience.
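As a starting point for hands-on work, the sketch below loads a pre-trained BERT checkpoint and extracts a sentence vector. It assumes the Hugging Face `transformers` library with a PyTorch backend and the `bert-base-uncased` checkpoint; neither is prescribed by this article, and other toolkits would work as well. The helper name `cls_embedding` is hypothetical.

```python
MODEL_NAME = "bert-base-uncased"  # assumption: any BERT checkpoint on the Hugging Face Hub would do


def cls_embedding(sentence, model_name=MODEL_NAME):
    """Return the final-layer [CLS] vector for one sentence as a list of floats."""
    # Imported lazily so that defining this function needs no heavy dependencies.
    import torch
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModel.from_pretrained(model_name)
    model.eval()  # disable dropout for deterministic inference

    # Tokenize and truncate to BERT's 512-token limit.
    inputs = tokenizer(sentence, return_tensors="pt", truncation=True, max_length=512)
    with torch.no_grad():
        outputs = model(**inputs)

    # last_hidden_state has shape (batch, tokens, hidden); position 0 is [CLS].
    return outputs.last_hidden_state[0, 0].tolist()
```

Calling `cls_embedding("BERT reads text in both directions.")` downloads the weights on first use and, for `bert-base-uncased`, returns a vector with 768 entries.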

Fine-Tuning BERT Models

Learn how to fine-tune BERT models to suit your specific language understanding task.

Best Practices For BERTology

Let's delve into the most essential best practices you should follow when working with BERTology.

Understanding the Essence of BERTology

Before diving into complex implementations, ensure a solid understanding of BERT's structure, purpose, and inner workings. It's best to thoroughly review the original BERT paper and related scholarly articles.

Choosing the Right BERT Model

Recognize that one size doesn't fit all. Choose a model that's suitable for your specific NLP task, dataset size, and computing resources. The original BERT release comes in two sizes, BERT Base (about 110 million parameters) and BERT Large (about 340 million parameters), and both are good starting points.

Leveraging Pre-Trained BERT Models

To save time and resources, consider using pre-trained BERT models. They've been trained on vast datasets and can be fine-tuned on your specific task with a much smaller dataset.

Fine-Tuning BERT Thoughtfully

In most applications, it's beneficial to fine-tune the complete BERT model on your particular task rather than freezing layers. Avoid overfitting by using a smaller learning rate and early stopping.
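Early stopping is easy to get subtly wrong, so here is a minimal, framework-free sketch of the logic. The class name `EarlyStopping`, the `patience` and `min_delta` parameters, and the validation losses are all illustrative assumptions. The learning rates listed are the ones the BERT paper suggests trying for fine-tuning.

```python
# Fine-tuning learning rates suggested in the BERT paper (with Adam).
CANDIDATE_LEARNING_RATES = (5e-5, 3e-5, 2e-5)


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` evaluations."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.bad_evals = 0

    def should_stop(self, val_loss):
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss  # new best: reset the counter
            self.bad_evals = 0
        else:
            self.bad_evals += 1        # no improvement this round
        return self.bad_evals >= self.patience


def epochs_run(val_losses, patience=2):
    """Return how many epochs would run before early stopping triggers."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses, start=1):
        if stopper.should_stop(loss):
            return epoch
    return len(val_losses)


# Hypothetical validation losses: improvement stalls after epoch 2.
stopped_at = epochs_run([0.60, 0.45, 0.47, 0.50, 0.40], patience=2)
```

In a real fine-tuning loop you would also save the model whenever `best_loss` improves, so the checkpoint you keep is the best one rather than the last one.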

Balancing between Speed and Accuracy

BERT models, especially large ones, can be resource-intensive. You'll need to strike a balance between speed and performance, which could entail using model variations like DistilBERT or MobileBERT.
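One rough way to reason about this trade-off is to start from a size budget. The sketch below picks the largest variant that fits a given parameter budget. The parameter counts are approximate figures from the respective papers, and using parameter count as a stand-in for speed and memory is a simplifying assumption; actual latency depends on hardware, sequence length, and batch size. The helper `largest_model_within` is hypothetical.

```python
# Approximate parameter counts in millions, as reported in the respective papers.
MODEL_SIZES_M = {
    "MobileBERT": 25,
    "DistilBERT": 66,
    "BERT Base": 110,
    "BERT Large": 340,
}


def largest_model_within(budget_millions):
    """Return the largest listed model with at most `budget_millions` parameters, or None."""
    candidates = {
        name: size for name, size in MODEL_SIZES_M.items() if size <= budget_millions
    }
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


choice = largest_model_within(100)  # budget of roughly 100M parameters
```

A budget like this only narrows the field. The final choice should still be validated by measuring accuracy and latency on your own task.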

Optimizing Input Length for BERT

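BERT accepts a limited number of tokens per input (512 for the original models), and every sequence in a batch is padded to the same length. It therefore pays to combine shorter sentences and clip longer ones intelligently, retaining the most meaningful information.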
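The packing and clipping described above can be sketched in a few lines. This example greedily packs already-tokenized sentences into sequences of at most `max_len` tokens and clips overlong sentences by keeping their beginning and end. Treating the head and tail as "the most meaningful" parts is an assumption that suits some document types and not others. The function names and the `head_fraction` parameter are illustrative.

```python
def truncate_head_tail(tokens, max_tokens, head_fraction=0.25):
    """Clip a token list to max_tokens, keeping its start and its end."""
    tokens = list(tokens)
    if len(tokens) <= max_tokens:
        return tokens
    head = int(max_tokens * head_fraction)
    tail = max_tokens - head
    # Slice from an explicit index so that tail == 0 yields an empty list.
    return tokens[:head] + tokens[len(tokens) - tail:]


def pack_sentences(sentences, max_len=512):
    """Greedily pack tokenized sentences into sequences of at most max_len tokens.

    Each packed sequence is wrapped in [CLS] ... [SEP], which count toward max_len.
    """
    budget = max_len - 2  # reserve room for [CLS] and [SEP]
    packed, current = [], []
    for sent in sentences:
        sent = truncate_head_tail(sent, budget)
        if current and len(current) + len(sent) > budget:
            packed.append(["[CLS]"] + current + ["[SEP]"])
            current = []
        current = current + sent
    if current:
        packed.append(["[CLS]"] + current + ["[SEP]"])
    return packed


# Toy example with a tiny limit so the behaviour is easy to see.
toy = [["a"] * 3, ["b"] * 3, ["c"] * 10]
sequences = pack_sentences(toy, max_len=8)
```

With `max_len=8`, the two short sentences share one sequence and the long one is clipped to fit in a second.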

Creating Efficient Training Cycles

Training BERT models requires significant computational power. Implement efficient training cycles using techniques like gradient accumulation, which processes a large batch as several smaller ones to reduce memory usage.
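To show why gradient accumulation is safe to use, here is a small framework-free sketch. It uses a one-parameter model (`y ≈ w * x`) rather than BERT, which is an assumption made purely to keep the arithmetic visible. Summing the gradients of several micro-batches, each weighted by its share of the data, gives the same update as one large batch.

```python
import math


def grad_mse(w, batch):
    """Gradient of mean squared error for y ≈ w * x, averaged over the batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def full_batch_step(w, data, lr):
    """One gradient-descent step using the whole batch at once."""
    return w - lr * grad_mse(w, data)


def accumulated_step(w, data, micro_batch_size, lr):
    """One step that accumulates gradients over micro-batches before updating."""
    accum = 0.0
    for start in range(0, len(data), micro_batch_size):
        chunk = data[start:start + micro_batch_size]
        # Weight each micro-batch gradient by its share of the full batch.
        accum += grad_mse(w, chunk) * len(chunk) / len(data)
    return w - lr * accum  # single parameter update after all micro-batches


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
w_full = full_batch_step(0.0, data, lr=0.01)
w_accum = accumulated_step(0.0, data, micro_batch_size=2, lr=0.01)
same_update = math.isclose(w_full, w_accum)
```

Only one micro-batch needs to be in memory at a time, which is what makes larger effective batch sizes possible on limited hardware. In deep learning frameworks the same idea is usually expressed by dividing each micro-batch loss by the number of accumulation steps and calling the optimizer once per group.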

Staying Updated with BERTology Advancements

BERTology is a dynamic field with ongoing research and advancements. Stay updated with the latest findings, model variations, and tools to make the most out of BERTology. Consider joining AI and data science communities, reading AI publications, or attending relevant webinars and conferences.

Frequently Asked Questions (FAQs)

What is BERTology?

BERTology is the study and analysis of BERT, a pre-trained language model developed by Google, with the aim of understanding its architecture and mechanisms and improving its performance.

How does BERTology contribute to NLP tasks?

BERTology explores BERT's attention mechanisms and training techniques, and it improves language understanding tasks by building on BERT's pre-trained representations.

What are the objectives of BERTology research?

The objectives of BERTology are to improve BERT's performance, gain insights into language understanding, and develop innovative approaches for NLP tasks.

What insights can BERTology provide?

BERTology research sheds light on BERT's capabilities and limitations, and on how to extract meaningful representations from text data using pre-training techniques.

How does BERTology impact NLP advancements?

By studying BERT, BERTology leads to advancements in NLP tasks by developing more effective models, extracting better contextualized word embeddings, and improving language understanding.