“Wait… let me ChatGPT that.” It’s become a reflex. From random doubts to serious questions, we’re turning to AI before anything else.

And now, that habit is entering healthcare.

With modern healthcare using AI to draft notes and simplify medical information, ChatGPT has become the go-to tool for quick answers and everyday doubts.

But here’s the real question: should it be trusted with health decisions?

In this guide, you’ll learn about ChatGPT in healthcare in clear, practical terms. It covers real use cases, key benefits, major limitations, and ways to use it safely.

What ChatGPT Means in Healthcare Context: A Simple Overview

ChatGPT in healthcare refers to using a general-purpose AI language model to support everyday medical and operational tasks.

The model is adapted for healthcare workflows like documentation, research summaries, and patient communication. It helps process information faster and present it clearly.

There are two main ways it is used:

Clear Boundary: ChatGPT is not a clinical system. It does NOT diagnose, treat, or replace medical judgment.

However, users often mistake newer, health-focused experiences like ChatGPT Health for clinical-grade medical tools. Here’s how to understand what it actually is.

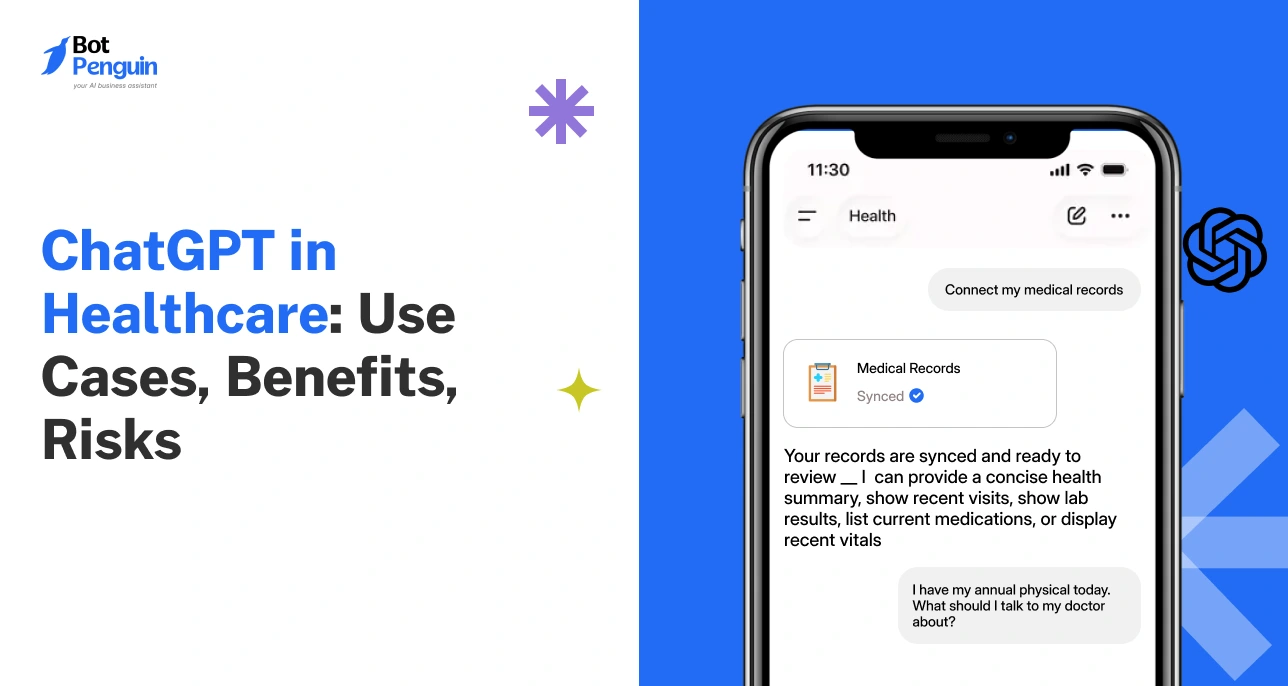

What is ChatGPT Health?

ChatGPT Health is OpenAI’s dedicated health experience, built with layered encryption, isolated health conversations, and direct integrations with medical records and wellness apps like Apple Health, Function, and MyFitnessPal.

Developed alongside 260+ physicians across 60 countries and evaluated using OpenAI’s own HealthBench framework, it helps users understand lab results, prepare for appointments, and navigate health information, without replacing clinical care.

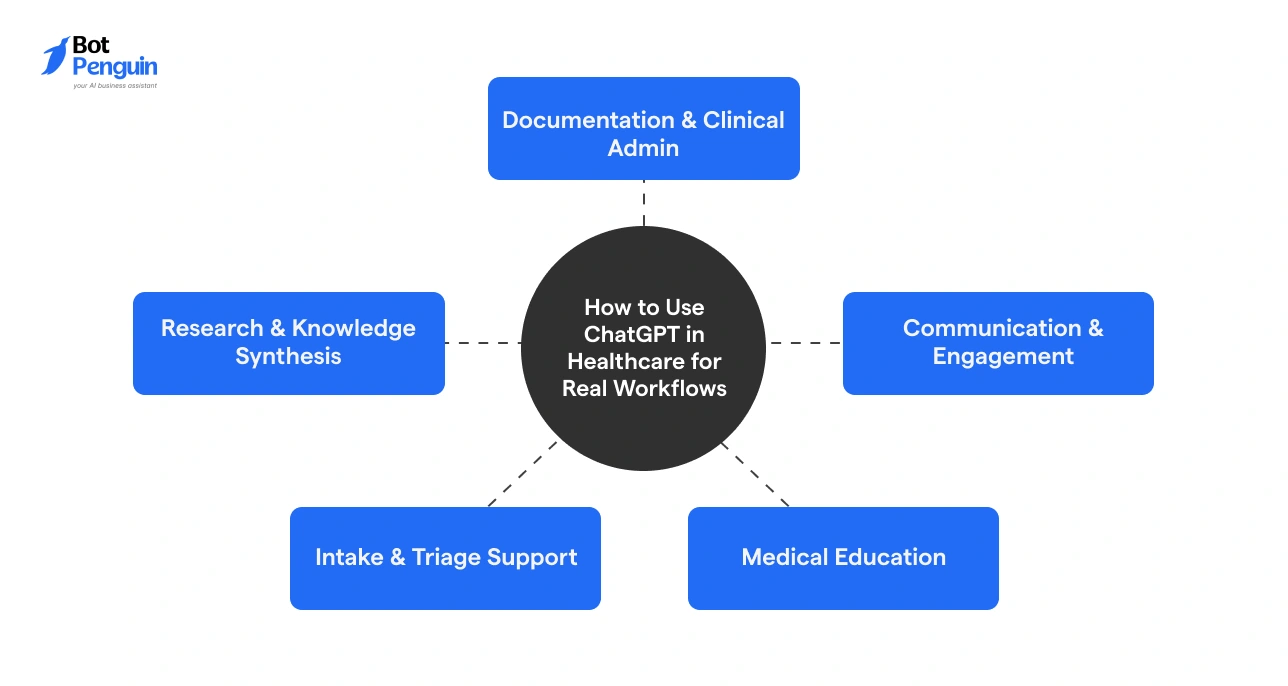

How to Use ChatGPT in Healthcare for Real Workflows

ChatGPT applications in healthcare are most effective at the task level: not as a broad “AI tool,” but as an assistant for specific workflows.

Here are some clever ways you can use it across daily tasks:

Documentation & Clinical Admin

The American Health Association (AHA) states that physicians spend nearly 49% of their time on EHRs and administrative desk work, highlighting how documentation dominates their daily workload.

ChatGPT helps by enabling clinicians to draft SOAP notes, discharge summaries, and prior authorization requests. Instead of writing from scratch, they refine AI-generated drafts, lowering administrative burden.

Research & Knowledge Synthesis

Medical professionals use it to summarize journals, compare treatment approaches, and structure research drafts.

For instance, a researcher can input multiple studies and get a concise comparison of outcomes, saving hours of manual review.

Communication & Engagement

ChatGPT helps simplify complex medical language into patient-friendly explanations.

With a prompt as simple as, “Tell me like I am a child...”, you can break down diagnoses, treatments, or reports into clear, easy-to-understand terms.

It generates FAQs, follow-up messages, and even translates instructions into multiple languages.

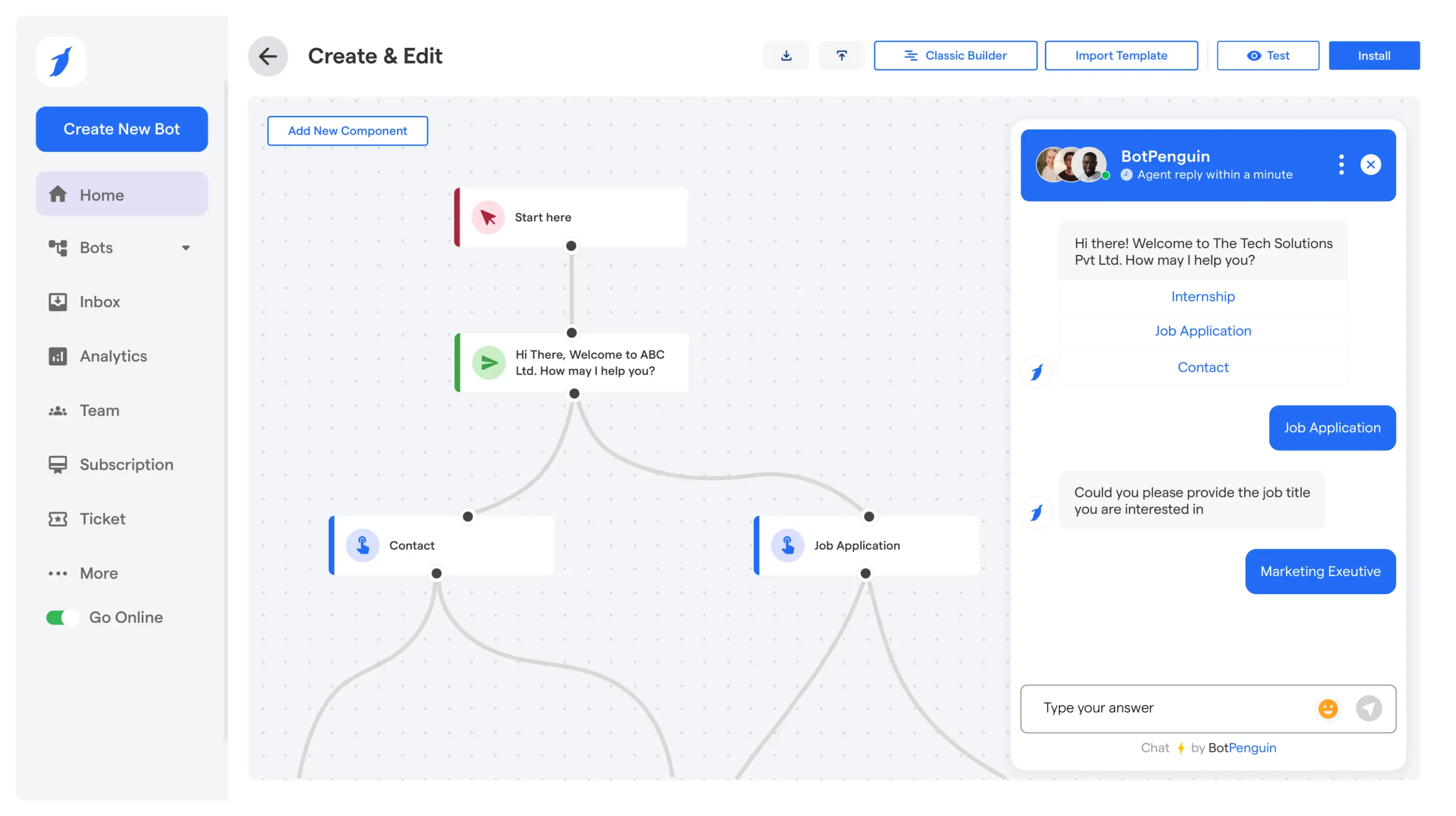

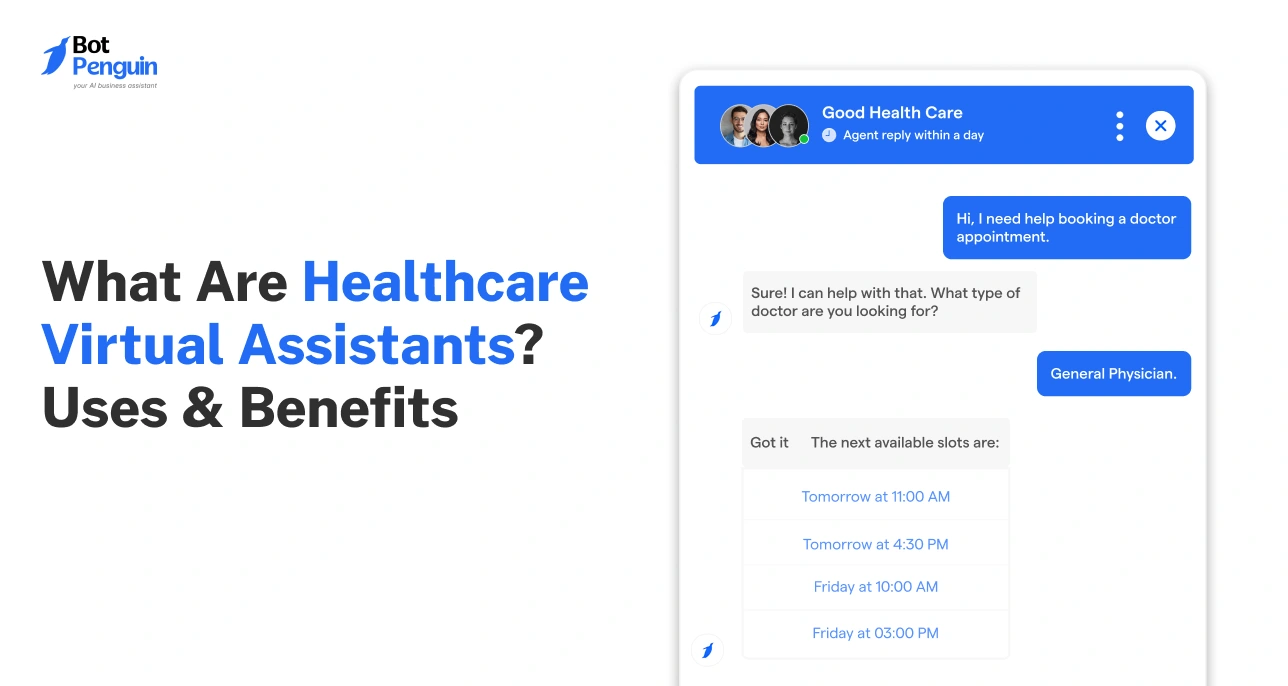

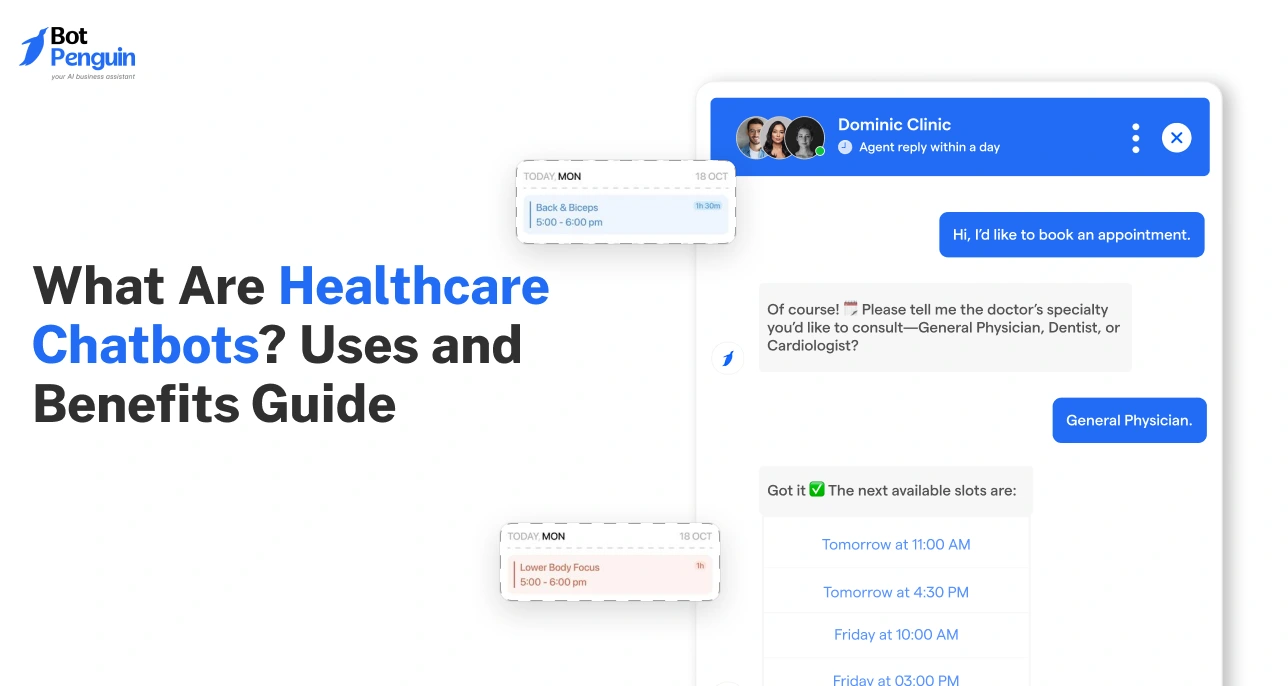

If you’re a healthcare provider looking to take this automation further, platforms like BotPenguin can build on ChatGPT’s capabilities, offering HIPAA-compliant, AI-driven chatbots that streamline appointment scheduling, reminders, and patient inquiries for a patient-centric experience.

Intake & Triage Support

ChatGPT can organize symptoms, structure patient history, and assist in intake forms.

Patients can describe issues in plain language, and the LLM formats it into structured inputs for clinicians, without making diagnoses.
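To make the intake idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a production intake system: the field names, the `build_intake_prompt` and `parse_intake_response` helpers, and the `IntakeRecord` type are all hypothetical, and the call to the model itself is deliberately left out. The point is that the prompt asks only for structure, and the response is rejected if it contains anything beyond the agreed fields.

```python
import json
from dataclasses import dataclass

# Hypothetical intake fields; a real form would be defined by the clinical team.
REQUIRED_KEYS = {"chief_complaint", "duration", "symptoms", "medications"}


def build_intake_prompt(patient_text: str) -> str:
    """Build a prompt that asks for structure only, never a diagnosis."""
    return (
        "Reformat the patient's description into JSON with exactly these keys: "
        + ", ".join(sorted(REQUIRED_KEYS))
        + ". Use only what the patient wrote. "
        + "Do not suggest a diagnosis or treatment.\n\n"
        + "Patient description:\n"
        + patient_text
    )


@dataclass
class IntakeRecord:
    chief_complaint: str
    duration: str
    symptoms: list[str]
    medications: list[str]


def parse_intake_response(raw: str) -> IntakeRecord:
    """Parse the model's JSON reply and reject missing or unexpected fields."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    extra = set(data) - REQUIRED_KEYS
    if missing or extra:
        raise ValueError(
            f"Unexpected response shape: missing={sorted(missing)}, extra={sorted(extra)}"
        )
    return IntakeRecord(**data)
```

A reply that adds a field such as "diagnosis" fails validation instead of reaching the clinician, which keeps the tool on the formatting side of the line.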

Medical Education

Students and professionals use it for concept explanations, case simulations, and exam prep.

It acts like an on-demand tutor, breaking down complex topics like the “renin-angiotensin-aldosterone system” or the “mechanism of action of beta-blockers” into simple steps, reducing study time for aspiring medical professionals.

From the clinic to the classroom, ChatGPT fits where the workflow demands it, not as a replacement, but as the assistant that never clocks out.

Do Doctors Use ChatGPT? Here’s the Reality

Yes, but in a limited and controlled way.

Many clinicians use it to speed up routine work like writing notes, summarizing studies, and drafting patient messages. It is valued for saving time and improving clarity in everyday tasks.

However, it is NOT trusted for diagnosis, treatment decisions, or unsupervised clinical judgment.

Its use depends heavily on hospital policies, data privacy rules, and internal approvals.

ChatGPT Healthcare Use Cases by Role (Who Uses It for What)

Where the previous sections covered general workflows, this one shows how different users apply ChatGPT in real scenarios.

ChatGPT use cases in healthcare change based on daily responsibilities: clinical, operational, or personal.

Points to Remember:

- Usage depends on role and workflow, not a blanket approach.

- Most applications center around communication, documentation, and clarity.

- It supports tasks but never replaces expertise or clinical decision-making.

- Patients should use it to understand, not to diagnose or self-treat.

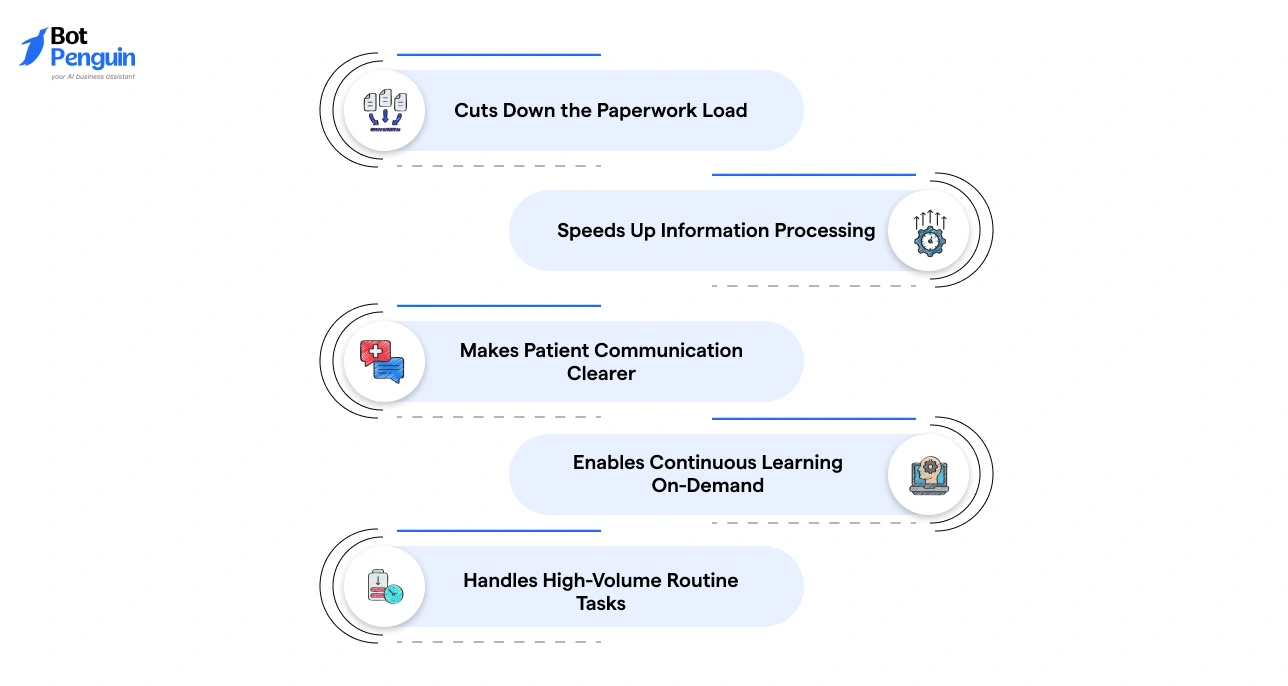

Real Benefits of ChatGPT in Healthcare (Beyond the Hype)

ChatGPT's real value in healthcare is not in replacing systems; it is in removing friction from the ones already in place.

Here is where it genuinely delivers:

- Cuts Down the Paperwork Load: ChatGPT lowers administrative burden by automatically drafting operational notes, discharge summaries, and authorization requests.

- Speeds Up Information Processing: It quickly summarizes medical literature, reports, and patient data, improving decision support workflows.

- Makes Patient Communication Clearer: By simplifying complex medical terms into easy language, it helps patients better understand diagnoses, instructions, and next steps.

- Enables Continuous Learning On-Demand: Medical professionals use it to refresh concepts, explore cases, and stay updated. It acts as a quick learning aid during busy schedules.

- Handles High-Volume Routine Tasks: From FAQs to follow-ups, ChatGPT scales repetitive interactions efficiently. This supports teams managing large patient volumes without compromising response quality.

These benefits compound when ChatGPT is used consistently within defined workflows.

ChatGPT vs Healthcare Chatbots vs AI Agents: Understanding the Differences

ChatGPT is only one part of a larger AI landscape.

At the same time, not all AI tools in healthcare work the same way. ChatGPT, healthcare chatbots, and AI agents (the major AI categories in healthcare) are designed to serve different roles and are not interchangeable.

The table below highlights these distinctions:

Key Differences:

- Flexibility vs Structure: ChatGPT is flexible and handles open-ended tasks. Chatbots follow fixed flows. AI agents go a step further by executing actions.

- Control vs Autonomy: ChatGPT and chatbots assist users. AI agents can act independently within defined limits.

- Use Case Fit: Use ChatGPT for content and communication, chatbots for predictable interactions, and AI agents for workflow automation.

In short, ChatGPT supports thinking and communication, chatbots guide interactions, and AI agents drive execution.

Platforms like BotPenguin offer specialized healthcare chatbots that integrate seamlessly into your workflow. By focusing on automated, task-specific communication, BotPenguin helps streamline appointment scheduling, patient reminders, and routine follow-ups.

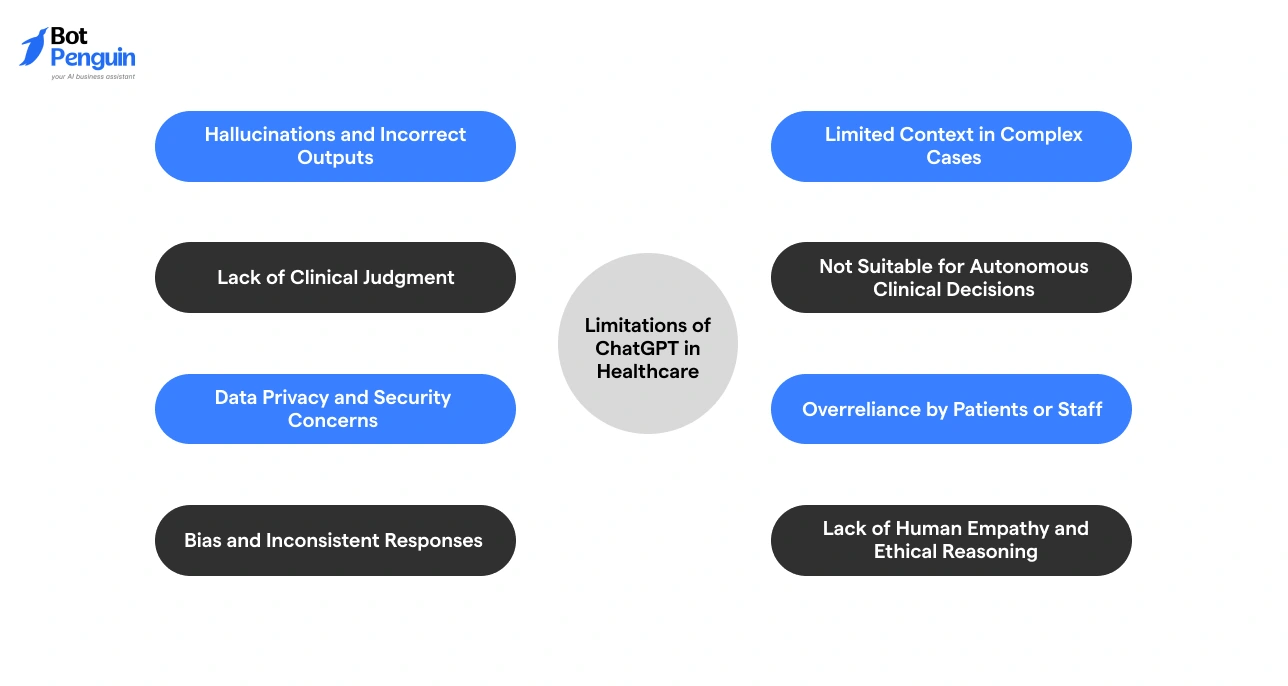

Limitations of ChatGPT in Healthcare & How to Overcome Them

In healthcare, unchecked ChatGPT use can cause real harm. Understanding where it fails is just as important as knowing where it works.

Hallucinations and Incorrect Outputs

ChatGPT can generate confident but incorrect or fabricated information, like citing a non-existent drug dosage or misquoting clinical guidelines.

Always verify outputs with trusted medical sources before using them in any workflow.

Lack of Clinical Judgment

It cannot apply real-world clinical reasoning or context like a trained professional.

Use it only as a support tool, not for making medical decisions.

Data Privacy and Security Concerns

Sharing sensitive patient data can lead to compliance and privacy risks. For US healthcare professionals, non-compliance with HIPAA invites serious legal and financial penalties.

It’s critical to avoid inputting identifiable information unless using secure, approved systems.
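As a rough illustration of stripping obvious identifiers before text leaves your environment, here is a small Python sketch. The patterns and the `redact` helper are assumptions made for this example. Regex matching of this kind catches only a few easily recognized formats and is not a substitute for formal de-identification or an approved, compliant system.

```python
import re

# Illustrative patterns only; real de-identification covers many more identifier types.
PATTERNS = [
    ("[EMAIL]", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("[SSN]", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("[PHONE]", re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b")),
    ("[DATE]", re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")),
]


def redact(text: str) -> str:
    """Replace each matched identifier with a fixed placeholder."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


note = "Pt Jane, DOB 04/12/1985, call 555-123-4567 or jane.doe@example.com. SSN 123-45-6789."
print(redact(note))
# Pt Jane, DOB [DATE], call [PHONE] or [EMAIL]. SSN [SSN].
```

Notice that the name "Jane" passes through untouched. Names, addresses, and free-text details need more than pattern matching, which is why the safer default is to keep identifiable data out entirely.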

Bias and Inconsistent Responses

Outputs may reflect biases or vary in accuracy depending on prompts.

Cross-check responses and standardize usage with clear guidelines.

Limited Context in Complex Cases

It may miss nuances in multi-layered or critical medical scenarios, like a patient with overlapping conditions, conflicting medications, and unclear history.

It’s crucial to rely on human expertise for complex case evaluation.

Not Suitable for Autonomous Clinical Decisions

ChatGPT cannot independently diagnose or treat patients. Never trust it for final treatment calls or emergency clinical decisions.

Keep human oversight mandatory in all clinical workflows.

Overreliance by Patients or Staff

Users may trust outputs without validation because AI responses sound authoritative, even when they are wrong.

Encourage critical review and second opinions for all important information.

Lack of Human Empathy and Ethical Reasoning

It cannot replace emotional understanding or ethical judgment in care, like end-of-life conversations, mental health support, or grief counseling.

Ensure human interaction remains central to patient care.

Every ChatGPT limitation above has a workaround, and that workaround is mostly a trained professional in the loop.

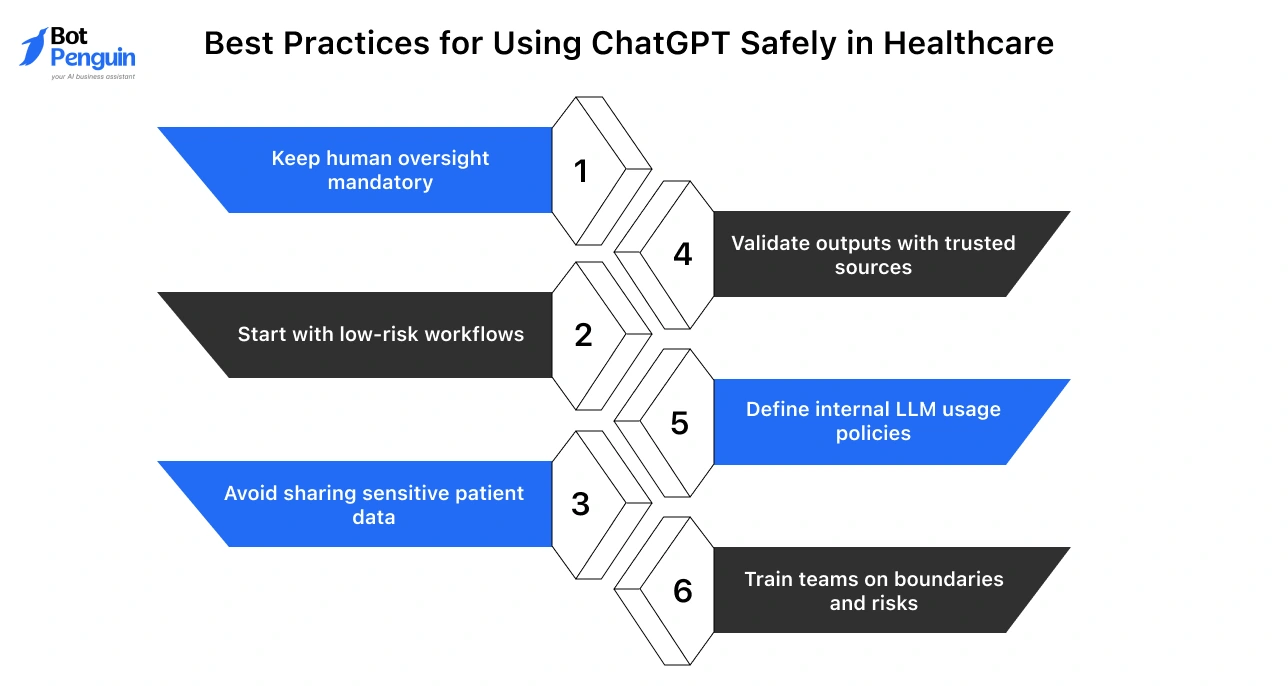

Best Practices for Using ChatGPT Safely in Healthcare

Using ChatGPT in healthcare requires clear guardrails. The focus should be on safe, controlled, and responsible usage, not just speed or convenience.

- Keep human oversight mandatory: Always review and validate outputs before using them in any clinical or patient-facing context.

- Start with low-risk workflows: Begin with admin tasks like documentation, FAQs, and summaries before moving to more sensitive use cases.

- Avoid sharing sensitive patient data: Do not input identifiable or confidential information unless using secure, compliant systems.

- Validate outputs with trusted sources: Cross-check responses with clinical guidelines, verified databases, or expert review.

- Define internal LLM usage policies: Establish clear rules on where, when, and how ChatGPT can be used within the organization.

- Train teams on boundaries and risks: Ensure staff understand limitations, risks, and appropriate use cases.

Safe adoption ultimately depends on combining AI efficiency with human judgment and strong governance.
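For teams that want to enforce these guardrails in software, the following Python sketch shows one possible shape. Everything in it is hypothetical: the `ALLOWED_TASKS` list stands in for an organization's own usage policy, and `Draft`, `create_draft`, `approve`, and `release` are names invented for this example. It simply shows that an AI draft can be blocked by policy up front and cannot be released until a named person has reviewed it.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: tasks an organization has approved for AI drafting.
ALLOWED_TASKS = {"discharge_summary", "patient_faq", "research_summary"}


@dataclass
class Draft:
    task: str
    text: str
    reviewed_by: Optional[str] = None  # set only after a human signs off


def create_draft(task: str, text: str) -> Draft:
    """Accept an AI-generated draft only for tasks the policy allows."""
    if task not in ALLOWED_TASKS:
        raise PermissionError(f"Task '{task}' is not approved for AI drafting")
    return Draft(task=task, text=text)


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record the name of the person who reviewed the draft."""
    if not reviewer.strip():
        raise ValueError("A named reviewer is required")
    draft.reviewed_by = reviewer
    return draft


def release(draft: Draft) -> str:
    """Return the text for use only if a human has reviewed it."""
    if draft.reviewed_by is None:
        raise RuntimeError("Draft has not been reviewed by a human")
    return draft.text
```

Starting with a short allow-list mirrors the advice to begin with low-risk workflows: new task types are added only after the organization decides they are appropriate.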

Can ChatGPT Give Medical Advice or Replace Healthcare Professionals?

ChatGPT can support healthcare, but it cannot replace medical expertise.

It is useful for explaining conditions, simplifying medical information, and helping users prepare for doctor visits.

Here’s what ChatGPT can and cannot do:

Will ChatGPT Replace Healthcare Professionals?

No. Doctors, nurses, and care teams remain essential for diagnosis, treatment decisions, ethical judgment, and building patient trust.

AI can support these processes, but it cannot take responsibility for them.

The Future of ChatGPT in Healthcare: What’s Next

ChatGPT’s future in healthcare is centered on becoming a more integrated, assistive tool, not an autonomous decision-maker.

With advancements in multimodal capabilities and deeper workflow integration, it will support tasks across record-keeping, communication, and knowledge management more effectively.

At the same time, increased regulation will shape how it is safely deployed in clinical environments. Its value lies in improving efficiency and clarity, but risks remain, especially misuse for medical decisions and poor data handling.

Long-term success will depend on controlled usage, strong human oversight, and compliance-first implementation across healthcare systems.

Frequently Asked Questions (FAQs)

Is ChatGPT used in healthcare?

Yes, ChatGPT is used in healthcare for documentation, research summaries, patient communication, and administrative workflows, but always under human supervision.

Do doctors use ChatGPT in real practice?

Yes, doctors use ChatGPT selectively for drafting notes, summarizing research, and patient communication, not for diagnosis or treatment decisions.

Can ChatGPT give medical advice?

ChatGPT can explain conditions and provide general health information, but it cannot diagnose, prescribe treatment, or replace professional medical advice.

How is ChatGPT used by medical professionals?

Medical professionals use ChatGPT for clinical documentation, research support, patient communication, and training, primarily in low-risk, non-decision-making workflows.

Is ChatGPT safe for healthcare use?

ChatGPT can be used safely in healthcare when safeguards are in place, such as human review, data privacy controls, and validation against trusted medical sources.

Is ChatGPT HIPAA compliant?

ChatGPT itself is not inherently HIPAA compliant; compliance depends on secure, enterprise-grade deployment with proper data handling, encryption, and access controls.

Will ChatGPT replace doctors in the future?

No, ChatGPT will not replace doctors. It supports workflows, but clinical decisions, patient care, and ethical judgment require human expertise.