Introduction

Natural Language Processing (NLP) has revolutionized the way humans interact with machines, and at the forefront of this transformation is BERT.

BERT is a language model developed by Google that set a new state of the art when it was released. Its bidirectional architecture and deep learning capabilities have paved the way for applications across many domains, ranging from text summarization and sentiment analysis to machine translation and question answering.

The impact of BERT on the NLP landscape is hard to overstate. In the paper that introduced the model, researchers at Google AI Language reported state-of-the-art performance on 11 NLP tasks, surpassing previous benchmarks and setting new standards in the field (Source: Devlin et al., "BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding," 2019).

From powering virtual assistants and chatbots to enhancing content recommendation systems and improving information retrieval, BERT's applications span a wide range of industries and use cases.

As businesses strive to provide more personalized and seamless user experiences, the demand for advanced NLP solutions continues to soar, making BERT a game-changer in the ever-evolving digital landscape.

With its remarkable capabilities and wide-ranging applications, BERT is poised to shape the future of NLP, unlocking new possibilities and driving innovation across various sectors.

Let's discover how BERT LLM can enhance NLP applications with its impressive language comprehension abilities.

What is BERT LLM?

BERT stands for Bidirectional Encoder Representations from Transformers. It's a neural network-based language model introduced by Google in 2018 for natural language processing (NLP) tasks.

BERT LLM revolutionized NLP by pre-training on large corpora of text to learn contextual representations of words. Unlike previous models that relied on unidirectional context, BERT LLM considers both the left and the right context of every word, enhancing its understanding of language nuances.

BERT LLM has been instrumental in various NLP applications, including sentiment analysis, question answering, named entity recognition, and machine translation. Its architecture, based on the Transformer model, allows for efficient training and scalability.

By fine-tuning on specific tasks with relatively small datasets, BERT LLM achieves state-of-the-art performance, making it a cornerstone in modern NLP research and applications.
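
The difference between unidirectional and bidirectional context can be pictured as the set of positions each word is allowed to look at. The snippet below is a toy sketch of that idea only, not BERT's actual implementation; the function name `visible_positions` and the example sentence are made up for illustration.

```python
def visible_positions(seq_len, bidirectional):
    """Return, for each position, the positions it may attend to."""
    if bidirectional:
        # BERT-style encoder: every token sees the whole sequence.
        return [list(range(seq_len)) for _ in range(seq_len)]
    # Left-to-right model: each token sees itself and earlier tokens only.
    return [list(range(i + 1)) for i in range(seq_len)]


tokens = ["the", "bank", "of", "the", "river"]
uni = visible_positions(len(tokens), bidirectional=False)
bi = visible_positions(len(tokens), bidirectional=True)

# Context available when encoding "bank" (position 1).
print([tokens[j] for j in uni[1]])  # left context only
print([tokens[j] for j in bi[1]])   # left and right context
```

With only the left context, "bank" is ambiguous; with the right context as well, the word "river" is available to disambiguate it.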

7 Major Applications of BERT LLM in the Field of NLP

In this section, you’ll find seven major applications of BERT LLM in the field of NLP.

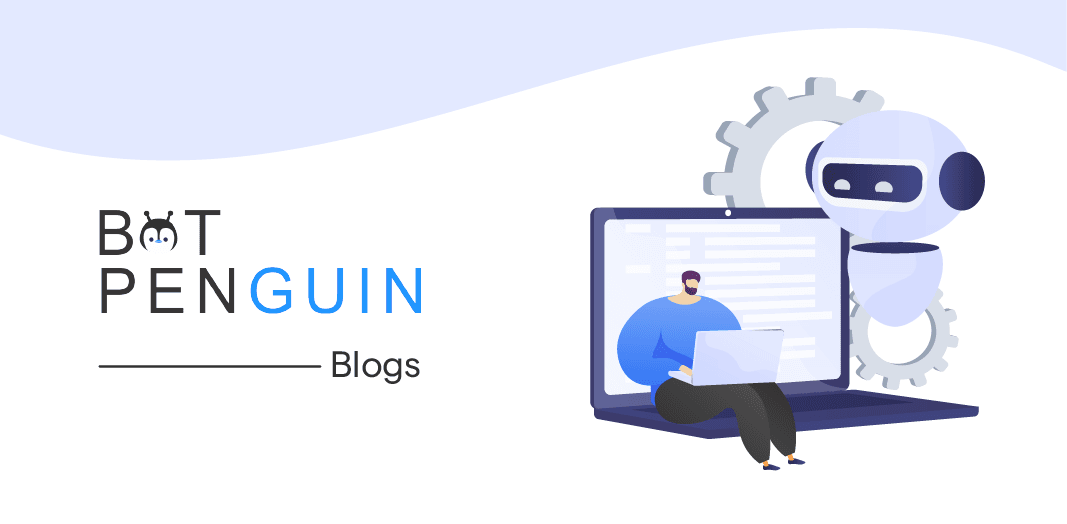

Question Answering (QA)

BERT excels at understanding context and relationships between words, making it ideal for answering questions about a given text passage.

For example, it can be used to power virtual assistants or chatbots that can answer your questions directly or search for relevant information online.

In the field of NLP, Question Answering (QA) has been transformed by the introduction of BERT LLM. By leveraging its language comprehension abilities, BERT LLM models the context and relationships between words, enabling it to give accurate answers to queries about a given text passage. In the usual fine-tuning setup, the model reads the question together with the passage and predicts where the answer span starts and ends.

With BERT LLM, virtual assistants and chatbots can be powered to answer questions directly or search for the most relevant information online.

The model's deep understanding of language nuances allows it to accurately interpret queries and retrieve the most appropriate answers.

This has greatly improved user experiences by providing instant and accurate responses.
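
Extractive QA with BERT is commonly framed as scoring every token as a possible answer start and a possible answer end, then choosing the best valid pair. The sketch below shows only that final selection step, in plain Python. The scores are made-up numbers standing in for a fine-tuned model's output, and `best_answer_span` and `max_answer_len` are hypothetical names, not part of any library.

```python
def best_answer_span(start_scores, end_scores, max_answer_len=30):
    """Pick the (start, end) token pair with the highest combined score."""
    best = None
    best_score = float("-inf")
    for start, s_score in enumerate(start_scores):
        # The end token may not precede the start token.
        last = min(start + max_answer_len, len(end_scores))
        for end in range(start, last):
            score = s_score + end_scores[end]
            if score > best_score:
                best, best_score = (start, end), score
    return best


passage = ["BERT", "was", "introduced", "by", "Google", "in", "2018", "."]
# Made-up scores standing in for a model answering "Who introduced BERT?".
start_scores = [0.1, 0.0, 0.2, 0.3, 4.0, 0.1, 0.5, 0.0]
end_scores = [0.0, 0.1, 0.1, 0.2, 3.5, 0.3, 1.0, 0.0]

start, end = best_answer_span(start_scores, end_scores)
answer = " ".join(passage[start:end + 1])
print(answer)  # prints "Google"
```

Capping the span length keeps the search cheap and avoids implausibly long answers.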

Sentiment Analysis

BERT LLM's capabilities are not restricted to question answering alone. It can also analyze the sentiment of text, whether it is positive, negative, or neutral.

This is particularly useful in applications where understanding the sentiment of customer reviews, social media posts, or other textual data is crucial.

The use of BERT LLM for sentiment analysis yields remarkable results due to its ability to comprehend the overall meaning and emotional tone of a piece of text.

By considering the broader context and relationships between words, BERT LLM can accurately identify the sentiment expressed in the text.

Applying BERT LLM to sentiment analysis allows businesses to gain valuable insights from customer reviews, social media sentiments, and other textual data sources.

This information can be used to understand customer satisfaction, improve products or services, or identify emerging trends in real-time.

Implementing BERT LLM for sentiment analysis helps businesses make data-driven decisions by providing a comprehensive understanding of customer sentiments. This empowers companies to enhance customer experiences, tailor their strategies, and address any concerns or issues promptly.
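
In a typical setup, a small classification layer on top of BERT produces one raw score (logit) per sentiment class, and a softmax turns those scores into probabilities. The sketch below covers only that last step. The logits are made-up values, and the label order in `LABELS` is an assumption for illustration.

```python
import math

# Assumed label order; a real model defines its own mapping.
LABELS = ["negative", "neutral", "positive"]


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits):
    """Return the most likely label and its probability."""
    probs = softmax(logits)
    idx = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[idx], probs[idx]


# Made-up logits standing in for the classification head's output.
logits = [-1.2, 0.3, 2.9]
label, confidence = classify(logits)
print(label, round(confidence, 3))
```

The probability can serve as a rough confidence signal, for example to route low-confidence reviews to a human.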

Text Summarization

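BERT can help condense large amounts of text into shorter, more concise summaries while preserving the key points and meaning. It is most often applied to extractive summarization, where the model selects the most important sentences from the original text. This is valuable for summarizing news articles, legal documents, or any other lengthy text for quick understanding.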

Text summarization is a crucial tool for managing information overload. BERT LLM's ability to understand language context allows it to identify important information and generate concise summaries.

By condensing lengthy texts, BERT LLM enables users to quickly grasp essential points without having to read the entire document.

Whether in the domain of news, legal, or research, BERT LLM excels at producing coherent summaries that retain the main ideas and key details.

With its capability to capture the substance and nuances of a text, BERT LLM provides valuable aid in navigating and digesting large volumes of information efficiently.
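
One simple way to use BERT for summarization is extractive: embed each sentence, then keep the sentences closest to the document's average embedding. The sketch below assumes the embeddings already exist and uses toy 3-dimensional vectors in their place. The centroid heuristic and the function names are illustrative choices, not a method prescribed by BERT itself.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def extractive_summary(sentences, embeddings, k=1):
    """Keep the k sentences closest to the document centroid, in order."""
    dim = len(embeddings[0])
    centroid = [sum(vec[i] for vec in embeddings) / len(embeddings)
                for i in range(dim)]
    ranked = sorted(range(len(sentences)),
                    key=lambda i: cosine(embeddings[i], centroid),
                    reverse=True)
    keep = sorted(ranked[:k])  # restore original sentence order
    return [sentences[i] for i in keep]


sentences = [
    "The court ruled in favor of the plaintiff.",
    "The hearing began at nine in the morning.",
    "Damages were set at two million dollars.",
]
# Toy vectors standing in for BERT sentence embeddings.
embeddings = [
    [0.9, 0.8, 0.1],
    [0.1, 0.2, 0.9],
    [0.8, 0.9, 0.2],
]

summary = extractive_summary(sentences, embeddings, k=2)
print(summary)
```

Because the output reuses sentences from the source, an extractive summary cannot introduce facts that were not in the original.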

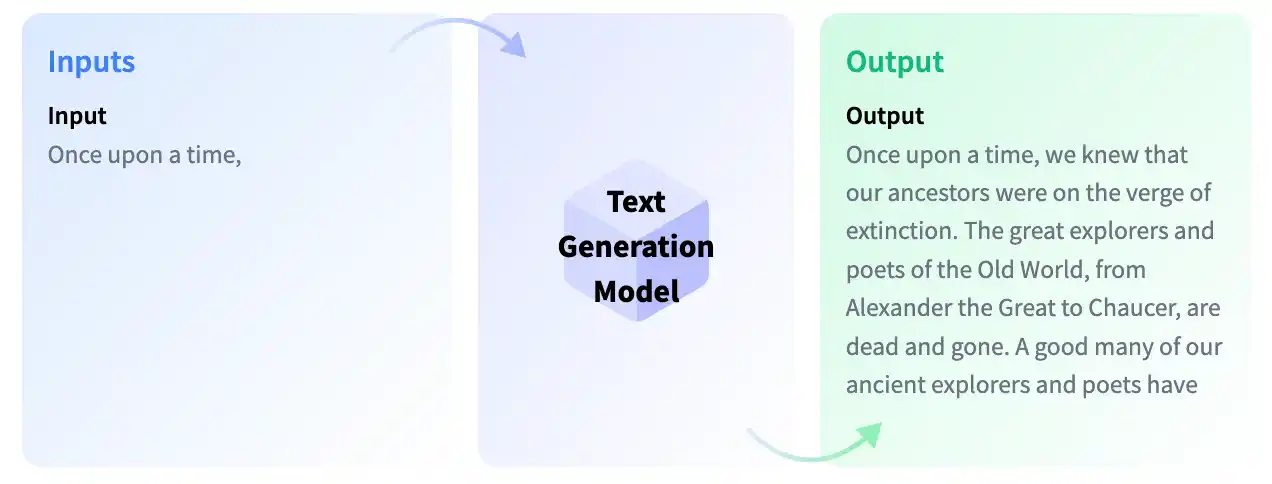

Text Generation

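BERT can support the generation of different creative text formats such as poems, code, scripts, musical pieces, emails, letters, and more. As an encoder-only model, however, it is not built for free-form generation in the way decoder models such as GPT are. It is typically used to fill in masked words, to rank or refine candidate text, or as the encoder in an encoder-decoder system.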

This wide range of applications extends to content creation, marketing, or even generating varied writing styles for specific audiences.

BERT LLM's natural language understanding abilities make it a versatile tool for text generation tasks. By analyzing the patterns and context of given prompts, BERT LLM can generate textual outputs that align with the desired format or style.

This opens up possibilities for personalized content generation, creative writing enhancement, and automatic document generation.

Whether it is crafting engaging marketing materials, composing personalized messages, or automating written content creation, BERT LLM empowers businesses and individuals to generate high-quality, contextually relevant text in a more efficient and streamlined manner.

Machine Translation

Machine translation is a challenging task in NLP. While several methods have been discussed and implemented to improve machine translation, BERT's pre-train-then-fine-tune approach has contributed to advances in this field, typically by initializing or augmenting the encoder of a translation system.

Even for complex languages or nuanced phrases, BERT can be fine-tuned to improve the accuracy and fluency of translated text.

The main advantage of BERT LLM for machine translation lies in its understanding of contextual language. During the translation process, the model aligns the context of a sentence or phrase to equivalent expressions in the target language.

This contextual alignment gives rise to better translations that can capture the nuances of the original text. BERT's fine-tuning approach allows for further flexibility in adapting the model to specific languages or language pairs.

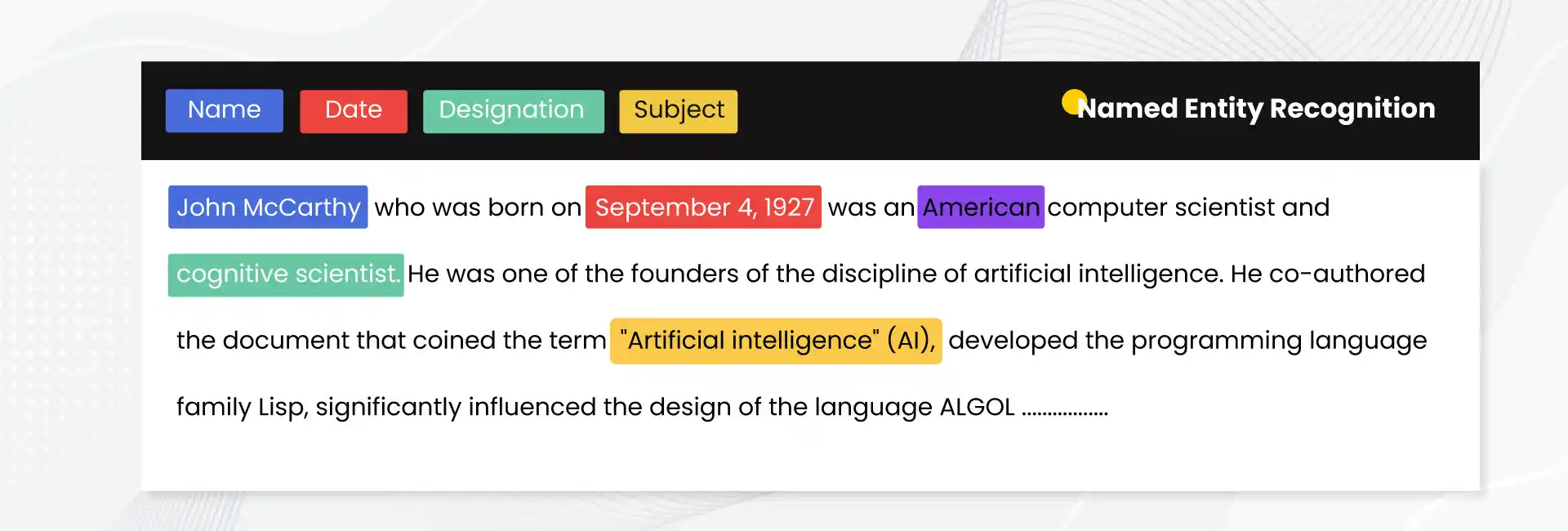

Named Entity Recognition (NER)

Named Entity Recognition (NER) is a prominent research area in NLP where the goal is to detect and classify named entities such as people, places, organizations, or dates in unstructured text.

NER has implications for a variety of tasks in NLP, such as information extraction, data analysis, or knowledge graph construction.

BERT LLM's ability to comprehend the context of a sentence or paragraph makes it useful for named entity recognition.

BERT LLM can generate contextualized representations of words or phrases, which can help to identify and classify named entities more accurately. In NER tasks, BERT LLM can learn the patterns and relationships between words that can indicate the presence of named entities.

BERT LLM can also recognize newly introduced or unusual named entities by leveraging its understanding of semantic relationships between words.
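
NER with BERT is usually set up as token classification: the model assigns each token a tag, often in the BIO scheme (B- begins an entity, I- continues it, O is outside any entity). The sketch below shows how such tags are grouped into entities. The tags are written by hand to stand in for model predictions, and `decode_bio` is a hypothetical helper name.

```python
def decode_bio(tokens, tags):
    """Group B-/I- tagged tokens into (entity_text, entity_type) pairs."""
    entities = []
    current, current_type = [], None
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            if current:
                entities.append((" ".join(current), current_type))
            current, current_type = [token], tag[2:]
        elif tag.startswith("I-") and current and tag[2:] == current_type:
            current.append(token)
        else:
            # "O" or an inconsistent I- tag closes any open entity.
            if current:
                entities.append((" ".join(current), current_type))
            current, current_type = [], None
    if current:
        entities.append((" ".join(current), current_type))
    return entities


tokens = ["Ada", "Lovelace", "visited", "Google", "in", "London"]
# Hand-written tags standing in for a fine-tuned model's predictions.
tags = ["B-PER", "I-PER", "O", "B-ORG", "O", "B-LOC"]

entities = decode_bio(tokens, tags)
print(entities)
```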

Natural Language Inference (NLI)

Natural Language Inference (NLI) is the task of determining the relationship between two sentences: whether the first entails the second, contradicts it, or is neutral toward it. BERT LLM can be fine-tuned for this task with strong results.

This is useful for a variety of tasks, including fact-checking, identifying fake news, or improving chatbots' ability to understand complex conversations.

One of the key advantages of BERT LLM for NLI is its ability to contextualize words. BERT LLM generates contextual representations for words, which allows for a better understanding of their meaning and relationship to other words.

By leveraging this contextual information, BERT LLM can determine the relationship between two sentences more accurately.
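
For sentence-pair tasks such as NLI, BERT receives both sentences in a single sequence: a `[CLS]` token, the first sentence, a `[SEP]` token, the second sentence, and a final `[SEP]`, with segment ids marking which sentence each token belongs to. The sketch below builds that input from pre-split tokens and maps made-up logits to a label. The label order in `NLI_LABELS` and the function names are assumptions for illustration.

```python
def build_pair_input(premise_tokens, hypothesis_tokens):
    """Join two token lists as [CLS] A [SEP] B [SEP] with segment ids."""
    tokens = ["[CLS]"] + premise_tokens + ["[SEP]"]
    segment_ids = [0] * len(tokens)
    tokens += hypothesis_tokens + ["[SEP]"]
    segment_ids += [1] * (len(hypothesis_tokens) + 1)
    return tokens, segment_ids


# Assumed label order; a real model defines its own mapping.
NLI_LABELS = ["entailment", "neutral", "contradiction"]


def predict_relation(logits):
    """Return the label with the highest score."""
    idx = max(range(len(logits)), key=logits.__getitem__)
    return NLI_LABELS[idx]


premise = ["a", "man", "sleeps"]
hypothesis = ["a", "man", "is", "awake"]
tokens, segment_ids = build_pair_input(premise, hypothesis)
print(tokens)
print(segment_ids)

# Made-up logits standing in for a fine-tuned model's output.
relation = predict_relation([0.2, 0.5, 3.1])
print(relation)
```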

Conclusion

In conclusion, BERT (Bidirectional Encoder Representations from Transformers) LLM is a powerful tool for natural language processing (NLP).

Its applications in NLP are widespread, including Natural Language Inference (NLI), Named Entity Recognition (NER), sentiment analysis, question answering, and text classification.

BERT LLM's ability to generate contextualized representations of words and phrases has revolutionized NLP. As further research is conducted and more advancements are made, it's expected that more groundbreaking applications of BERT LLM will be discovered, making NLP more accurate, efficient, and useful than ever before.

Leveraging its bidirectional context understanding, BERT has significantly improved the accuracy and efficiency of NLP models.

Furthermore, a study by Gartner estimates that by 2025, natural language processing will be a standard component of 90% of modern applications (Source: Gartner, "Gartner Top Strategic Technology Trends for 2023," 2022).

The versatility of BERT LLMs makes them invaluable tools for professionals and enthusiasts in NLP, machine learning, and artificial intelligence.

Data scientists, NLP researchers, developers, and industry professionals seeking to harness the power of BERT for their projects can explore its applications across different domains.

BERT LLMs represent a pivotal advancement in NLP, offering unparalleled capabilities and opening doors to new possibilities in understanding and processing human language.

Frequently Asked Questions (FAQs)

What are some additional applications of BERT LLM?

In addition to NLI and NER, BERT LLM is widely used in sentiment analysis, question answering, text classification, machine translation, and text generation tasks, enhancing accuracy and understanding of natural language.

What are the advantages and disadvantages of BERT?

BERT has the advantage of contextual word understanding, improving performance in NLP tasks.

However, it is computationally expensive to train and to run. Additionally, BERT may handle rare or unseen words poorly, because its tokenizer breaks them into subword pieces, and its inference latency can make real-time use difficult without optimization.
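
BERT's tokenizer (WordPiece) deals with unfamiliar words by splitting them into known subword pieces, using a greedy longest-match-first search; continuation pieces are marked with `##`. The sketch below is a simplified version of that search with a tiny made-up vocabulary, so it illustrates the idea rather than reproducing the real tokenizer.

```python
def wordpiece(word, vocab, unk="[UNK]"):
    """Greedy longest-match-first split of one word into subword pieces."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        match = None
        while start < end:
            piece = word[start:end]
            if start > 0:
                piece = "##" + piece  # continuation marker
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            return [unk]  # no piece fits, so the whole word is unknown
        pieces.append(match)
        start = end
    return pieces


# Tiny made-up vocabulary; the real one has tens of thousands of entries.
vocab = {"token", "##ization", "##ize", "un", "##believ", "##able", "play"}
print(wordpiece("tokenization", vocab))
print(wordpiece("unbelievable", vocab))
print(wordpiece("xyz", vocab))
```

A word split into many small pieces still gets a representation, but its meaning is spread across fragments, which is one reason rare words can be harder for the model.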

What are the features of BERT?

BERT's features include bidirectional training, attention mechanisms to capture word relationships, transformer architecture, and the ability to handle masked language modeling and next sentence prediction tasks. BERT's key feature is its ability to generate contextualized word representations.
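
Masked language modeling works by hiding some input tokens and training the model to recover them. In the original recipe, about 15% of tokens are selected; of those, 80% are replaced with `[MASK]`, 10% with a random token, and 10% are left unchanged. The sketch below approximates this by selecting each token independently with probability `mask_prob`. The function name, toy vocabulary, and seed handling are illustrative assumptions.

```python
import random


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=0):
    """Return (masked_tokens, labels); labels are None where nothing is predicted."""
    rng = random.Random(seed)
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            labels.append(token)  # the model must recover this token
            roll = rng.random()
            if roll < 0.8:
                masked.append("[MASK]")           # 80%: mask symbol
            elif roll < 0.9:
                masked.append(rng.choice(vocab))  # 10%: random token
            else:
                masked.append(token)              # 10%: keep original
        else:
            labels.append(None)
            masked.append(token)
    return masked, labels


tokens = ["the", "cat", "sat", "on", "the", "mat"]
toy_vocab = ["dog", "ran", "blue"]  # made-up vocabulary
masked, labels = mask_tokens(tokens, toy_vocab, mask_prob=0.15, seed=0)
print(masked)
print(labels)
```

Leaving some selected tokens unmasked or swapped means the model cannot rely on `[MASK]` always marking the positions it has to predict.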

What are the different types of BERT?

Different types of BERT models include BERT-Base, BERT-Large, and BERT-Multilingual.

BERT-Base has 12 layers and 110 million parameters, while BERT-Large has 24 layers and 340 million parameters. BERT-Multilingual is trained on multiple languages and enables cross-lingual transfer learning.
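
The parameter counts follow from the model dimensions. The sketch below adds up the weights and biases of the embeddings, the Transformer layers, and the pooling layer. The hidden sizes (768 and 1024), feed-forward sizes (3072 and 4096), vocabulary size (30,522, as in the English uncased release), and 512 positions are not stated above and are filled in here as assumptions from the published configurations.

```python
def bert_parameter_count(layers, hidden, intermediate,
                         vocab_size=30522, max_positions=512, type_vocab=2):
    """Count weights and biases in a BERT encoder with a pooling layer."""
    layer_norm = 2 * hidden  # scale and bias
    # Word, position, and segment embeddings, followed by a layer norm.
    embeddings = (vocab_size + max_positions + type_vocab) * hidden + layer_norm
    # Query, key, value, and output projections, followed by a layer norm.
    attention = 4 * (hidden * hidden + hidden) + layer_norm
    # Two dense layers (expand, then project back), followed by a layer norm.
    feed_forward = (hidden * intermediate + intermediate
                    + intermediate * hidden + hidden + layer_norm)
    pooler = hidden * hidden + hidden
    return embeddings + layers * (attention + feed_forward) + pooler


base = bert_parameter_count(layers=12, hidden=768, intermediate=3072)
large = bert_parameter_count(layers=24, hidden=1024, intermediate=4096)
print(f"{base:,}")   # 109,482,240
print(f"{large:,}")  # 335,141,888
```

These totals round to the commonly quoted figures of about 110 million and 340 million parameters.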

Is BERT used for feature extraction?

Yes, BERT can be used for feature extraction. By using pre-trained BERT models and extracting the last hidden layer's activations, BERT can capture contextualized representations of words, which can be used as features for downstream NLP tasks.
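
To turn per-token activations into a single feature vector for a sentence, a common choice is to average the token vectors while ignoring padding. The sketch below does this in plain Python on toy 2-dimensional vectors standing in for the last hidden layer. Mean pooling is one option among several (using the `[CLS]` vector is another), and `mean_pool` is a hypothetical helper name.

```python
def mean_pool(hidden_states, attention_mask):
    """Average token vectors, skipping padding positions (mask value 0)."""
    dim = len(hidden_states[0])
    total = [0.0] * dim
    count = 0
    for vector, keep in zip(hidden_states, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                total[i] += value
    if count == 0:
        raise ValueError("attention_mask selects no tokens")
    return [value / count for value in total]


# Toy activations: two real tokens followed by one padding position.
hidden_states = [[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]]
attention_mask = [1, 1, 0]

features = mean_pool(hidden_states, attention_mask)
print(features)  # [2.0, 3.0]
```

The resulting vector can be fed to a lightweight downstream model, such as a logistic regression classifier, without fine-tuning BERT itself.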

How to use BERT for a chatbot?

To use BERT for chatbot applications, the model can be fine-tuned on conversational data.

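By training BERT on question and answer pairs, it can learn to select or rank more contextually appropriate responses, improving the chatbot's conversational abilities and understanding of user queries. Because BERT is an encoder, it is usually paired with a retrieval step or a separate generation component rather than writing replies on its own.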

How does BERT LLM improve chatbot conversations?

BERT LLM's understanding of contextual language allows chatbots to comprehend complex conversations better.

By fine-tuning BERT LLM for NLI, chatbots can provide more accurate and meaningful responses, enhancing the user experience.