What is Algorithmic Bias?

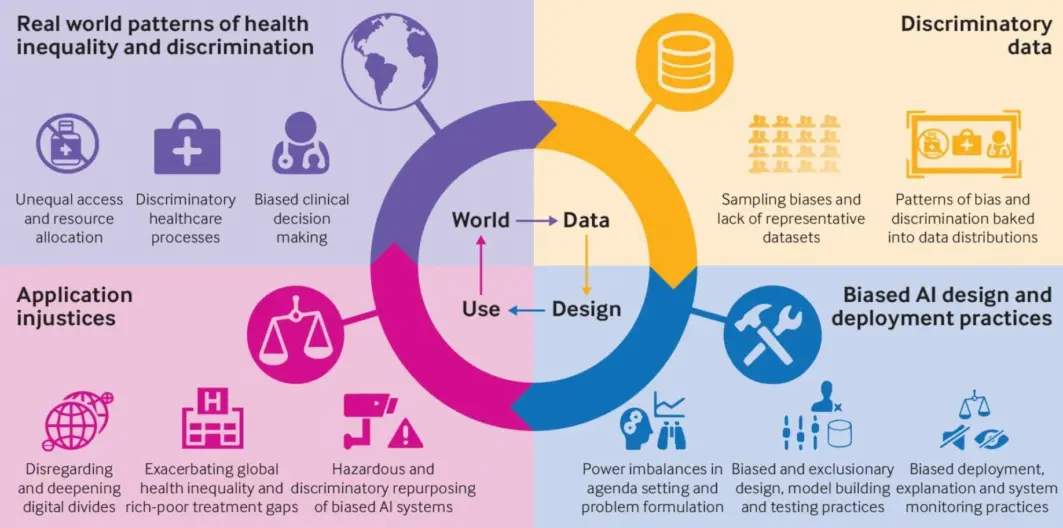

Algorithmic bias, or AI bias, occurs when a computer system reflects the implicit values of the humans who are involved in coding, collecting, selecting, or using data to train the algorithm. This system bias can replicate and propagate existing biases, often unintentionally or unconsciously, resulting in unfair outcomes or discrimination.

What Algorithmic Bias Isn't

Algorithmic bias isn't necessarily the deliberate introduction of prejudice into artificial intelligence systems. It often arises from human oversight or from discrimination already embedded in the data, without any conscious effort to skew outcomes. Even so, its effects can be harmful, especially when AI systems make decisions without human intervention.

Understanding the Causes of Algorithmic Bias

One of the primary causes of algorithmic bias is biased data. If the training data used to develop an algorithm isn’t representative of the intended users or the realistic scenarios where the AI will operate, the algorithm can generate skewed results.
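One simple way to check for this kind of mismatch is to compare each group's share of the training data with its share of the population the system is meant to serve. The sketch below is only an illustration: the function name `representation_gaps`, the group labels, and the population shares are hypothetical and do not come from any real dataset.

```python
from collections import Counter

def representation_gaps(train_groups, population_shares):
    """Return (training share - population share) for each group.

    Negative values mean the group is under-represented in the
    training data relative to the reference population.
    """
    if not train_groups:
        raise ValueError("train_groups must not be empty")
    counts = Counter(train_groups)
    total = len(train_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }

# Hypothetical example: group B is half of the population
# but only 20% of the training records.
train_groups = ["A"] * 80 + ["B"] * 20
population_shares = {"A": 0.5, "B": 0.5}
gaps = representation_gaps(train_groups, population_shares)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
```

A large negative gap for a group suggests the model will see too few examples of that group to learn reliable patterns for it.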

Prejudiced Programming

Algorithms reflect the biases of their human creators. If an algorithm's creators consciously or unconsciously carry their prejudices into the programming process, this can result in biased outcomes.

Interpretation Bias

Interpretation bias happens when an AI's output is misinterpreted or over-interpreted, leading to decisions that accentuate prejudice.

Feedback Loops

Algorithmic bias can be perpetuated and made worse by feedback loops. Because algorithms learn and evolve, biases within input or output can become magnified over time.
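The magnifying effect can be illustrated with a deliberately simplified toy model. In this sketch, two neighborhoods have the same true incident rate, but one starts with slightly more recorded incidents. Each round, a hypothetical "hot spot" policy sends most patrols to whichever neighborhood has more records, and more patrols produce more records there. The function name, the policy, and all numbers are assumptions made for illustration, not a description of any real system.

```python
def simulate_feedback_loop(initial_records, true_rate=0.1,
                           patrols_per_round=100, hot_share=0.8, rounds=20):
    """Track neighborhood 0's share of recorded incidents over time.

    Both neighborhoods share the same true_rate, so any growth in the
    recorded share comes only from where patrols are sent.
    """
    records = list(initial_records)
    history = [records[0] / sum(records)]
    for _ in range(rounds):
        # The neighborhood with more records gets most of the patrols
        # (ties go to neighborhood 0).
        hot = 0 if records[0] >= records[1] else 1
        patrols = [patrols_per_round * (1 - hot_share)] * 2
        patrols[hot] = patrols_per_round * hot_share
        # Recorded incidents depend on patrol presence, not on any
        # real difference between the neighborhoods.
        for i in range(2):
            records[i] += patrols[i] * true_rate
        history.append(records[0] / sum(records))
    return history

history = simulate_feedback_loop([11, 10])
print(f"initial share: {history[0]:.2f}, final share: {history[-1]:.2f}")
```

A small initial difference in the records grows round after round, even though the underlying rates are identical. The data the system collects is shaped by its own earlier decisions.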

Consequences of Algorithmic Bias

Algorithmic bias can reinforce societal stereotypes, which can be damaging to marginalized groups.

Discriminatory Decisions

Algorithms are used to predict individual behavior in a multitude of situations, from job applications to loan approvals. When these predictions are based on biased data or coded preferences, they can lead to discriminatory outcomes.

Polarization

In the world of content recommendation, algorithmic bias can lead to filter bubbles, reinforcing a user’s existing opinions or biases, and contributing to societal polarization.

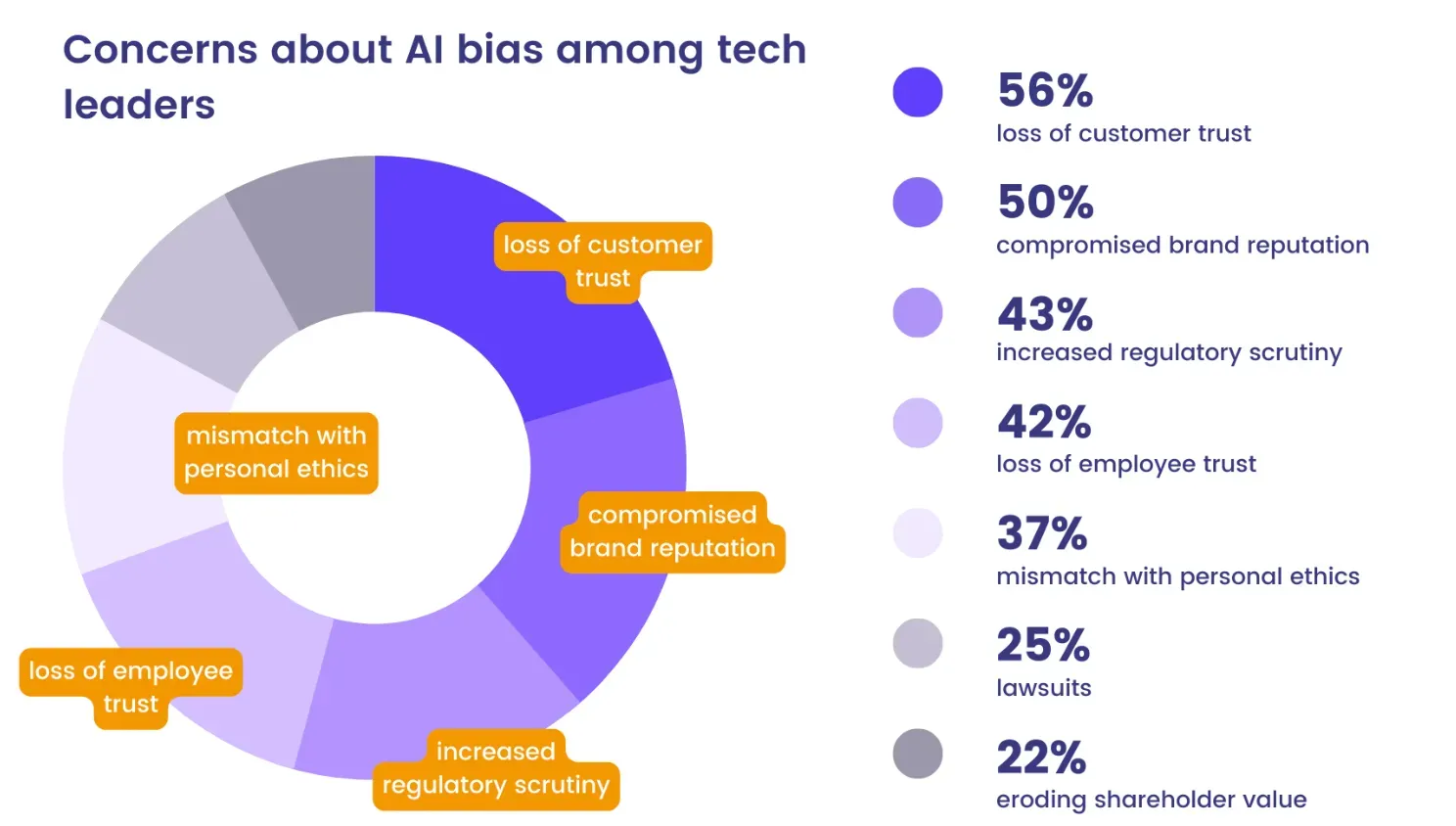

Eroding Trust in AI Systems

Algorithmic bias can undermine public trust in artificial intelligence and its use in decision-making.

Mitigating Algorithmic Bias

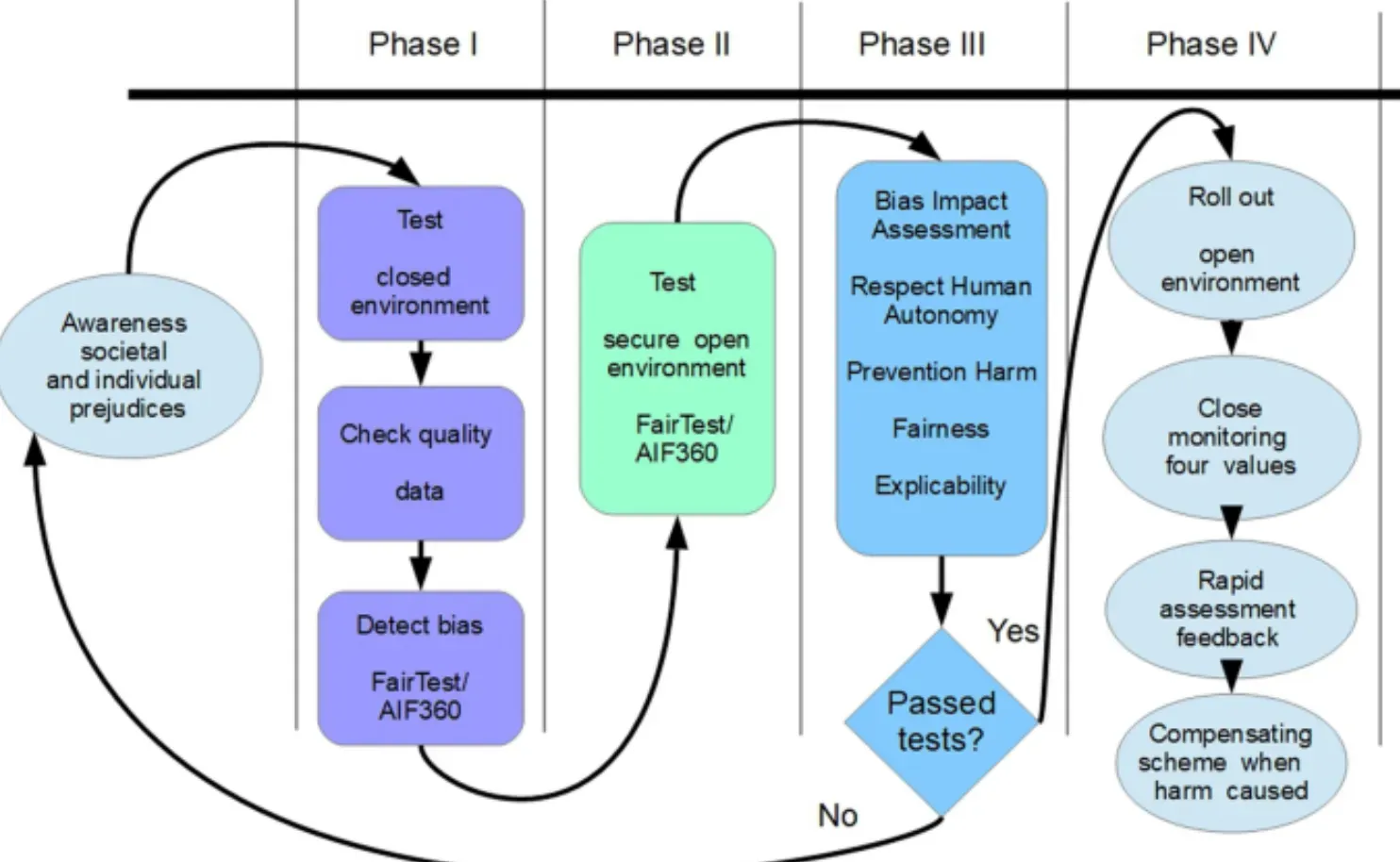

Training algorithms on data that is representative of the people and scenarios the AI will make predictions or decisions about helps limit bias.
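When collecting more representative data is not possible, one common workaround is to reweight the existing examples so that each group carries its intended share of the total weight. The following is a minimal sketch of that idea; the function name `balancing_weights` and the target shares are hypothetical, and reweighting is only one of several possible techniques.

```python
from collections import Counter

def balancing_weights(groups, target_shares):
    """Return one weight per example so that each group's total
    weight is proportional to its target share."""
    counts = Counter(groups)
    total = len(groups)
    weights = []
    for group in groups:
        observed_share = counts[group] / total
        # Under-represented groups get weights above 1,
        # over-represented groups get weights below 1.
        weights.append(target_shares[group] / observed_share)
    return weights

# Hypothetical example: group B is 20% of the data but should count for 50%.
groups = ["A"] * 80 + ["B"] * 20
weights = balancing_weights(groups, {"A": 0.5, "B": 0.5})
print(weights[0], weights[-1])
```

Many training libraries accept per-example weights of this kind. Reweighting cannot add information that was never collected, so it complements rather than replaces better data gathering.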

Transparency in Algorithm Design

By making the design and decision-making process of algorithms transparent, it's easier to identify and correct bias.

Continual Auditing

Frequent audits make it possible to assess and address bias continually, since bias can creep in at many stages of an AI system's lifecycle.
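A basic audit might compare how often each group receives a favorable decision. The sketch below computes per-group selection rates and the ratio of the lowest rate to the highest. The function names and the sample decisions are hypothetical. A commonly cited rule of thumb in US employment contexts treats a ratio below 0.8 as a signal worth investigating, though the right threshold depends on the setting.

```python
def selection_rates(decisions, groups):
    """Return the fraction of favorable decisions for each group."""
    totals, positives = {}, {}
    for decision, group in zip(decisions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if decision else 0)
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Return the lowest selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so the rates do not differ
    return min(rates.values()) / highest

# Hypothetical audit sample: 6 of 10 approvals for group A, 3 of 10 for group B.
decisions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
groups = ["A"] * 10 + ["B"] * 10
rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

Running a check like this on a schedule, and on each new model version, turns auditing into a routine step rather than a one-off review.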

Inclusion and Diversity in AI Development Teams

Diverse development teams can bring a broader range of perspectives to the design and implementation of algorithms, potentially catching biases that a more homogeneous team might miss.

Ethics in Alleviating Algorithmic Bias

AI developers must ensure that they follow principles of ethical AI design to minimize bias.

Regulatory Oversight

Regulatory oversight can provide accountability and enforce guidelines on how AI systems are designed and utilized to limit bias-driven harm.

Explainable AI

AI should be designed so that its decisions can be understood and challenged by the people those decisions affect.

Right to Redress

There must be a robust appeals or redress mechanism for those negatively impacted by an AI decision, and efforts should be made to mitigate harm.

Algorithmic Bias in Everyday Life

Algorithmic bias can affect the ads we see online. Existing biases can lead to situations where certain jobs or opportunities are advertised to specific gendered or racial groups disproportionately.

Biased Policing

Predictive policing algorithms can reinforce and exacerbate existing biases, resulting in targeted over-policing of certain neighborhoods or groups.

Healthcare Disparities

Biased algorithms can lead to healthcare disparities, with AI tools disproportionately misdiagnosing diseases or incorrectly prioritizing care based on gender, race, or other societal biases reflected in the training data.

Frequently Asked Questions (FAQs)

What is algorithmic bias, and what is an example of it causing real-world harm?

Algorithmic bias occurs when an algorithm produces unfair or inaccurate results due to biases in the data it's trained on.

This can lead to discrimination in areas like loan approvals or job applications.

A significant example is the use of predictive algorithms in the criminal justice system, which have been found to over-predict recidivism for Black defendants compared with white defendants.
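Over-prediction of this kind can be measured as a difference in false positive rates: among people who did not go on to reoffend, how many were still flagged as high risk in each group? The sketch below shows the calculation on a small synthetic sample. The function name and all values are invented for illustration and are not figures from any real system.

```python
def false_positive_rates(y_true, y_pred, groups):
    """Return, per group, the share of actual negatives predicted positive."""
    negatives, false_positives = {}, {}
    for actual, predicted, group in zip(y_true, y_pred, groups):
        if actual == 0:
            negatives[group] = negatives.get(group, 0) + 1
            false_positives[group] = (
                false_positives.get(group, 0) + (1 if predicted == 1 else 0)
            )
    return {g: false_positives[g] / negatives[g] for g in negatives}

# Synthetic sample: 1 = reoffended (y_true) or flagged high risk (y_pred).
y_true = [0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0]
groups = ["A"] * 6 + ["B"] * 6
fpr = false_positive_rates(y_true, y_pred, groups)
print(fpr)
```

In this synthetic sample, people in group A who did not reoffend were wrongly flagged twice as often as those in group B, which is the pattern a fairness audit would look for.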

Are algorithmic bias and discrimination the same thing?

Not necessarily. Discrimination is often understood as intentional unfair treatment, while algorithmic bias can occur unintentionally due to issues in data, programming, or model assumptions. However, algorithmic bias can lead to discriminatory outcomes.

Can algorithmic bias be completely eliminated?

It's difficult to eliminate bias completely. However, proper measures, such as fair representation in data, continual auditing, and diverse development teams, can significantly reduce it.

Why is it important to address algorithmic bias in AI?

AI is increasingly being used to make or inform decisions that affect people's lives, from hiring decisions to healthcare. It is, therefore, important to ensure these decisions are fair and do not discriminate against or disadvantage certain individuals or groups.

Are there any regulations addressing algorithmic bias?

Currently, there is limited legislation specifically targeting algorithmic bias. However, with increasing awareness of the issue, regulatory oversight is likely to grow in the future.