Introduction

The market for generative artificial intelligence, which was valued at $8.2 billion in 2021, is expected to increase at a CAGR of 32% from 2022 to 2031 to reach $126.5 billion.

Welcome to the world of transformers, the latest buzzword in AI, where cutting-edge technology is reshaping the field.

In this blog, we'll unravel the wonders of transformers, revolutionizing how machines learn and process information. Transformers capture context and relationships between data elements efficiently with a parallelized architecture. They excel in NLP tasks.

Transformers find applications in chatbots, machine translation, sentiment analysis, image recognition, object detection, and more. Tech giants like Google, Facebook, and OpenAI drive innovation in this field.

We'll guide you through implementation steps, the availability of pre-trained models and libraries, and challenges like training time, computational resources, and interpretability. But the future holds promise, with architectural advancements and transformative impacts across industries.

Join us on this extraordinary adventure as we witness the power of transformers. Brace yourself for the AI revolution—it's time to dive in!

What are Transformers?

In the context of AI, transformers are a type of neural network architecture that differs from earlier sequence models. Unlike recurrent models, which process a sequence one element at a time, transformers employ a parallelized architecture to process data more efficiently. This structure allows transformers to capture the context and relationships between different data elements, making them particularly adept at tasks involving natural language processing (NLP).

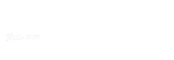

How do Transformers Work?

To understand how transformers work, let's closely examine their architecture and components. A transformer model's essential components are self-attention mechanisms. These mechanisms allow the model to generate its output by focusing on different parts of the input sequence.

Thanks to self-attention, the transformer can weigh the importance of every other element in the sequence when processing a given element, and so learn meaningful representations of the input data.
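
To make the idea concrete, here is a minimal sketch of single-head scaled dot-product self-attention in NumPy. It is an illustration, not a production implementation: the function names, the tiny dimensions, and the random projection matrices are all assumptions chosen for the example, and a real model would learn the projections during training.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum before exponentiating for numerical stability.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x: (seq_len, d_model) input; w_q, w_k, w_v: (d_model, d_k) projections.
    Returns (output, weights), where weights[i, j] is how strongly
    position i attends to position j.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

# Hypothetical toy input: 4 tokens, each an 8-dimensional vector.
rng = np.random.default_rng(0)
seq_len, d_model, d_k = 4, 8, 8
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

output, weights = self_attention(x, w_q, w_k, w_v)
print(weights.round(2))  # one row of attention weights per token
```

Each row of `weights` sums to 1, so every output vector is a weighted average of the value vectors of all tokens in the sequence.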

The original transformer architecture consists of an encoder and a decoder. The encoder processes the input data, while the decoder generates the output based on the encoded information. Each encoder and decoder layer in a transformer contains sub-layers, including self-attention layers and feed-forward neural networks.

These sub-layers work collaboratively to process and transform the input data, resulting in rich, context-aware representations.

Why are Transformers Important in AI?

Transformers have gained immense importance in AI due to their numerous advantages over traditional models.

Here are a few key reasons why transformers have emerged as game-changers in the field:

Improved Contextual Understanding

Transformers excel in capturing the context and dependencies between different elements in a sequence. This ability makes them highly effective in machine translation, sentiment analysis, and text summarization tasks. By understanding the context, transformers can generate more accurate and meaningful outputs, leading to better overall performance.

Long-Term Dependency Handling by Transformers

Traditional models struggle to capture long-term dependencies in sequences. Transformers overcome this limitation through their self-attention mechanism, which allows the model to attend to any part of the input sequence, irrespective of its position. As a result, transformers can capture long-term dependencies effectively, making them well-suited for tasks that involve long-range relationships.

Parallel Processing

Transformers leverage parallelization to process data more efficiently. Unlike sequential models, which process data one element at a time, transformers can process all elements of a sequence simultaneously. This parallel processing capability leads to faster training and, in many cases, faster inference, making real-time applications of transformer models practical.
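
The contrast can be sketched in a few lines of NumPy. The recurrent pass below stands in for a "sequential model" and the pairwise score computation stands in for the attention step; both functions, their names, and the toy weights are assumptions made for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
seq_len, d = 6, 4
x = rng.normal(size=(seq_len, d))       # hypothetical toy sequence
w_in = rng.normal(size=(d, d)) * 0.1    # hypothetical recurrent weights
w_h = rng.normal(size=(d, d)) * 0.1

def recurrent_pass(x, w_in, w_h):
    # Step t needs the hidden state from step t-1, so the loop
    # cannot be parallelized across positions.
    h = np.zeros(w_h.shape[0])
    states = []
    for x_t in x:
        h = np.tanh(x_t @ w_in + h @ w_h)
        states.append(h)
    return np.stack(states)

def attention_scores(x):
    # Every pair of positions is compared in one matrix product;
    # no position has to wait for another.
    return x @ x.T / np.sqrt(x.shape[-1])

rnn_states = recurrent_pass(x, w_in, w_h)
scores = attention_scores(x)
print(rnn_states.shape, scores.shape)
```

The loop in `recurrent_pass` runs once per position, whereas `attention_scores` is a single matrix multiplication that hardware such as GPUs can spread across many cores.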

Transfer Learning and Pre-training Transformers

Another significant advantage of transformers is their compatibility with transfer learning and pre-training. Pre-training transformer models on large corpora of unlabeled data allows them to learn general representations of language. These pre-trained models can then be fine-tuned on specific downstream tasks, requiring less labeled data and leading to improved performance.

Where are Transformers Used?

Transformers have found applications in various industries, enabling remarkable advancements in AI. Let's explore some key areas where transformers are making a significant impact.

NLP Applications

- Chatbots: Transforming customer service experiences, chatbots powered by transformers can understand and respond to natural language queries effectively.

- Machine Translation: Transformers have revolutionized machine translation, enabling more accurate and contextually relevant translations between languages.

- Sentiment Analysis: By leveraging transformers, sentiment analysis models can accurately determine emotions and opinions expressed in text, making it valuable for market research and social media analysis.

Computer Vision Applications

- Image Recognition: Transformers have proven to be highly effective in image recognition tasks, allowing machines to identify and categorize objects within images accurately.

- Object Detection: Transformers have enabled breakthroughs in object detection, allowing AI systems to detect and localize multiple objects within images or videos.

Recommendation Systems

- Personalized Content Recommendations: Transformers are used to power recommendation systems, delivering customized content suggestions to users based on their preferences and behavior.

Who is using Transformers?

Several leading tech companies and research institutions are at the forefront of utilizing transformers in their AI projects. Let's examine some influential players and discover how transformers drive application innovation.

Tech Giants

Google: Google has been leveraging transformers to enhance search algorithms, improve language understanding, and power various products such as Google Translate.

Facebook: Facebook utilizes transformers to enhance its news feed algorithms, optimize content ranking, and improve user experiences.

Research Institutions

OpenAI: As a leader in AI research, OpenAI has contributed significantly to the development of transformers, advancing their capabilities and exploring their potential across various applications.

Successful Case Studies and Applications

Transformers have already demonstrated remarkable success in real-world scenarios. Here are two case studies that showcase the power of transformers in AI applications:

Medical Diagnosis: Transformers have been used to develop models that can assist doctors in diagnosing diseases based on medical images, leading to more accurate and efficient diagnoses.

Autonomous Driving: Transformers have been applied in autonomous driving systems to improve object detection and perception, enabling safer and more reliable self-driving cars.

How to Implement Transformers?

Implementing transformers in AI projects involves several key steps. Here's an overview of the implementation process:

- Data Preparation: Collect and preprocess the data required for training the transformer model. This includes cleaning, organizing, and formatting the data.

- Model Architecture: Select an appropriate transformer architecture based on the specific task, such as BERT, GPT, or T5.

- Training: Train the transformer model using the prepared data. This typically requires significant computational resources and can take considerable time.

- Fine-tuning: Fine-tune the pre-trained transformer model on a task-specific dataset to improve its performance and adapt it to the application's specific requirements.

- Evaluation and Deployment: Evaluate the trained model's performance using appropriate metrics and deploy it in a production environment for real-world use.
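
As a rough illustration of the data preparation step, the sketch below turns raw text into padded token IDs and an attention mask, the input format transformer models typically expect. It assumes a simple whitespace tokenizer for readability; real models use subword tokenizers, and every name here (`build_vocab`, `encode_batch`, the `[PAD]` and `[UNK]` tokens) is a hypothetical choice for this example.

```python
PAD, UNK = "[PAD]", "[UNK]"

def build_vocab(texts):
    # Assign an integer ID to every distinct lowercase token seen in the data.
    vocab = {PAD: 0, UNK: 1}
    for text in texts:
        for token in text.lower().split():
            vocab.setdefault(token, len(vocab))
    return vocab

def encode_batch(texts, vocab):
    # Map tokens to IDs, falling back to UNK for unseen tokens.
    ids = [[vocab.get(tok, vocab[UNK]) for tok in t.lower().split()] for t in texts]
    max_len = max(len(seq) for seq in ids)
    # Pad every sequence to the same length; the mask marks real tokens with 1.
    input_ids = [seq + [vocab[PAD]] * (max_len - len(seq)) for seq in ids]
    attention_mask = [[1] * len(seq) + [0] * (max_len - len(seq)) for seq in ids]
    return input_ids, attention_mask

train_texts = ["the movie was great", "terrible plot"]
vocab = build_vocab(train_texts)
input_ids, attention_mask = encode_batch(["the plot was great", "awful"], vocab)
print(input_ids)       # [[2, 7, 4, 5], [1, 0, 0, 0]]
print(attention_mask)  # [[1, 1, 1, 1], [1, 0, 0, 0]]
```

The attention mask tells the model which positions are padding so that they can be ignored during attention.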

Availability of Pre-trained Transformer Models and Libraries

The availability of pre-trained transformer models and libraries has made implementing transformers more accessible and efficient. These resources, such as Hugging Face's Transformers library, provide a wide range of pre-trained models that can be readily used or fine-tuned for specific tasks, saving time and computational resources.
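
For example, a pre-trained sentiment classifier can be loaded in a few lines with the library's `pipeline` helper. This is a minimal sketch that assumes the `transformers` package and a backend such as PyTorch are installed, and that the machine can download the default model on first use. The wrapper function `classify_sentiment` is a hypothetical name for this example.

```python
def classify_sentiment(texts):
    # Imported lazily so that defining this function does not require
    # the library to be installed.
    from transformers import pipeline

    # With no model specified, the library selects its default checkpoint
    # for the task and downloads it on first use.
    classifier = pipeline("sentiment-analysis")

    # Each result is a dict containing a "label" and a "score".
    return classifier(texts)
```

Calling `classify_sentiment(["I love this product."])` returns one result per input text. For production use, it is usually better to name a specific model explicitly rather than rely on the default.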

Challenges and Limitations of Transformers

Transformers also come with several challenges and limitations.

Lengthy Training Time

Training transformers can be a lengthy process because of their complexity and large number of parameters. However, researchers and engineers continue to optimize training algorithms and leverage parallel computing techniques to reduce training time and improve efficiency.

Computational Resources

Transformers are hungry beasts when it comes to computational resources. Their appetite for processing power and memory can be a challenge for organizations with limited resources. But fear not: advancements in hardware technology, such as specialized AI accelerators and cloud computing, are paving the way for more accessible and cost-effective transformer deployments.

Interpretability

One of the ongoing challenges with transformers is their interpretability. Due to their complex architectures and vast number of parameters, understanding how these models arrive at their predictions can be challenging. Researchers are actively exploring methods to enhance interpretability, such as attention visualization techniques and model explanations, enabling users to gain insights into the decision-making process of transformers.

Future Trends in Transformer Technology

Transformer technology continues to evolve, and several trends point to where it is headed.

Advancements in Transformer Technology

The field of transformer technology is constantly evolving. Researchers are actively pushing the boundaries, exploring novel architectures, and developing more efficient training algorithms. Breakthroughs such as Performer models, which approximate attention using random features, and sparse transformers, which reduce computational requirements by attending to only a subset of positions, propel the field forward, opening new doors for AI applications.
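
One simple form of sparsity is a local window, where each position attends only to its nearby neighbors. The sketch below is an illustration under that assumption and does not reproduce any specific published model; the function names and the window size are hypothetical. For clarity it computes the full score matrix and then masks it, whereas an efficient implementation would skip the masked pairs entirely.

```python
import numpy as np

def local_attention_mask(seq_len, window):
    # True where position i may attend to position j, i.e. |i - j| <= window.
    idx = np.arange(seq_len)
    return np.abs(idx[:, None] - idx[None, :]) <= window

def masked_softmax(scores, mask):
    # Masked-out pairs are set to -inf so they get zero weight after the softmax.
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(scores)
    return exp / exp.sum(axis=-1, keepdims=True)

seq_len, window = 8, 1
mask = local_attention_mask(seq_len, window)

rng = np.random.default_rng(0)
scores = rng.normal(size=(seq_len, seq_len))  # hypothetical raw attention scores
weights = masked_softmax(scores, mask)

print(int(mask.sum()), "of", seq_len * seq_len, "pairs are kept")  # 22 of 64
```

With a fixed window, the number of kept pairs grows linearly with sequence length instead of quadratically, which is where the savings come from.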

Transformers Shaping the Future of AI

As transformers advance, their potential impact on various industries becomes more evident. From healthcare to finance and from retail to entertainment, transformers empower businesses with enhanced natural language processing, computer vision, and recommendation systems. Their capacity to understand and produce human-like language, analyze images, and offer personalized recommendations will change how we interact with technology.

Conclusion

Transformers have emerged as a game-changer in AI. Despite challenges with training time, computational resources, and interpretability, transformers are pushing the boundaries of what AI can achieve. With ongoing advancements and research, the future of transformer technology looks promising, with exciting opportunities for innovation and growth.

So, get ready to embrace the power of transformers and witness their transformative impact on our world.

Remember that we are only beginning to explore the vast possibilities of transformers, and their journey has just begun. Keep an open mind, keep up with new developments, and prepare for the AI revolution powered by transformers!

Frequently Asked Questions

What are Transformers in the context of AI Technology?

Transformers are a type of neural network architecture that has gained significant attention in AI research. They excel at processing sequential data, such as natural language, and have been instrumental in achieving state-of-the-art performance in various tasks.

How do Transformers differ from traditional neural networks?

Unlike traditional neural networks that process data sequentially or in fixed-length windows, Transformers leverage self-attention mechanisms to process entire sequences simultaneously, capturing global dependencies and improving context understanding.

What are the key applications of Transformers in AI?

Transformers have been successfully applied in a wide range of AI tasks, including machine translation, text generation, sentiment analysis, question answering, image recognition, and speech recognition, among others.

Can Transformers be used for language translation?

Absolutely! Transformers have revolutionized machine translation by enabling more accurate and fluent translations. Their ability to capture context and dependencies across long sentences has significantly improved translation quality.

How do Transformers handle long-range dependencies in data?

Transformers address long-range dependencies through self-attention mechanisms. This allows them to assign higher importance to relevant words or tokens while processing a particular word, effectively capturing long-range relationships within the sequence.

What are the advantages of using Transformers in natural language processing?

Transformers excel in natural language processing tasks due to their ability to model context, handle long sentences, capture dependencies, and generate coherent and contextually relevant responses, leading to more accurate and human-like language understanding.

Can Transformers generate human-like text?

Yes, Transformers have demonstrated impressive capabilities in generating human-like text. By training on large datasets and employing advanced language models, Transformers can generate coherent and contextually relevant text across various domains.

Are Transformers computationally expensive compared to other models?

Transformers are computationally demanding: self-attention compares every element of a sequence with every other element, so its cost grows quadratically with sequence length. However, advancements in hardware and techniques like model parallelism and efficient attention mechanisms have made training and inference with Transformers more feasible.
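
A quick back-of-the-envelope calculation shows how the attention score matrix alone grows with sequence length. This sketch assumes one score per pair of positions stored as a 4-byte float; the function name and default values are hypothetical, and a real model holds many such matrices across heads and layers.

```python
def attention_matrix_bytes(seq_len, num_heads=1, bytes_per_value=4):
    # One score per (query, key) pair per head: seq_len * seq_len entries.
    return seq_len * seq_len * num_heads * bytes_per_value

for n in (512, 1024, 2048):
    mib = attention_matrix_bytes(n) / 2**20
    print(f"{n:>5} tokens -> {mib:.0f} MiB per head")
# 512 tokens -> 1 MiB, 1024 tokens -> 4 MiB, 2048 tokens -> 16 MiB
```

Doubling the sequence length quadruples the size of the score matrix, which is why efficient attention mechanisms matter for long inputs.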

Can Transformers be used in computer vision tasks?

Yes, Transformers have shown promising results in computer vision tasks as well. Vision Transformers (ViTs) have been developed to process image data by treating it as a sequence of patches, achieving competitive performance in tasks like object detection and image classification.
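
The "sequence of patches" idea can be sketched with a few NumPy reshapes. This is a minimal illustration, assuming a 224x224 RGB image and 16x16 patches; the function name is hypothetical, and a full Vision Transformer would also project each patch to an embedding and add position information.

```python
import numpy as np

def image_to_patches(image, patch_size):
    """Split an (H, W, C) image into a sequence of flattened, non-overlapping patches."""
    h, w, c = image.shape
    assert h % patch_size == 0 and w % patch_size == 0, "image must divide evenly into patches"
    rows, cols = h // patch_size, w // patch_size
    # Separate the grid of patches from the pixels inside each patch.
    patches = image.reshape(rows, patch_size, cols, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)  # (rows, cols, patch, patch, C)
    # Flatten each patch into one vector: one "token" per patch.
    return patches.reshape(rows * cols, patch_size * patch_size * c)

image = np.zeros((224, 224, 3), dtype=np.float32)  # placeholder image
patches = image_to_patches(image, patch_size=16)
print(patches.shape)  # (196, 768)
```

The image becomes a sequence of 196 tokens, each a 768-dimensional vector, which a transformer can then process much like a sentence of 196 words.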

How can I leverage Transformers in my AI projects?

To leverage Transformers, you can use pre-trained models such as BERT, GPT, or T5, fine-tune them on your specific task and data, or explore open-source libraries like Hugging Face's Transformers, which provide access to a wide range of pre-trained models and utilities for easy integration.